一、背景

在近期的項(xiàng)目推進(jìn)過程中,我們計(jì)劃采用Oracle19c RAC數(shù)據(jù)庫。為確保項(xiàng)目落地階段的高效實(shí)施,同時(shí)沉淀標(biāo)準(zhǔn)化安裝經(jīng)驗(yàn),特在本地虛擬機(jī)環(huán)境中開展全流程模擬搭建工作。本文將詳細(xì)記錄從環(huán)境準(zhǔn)備、集群配置到數(shù)據(jù)庫實(shí)例部署的完整過程,期望通過技術(shù)實(shí)踐分享,為朋友們提供幫助。

聲明:本文中的ip都是本地虛擬機(jī)中設(shè)置的ip,不涉及生產(chǎn),如有雷同純屬巧合!

二、安裝前規(guī)劃

網(wǎng)絡(luò)規(guī)劃

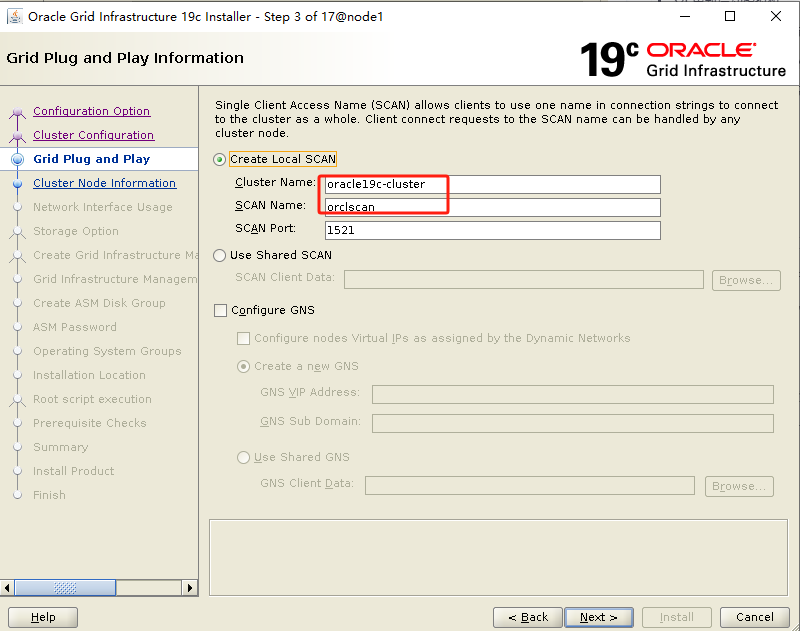

| 主機(jī)名 | public ip | vip ip | private ip | scan ip | scan name |

|---|---|---|---|---|---|

| node1 | 192.*.*.60 | 192.*.*.70 | 10.*.*.11 | 192.*.*.75 | orclscan |

| node2 | 192.*.*.62 | 192.*.*.72 | 10.*.*.12 | 192.*.*.75 | orclscan |

三、主機(jī)配置

本次使用Centos7.9操作系統(tǒng),安裝操作系統(tǒng)過程略。

共享存儲(chǔ)配置(node1 & node2)

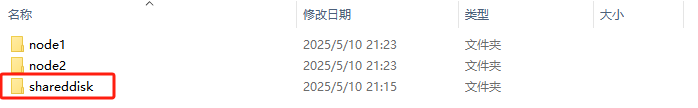

windows主機(jī)上創(chuàng)建共享磁盤目錄:

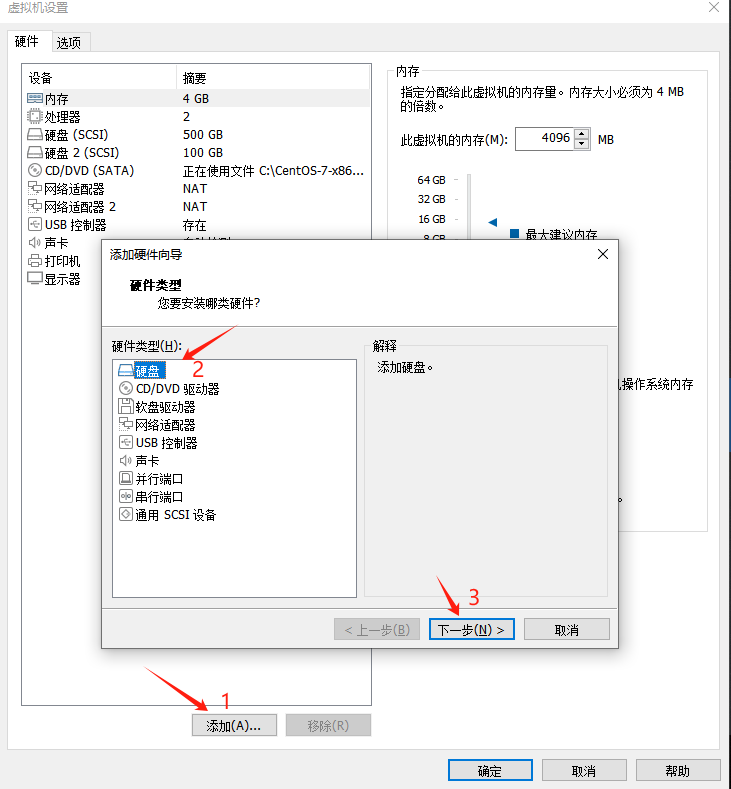

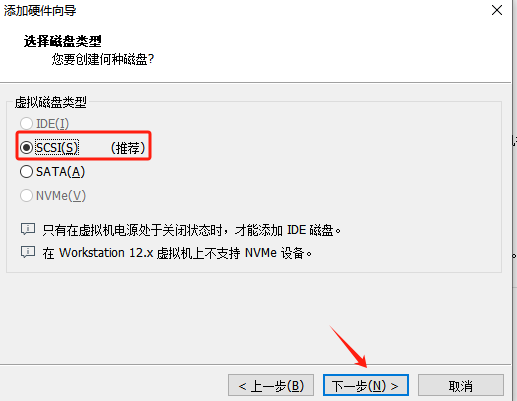

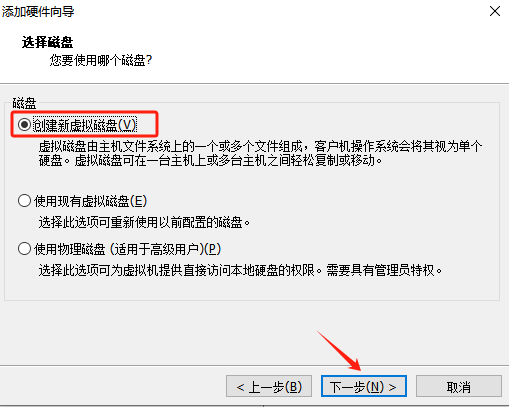

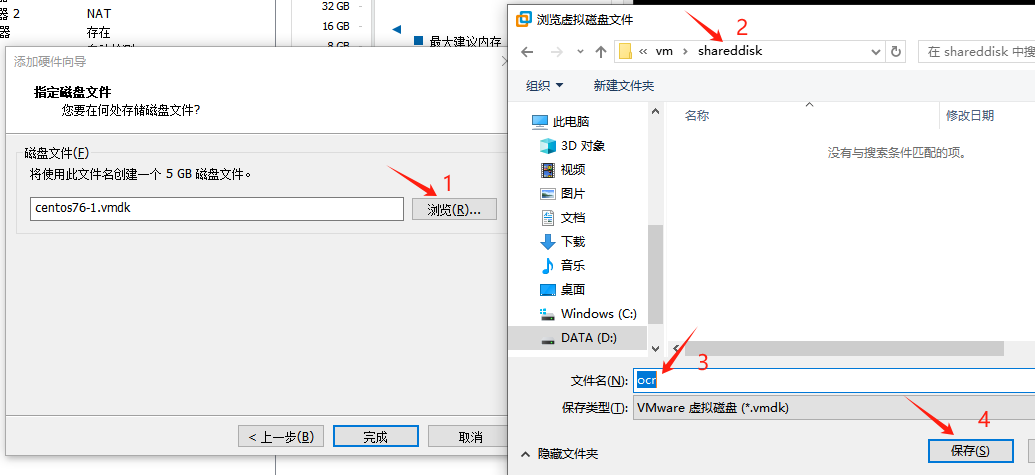

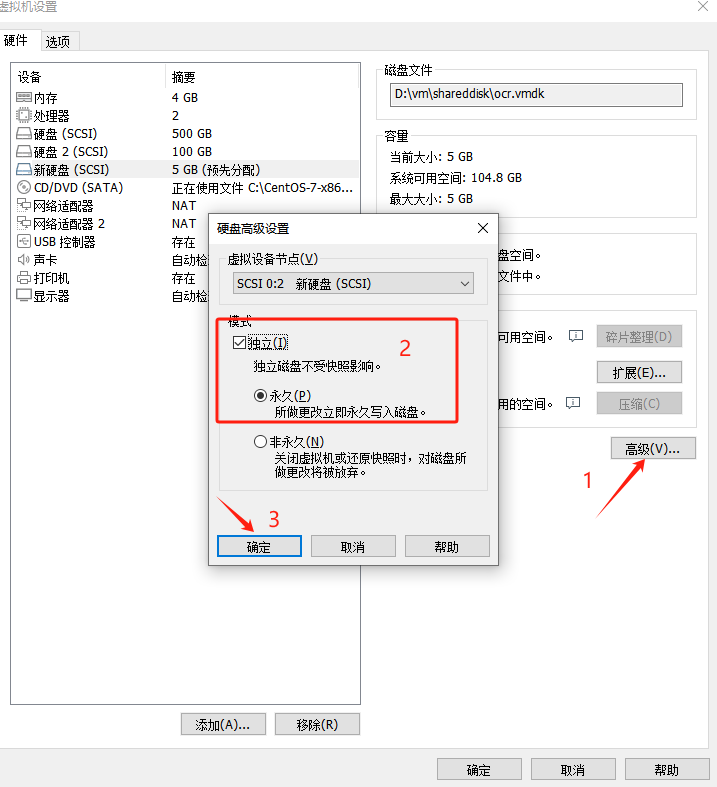

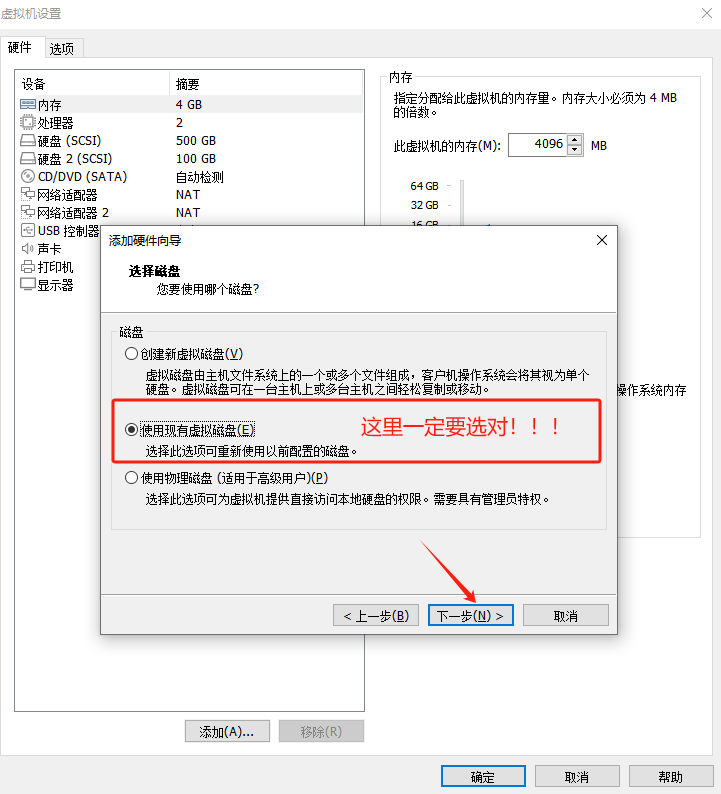

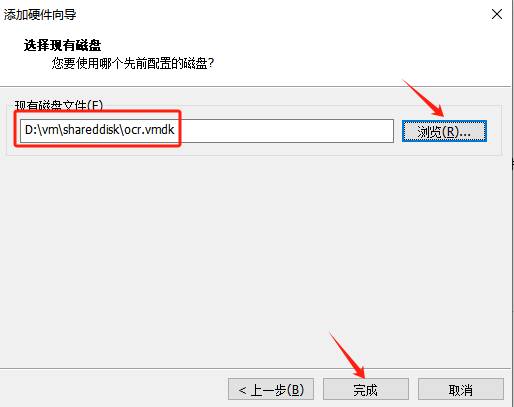

添加共享磁盤:

在node1節(jié)點(diǎn)操作:

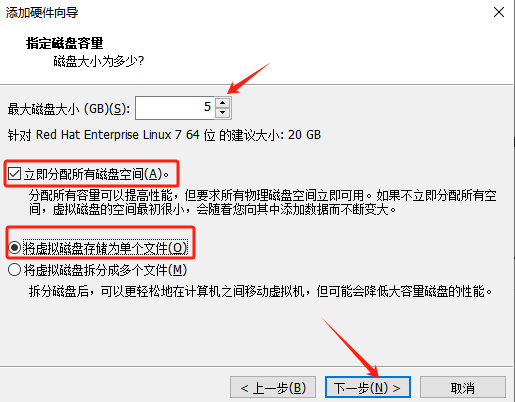

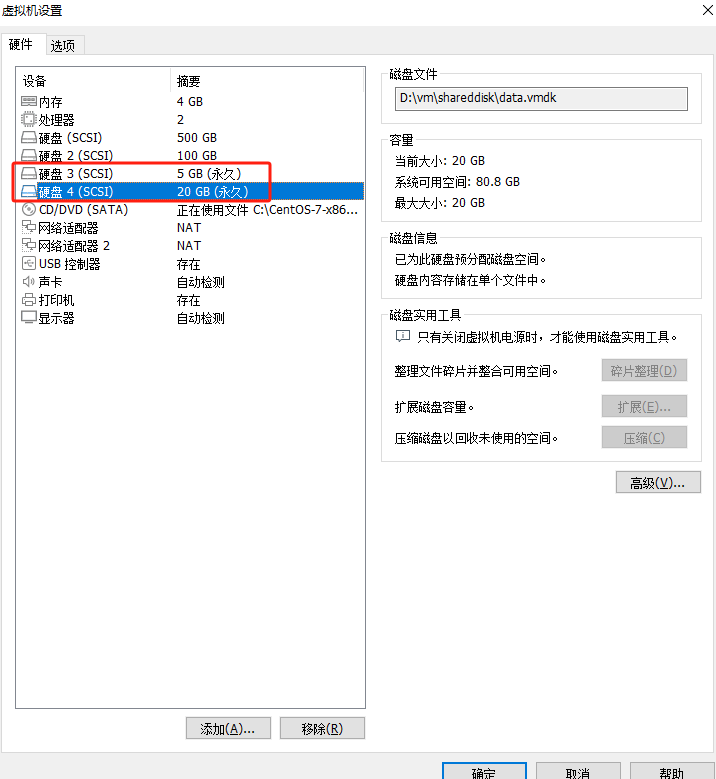

添加一個(gè)大小5G的磁盤作為asm-ocr

同樣的方法,再添加一個(gè)大小20G的磁盤作為asm-data

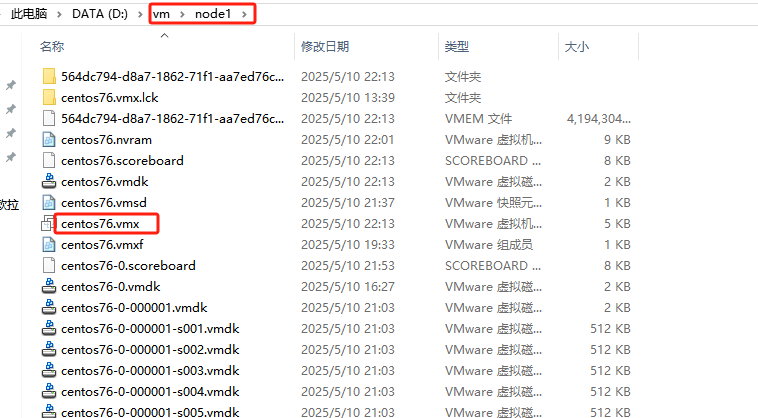

為了防止共享盤獨(dú)占,需要找到node1節(jié)點(diǎn)的vmx文件,如下圖:

編輯node1節(jié)點(diǎn)的vmx文件,在最后加入:

scsi1.sharedBus= "virtual"

disk.locking= "false"

diskLib.dataCacheMaxSize= "0"

diskLib.dataCacheMaxReadAheadSize= "0"

diskLib.DataCacheMinReadAheadSize= "0"

diskLib.dataCachePageSize= "4096"

diskLib.maxUnsyncedWrites= "0"

在node2節(jié)點(diǎn)操作:

同樣的操作方式,在node2節(jié)點(diǎn)加入asm-data盤!

同樣,編輯node2節(jié)點(diǎn)的vmx文件,在最后加入:

scsi1.sharedBus= "virtual"

disk.locking= "false"

diskLib.dataCacheMaxSize= "0"

diskLib.dataCacheMaxReadAheadSize= "0"

diskLib.DataCacheMinReadAheadSize= "0"

diskLib.dataCachePageSize= "4096"

diskLib.maxUnsyncedWrites= "0"

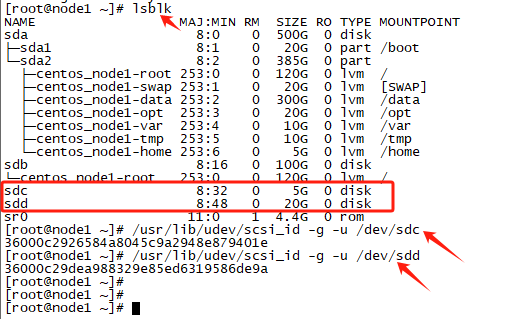

多路徑配置(node1 & node2)

安裝 multipath 綁定多路徑

#安裝并配置multipath

yum install -y device-mapper*

mpathconf --enable --with_multipathd y

#列出所有可用塊設(shè)備的信息

lsblk

#查看共享盤的scsi_id

/usr/lib/udev/scsi_id -g -u /dev/sdc

/usr/lib/udev/scsi_id -g -u /dev/sdd

配置 multipath 文件:

cat >/etc/multipath.conf<<EOF

defaults {

user_friendly_names yes

}

blacklist {

devnode "^sd[a-b]"

}

multipaths {

multipath {

wwid "36000c2926584a8045c9a2948e879401e"

alias asm_ocr

}

multipath {

wwid "36000c29dea988329e85ed6319586de9a"

alias asm_data

}

}

EOF

注意:wwid的值為上面獲取的scsi_id,alias可自定義,這里配置1塊OCR盤,1塊DATA盤!

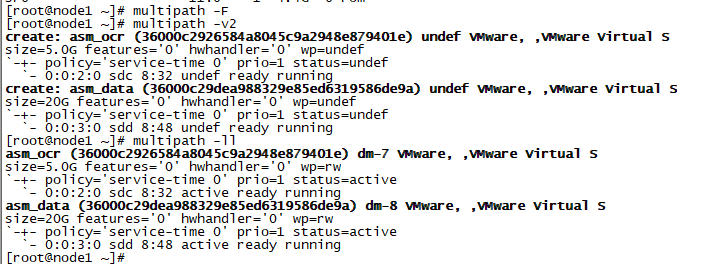

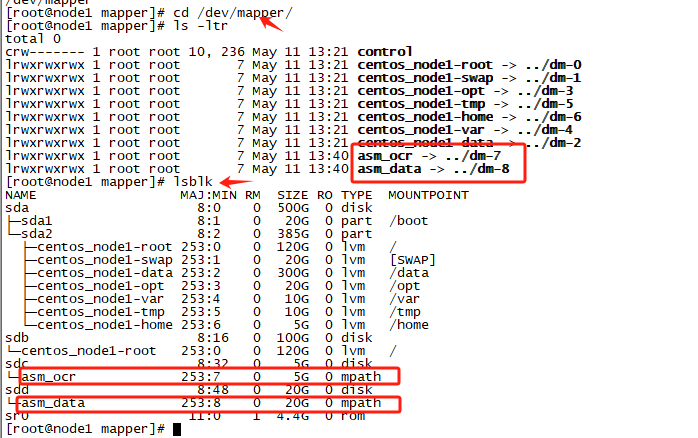

激活multipath多路徑

#刪除現(xiàn)有路徑

multipath -F

#格式化路徑

multipath -v2

#查看多路徑

multipath -ll

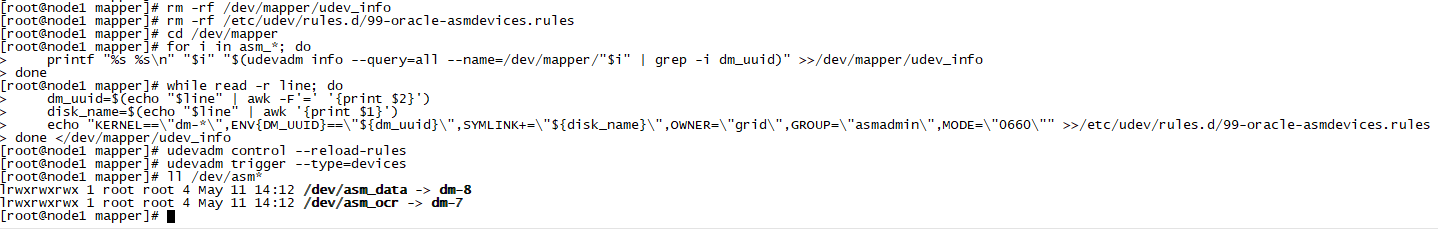

配置UDEV綁盤

rm -rf /dev/mapper/udev_info

rm -rf /etc/udev/rules.d/99-oracle-asmdevices.rules

cd /dev/mapper

for i in asm_*; do

printf "%s %s\n" "$i" "$(udevadm info --query=all --name=/dev/mapper/"$i" | grep -i dm_uuid)" >>/dev/mapper/udev_info

done

while read -r line; do

dm_uuid=$(echo "$line" | awk -F'=' '{print $2}')

disk_name=$(echo "$line" | awk '{print $1}')

echo "KERNEL==\"dm-*\",ENV{DM_UUID}==\"${dm_uuid}\",SYMLINK+=\"${disk_name}\",OWNER=\"grid\",GROUP=\"asmadmin\",MODE=\"0660\"" >>/etc/udev/rules.d/99-oracle-asmdevices.rules

done </dev/mapper/udev_info

##重載udev

udevadm control --reload-rules

udevadm trigger --type=devices

ll /dev/asm*

注意: 這里由于沒有創(chuàng)建 grid 用戶,因此權(quán)限和組是 root,等創(chuàng)建 grid 用戶后,再次重載 udev 即可!

確認(rèn)配置完成后,分別在兩個(gè)節(jié)點(diǎn)輸入命令 ls /dev/asm* 查看是否已經(jīng)成功綁定!

node2節(jié)點(diǎn)操作方式同node1節(jié)點(diǎn)。

網(wǎng)絡(luò)配置(node1 & node2)

啟動(dòng)node1節(jié)點(diǎn),登錄node1節(jié)點(diǎn):

#查看網(wǎng)卡編號(hào)

[root@node1 ~]# ifconfig

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.*.*.60 netmask 255.255.255.0 broadcast 192.*.*.255

inet6 fe80::278c:4ef0:51a0:76ee prefixlen 64 scopeid 0x20<link>

ether 00:50:56:21:a1:ea txqueuelen 1000 (Ethernet)

RX packets 479 bytes 44375 (43.3 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 282 bytes 41455 (40.4 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

ens36: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.31.237 netmask 255.255.255.0 broadcast 192.168.31.255

inet6 fe80::c7aa:dbf3:238c:425a prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:6c:e4:8a txqueuelen 1000 (Ethernet)

RX packets 2403 bytes 150121 (146.6 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 76 bytes 10497 (10.2 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 108 bytes 9180 (8.9 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 108 bytes 9180 (8.9 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

virbr0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 192.168.122.1 netmask 255.255.255.0 broadcast 192.168.122.255

ether 52:54:00:30:a4:a4 txqueuelen 1000 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@node1 ~]# nmcli connection show

NAME UUID TYPE DEVICE

Wired connection 1 3a14e728-e688-3735-a3a6-56686811ecc7 ethernet ens36

ens33 c96bc909-188e-ec64-3a96-6a90982b08ad ethernet ens33

virbr0 9ca1ac9f-e875-4c43-bd54-cd2dd817b13b bridge virbr0

可以看到,ens36網(wǎng)卡并沒有激活,并且有多余的'Wired connection 1'連接。

#刪除'Wired connection 1'

[root@node1 ~]# nmcli connection delete 'Wired connection 1'

Connection 'Wired connection 1' (3a14e728-e688-3735-a3a6-56686811ecc7) successfully deleted.

[root@node1 ~]# nmcli connection show

NAME UUID TYPE DEVICE

ens33 c96bc909-188e-ec64-3a96-6a90982b08ad ethernet ens33

virbr0 9ca1ac9f-e875-4c43-bd54-cd2dd817b13b bridge virbr0

#添加ens36網(wǎng)卡

[root@node1 ~]# nmcli connection add con-name ens36 type ethernet ifname ens36

Connection 'ens36' (0a0dc267-5cbe-4dd2-a1ef-c1aa6cf3e52b) successfully added.

[root@node1 ~]# nmcli connection show

NAME UUID TYPE DEVICE

ens33 c96bc909-188e-ec64-3a96-6a90982b08ad ethernet ens33

ens36 0a0dc267-5cbe-4dd2-a1ef-c1aa6cf3e52b ethernet ens36

virbr0 9ca1ac9f-e875-4c43-bd54-cd2dd817b13b bridge virbr0

可以看到,ens36網(wǎng)卡已經(jīng)成功激活。

啟動(dòng)node2節(jié)點(diǎn),node2節(jié)點(diǎn)操作方式同node1節(jié)點(diǎn)。

node1節(jié)點(diǎn)使用nmcli配置Public IP、Private IP:

#配置Public IP

nmcli connection modify ens33 ipv4.addresses 192.*.*.60/24 ipv4.gateway 192.*.*.2 ipv4.method manual autoconnect yes

#配置Private IP

nmcli connection modify ens36 ipv4.addresses 10.*.*.11/24 ipv4.method manual autoconnect yes

#生效

nmcli connection up ens33

nmcli connection up ens36

nmcli connection show

node2節(jié)點(diǎn)使用nmcli配置Public IP、Private IP:

#配置Public IP

nmcli connection modify ens33 ipv4.addresses 192.*.*.62/24 ipv4.gateway 192.*.*.2 ipv4.method manual autoconnect yes

#配置Private IP

nmcli connection modify ens36 ipv4.addresses 10.*.*.12/24 ipv4.method manual autoconnect yes

#生效

nmcli connection up ens33

nmcli connection up ens36

nmcli connection show

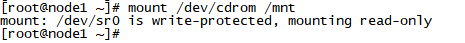

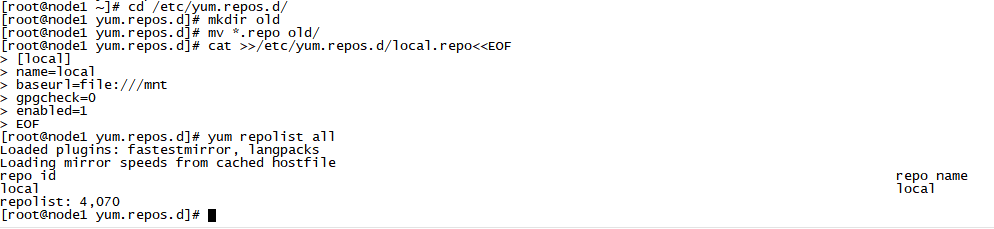

掛載iso鏡像(node1 & node2)

mount /dev/cdrom /mnt

配置yum源

cd /etc/yum.repos.d/

mkdir old

mv *.repo old/

cat >>/etc/yum.repos.d/local.repo<<EOF

[local]

name=local

baseurl=file:///mnt

gpgcheck=0

enabled=1

EOF

yum repolist all

安裝依賴包(node1 & node2)

yum install -y bc \ binutils \ compat-libcap1 \ compat-libstdc++-33 \ elfutils-libelf \ elfutils-libelf-devel \ fontconfig-devel \ glibc \ glibc-devel \ ksh \ libaio \ libaio-devel \ libXrender \ libXrender-devel \ libX11 \ libXau \ libXi \ libXtst \ libgcc \ libstdc++ \ libstdc++-devel \ libxcb \ make \ policycoreutils \ policycoreutils-python \ smartmontools \ sysstat \ unzip \ psmisc \ xorg-x11-xauth \ net-tools \ nfs-utils \ gcc \ gcc-c++

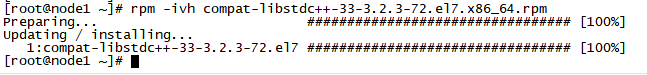

系統(tǒng)安裝盤里面沒有compat-libstdc++-33包,需要提前下載并安裝:

rpm -ivh compat-libstdc++-33-3.2.3-72.el7.x86_64.rpm

檢查依賴包安裝情況

rpm -q bc binutils compat-libcap1 compat-libstdc++-33 elfutils-libelf elfutils-libelf-devel fontconfig-devel glibc glibc-devel ksh libaio libaio-devel libXrender libXrender-devel libX11 libXau libXi libXtst libgcc libstdc++ libstdc++-devel libxcb make policycoreutils policycoreutils-python smartmontools sysstat unzip psmisc xorg-x11-xauth net-tools nfs-utils gcc gcc-c++ | grep "not installed"

/etc/hosts配置(node1 & node2)

cat >>/etc/hosts<<EOF

#Public IP

192.*.*.60 node1

192.*.*.62 node2

#Private IP

10.*.*.11 node1-priv

10.*.*.12 node2-priv

#Vip IP

192.*.*.70 node1-vip

192.*.*.72 node2-vip

#Scan IP

192.*.*.75 orclscan

EOF

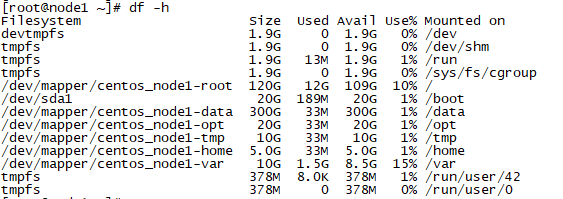

檢查目錄大小

df -h

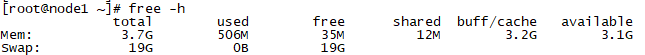

檢查內(nèi)存和SWAP大小

free -h

關(guān)閉透明大頁和numa(node1 & node2)

1、查看透明大頁是否關(guān)閉(always 表示啟用,never 表示禁用):

cat /sys/kernel/mm/transparent_hugepage/enabled

2、修改 grub 配置文件添加"transparent_hugepage=never numa=off":

vi /etc/default/grub

在GRUB_CMDLINE_LINUX…行最后添加transparent_hugepage=never

GRUB_TIMEOUT=5

GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"

GRUB_DEFAULT=saved

GRUB_DISABLE_SUBMENU=true

GRUB_TERMINAL_OUTPUT="console"

GRUB_CMDLINE_LINUX="rd.lvm.lv=rhel/root rd.lvm.lv=rhel/swap rhgb quiet transparent_hugepage=never numa=off"

GRUB_DISABLE_RECOVERY="true"

3、重新生成grub文件

當(dāng)節(jié)點(diǎn)使用UEFI安裝和啟動(dòng)時(shí),使用如下命令:

# grub2-mkconfig -o /boot/efi/EFI/redhat/grub.cfg

當(dāng)節(jié)點(diǎn)使用傳統(tǒng)BIOS安裝和啟動(dòng)時(shí),使用如下命令:

# grub2-mkconfig -o /boot/grub2/grub.cfg

4、重啟節(jié)點(diǎn)生效,重啟后檢查透明大頁和numa是否生效,使用如下命令:

cat /sys/kernel/mm/transparent_hugepage/enabled

lscpu | grep -i numa

sysctl -a | grep numa

kernel.numa_balancing = 0

關(guān)閉防火墻(node1 & node2)

systemctl stop firewalld.service

systemctl disable firewalld.service

systemctl status firewalld.service

關(guān)閉selinux

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

setenforce 0

getenforce

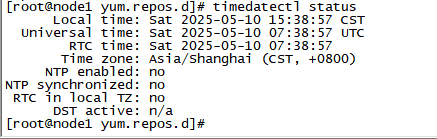

檢查 OS 時(shí)區(qū)(node1 & node2)

timedatectl status

# 如果時(shí)區(qū)不正確,使用下面命令修改:

# timedatectl set-timezone "Asia/Shanghai"

禁用chronyd(node1 & node2)

systemctl stop chronyd.service

systemctl disable chronyd.service

配置NTP時(shí)間同步

只在node2節(jié)點(diǎn)執(zhí)行,和node1節(jié)點(diǎn)同步,生產(chǎn)系統(tǒng)中需要和時(shí)間服務(wù)器同步:

yum install -y ntpdate

##192.*.*.60為時(shí)間服務(wù)器IP,每天12點(diǎn)同步系統(tǒng)時(shí)間

cat >>/var/spool/cron/root<<EOF

00 12 * * * /usr/sbin/ntpdate -u 192.*.*.60 && /usr/sbin/hwclock -w

EOF

#查看計(jì)劃任務(wù)

crontab -l

##手動(dòng)執(zhí)行

/usr/sbin/ntpdate -u 192.*.*.60 && /usr/sbin/hwclock -w

#禁用avahi-daemon

avahi-daemon 會(huì)導(dǎo)致 RAC 的節(jié)點(diǎn)重啟。

systemctl stop avahi-daemon.socket

systemctl stop avahi-daemon.service

systemctl disable avahi-daemon.socket

systemctl disable avahi-daemon.service

系統(tǒng)參數(shù)配置(node1 & node2)

memTotal=$(grep MemTotal /proc/meminfo | awk '{print $2}')

totalMemory=$((memTotal / 2048))

shmall=$((memTotal / 4))

if [ $shmall -lt 2097152 ]; then

shmall=2097152

fi

shmmax=$((memTotal * 1024 - 1))

if [ "$shmmax" -lt 4294967295 ]; then

shmmax=4294967295

fi

cat >>/etc/sysctl.conf<<EOF

fs.aio-max-nr = 1048576

fs.file-max = 6815744

kernel.shmall = $shmall

kernel.shmmax = $shmmax

kernel.shmmni = 4096

kernel.sem = 250 32000 100 128

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 262144

net.core.rmem_max = 4194304

net.core.wmem_default = 262144

net.core.wmem_max = 1048576

net.ipv4.conf.ens33.rp_filter = 1

net.ipv4.conf.ens36.rp_filter = 2

EOF

注意:ens33和ens36要根據(jù)實(shí)際環(huán)境替換。

參數(shù)生效:

sysctl -p

系統(tǒng)資源限制配置(node1 & node2)

配置limits.conf:

cat >>/etc/security/limits.conf<<EOF

oracle soft nofile 1024

oracle hard nofile 65536

oracle soft stack 10240

oracle hard stack 32768

oracle soft nproc 2047

oracle hard nproc 16384

oracle hard memlock 134217728

oracle soft memlock 134217728

grid soft nofile 1024

grid hard nofile 65536

grid soft stack 10240

grid hard stack 32768

grid soft nproc 2047

grid hard nproc 16384

EOF

配置pam.d/login:

cat >>/etc/pam.d/login <<EOF

session required pam_limits.so

session required /lib64/security/pam_limits.so

EOF

用戶及組、目錄創(chuàng)建(node1 & node2)

創(chuàng)建安裝 Oracle 數(shù)據(jù)庫所需的用戶、組以及安裝目錄。

創(chuàng)建組:

/usr/sbin/groupadd -g 54321 oinstall /usr/sbin/groupadd -g 54322 dba /usr/sbin/groupadd -g 54323 oper /usr/sbin/groupadd -g 54324 backupdba /usr/sbin/groupadd -g 54325 dgdba /usr/sbin/groupadd -g 54326 kmdba /usr/sbin/groupadd -g 54327 asmdba /usr/sbin/groupadd -g 54328 asmoper /usr/sbin/groupadd -g 54329 asmadmin /usr/sbin/groupadd -g 54330 racdba

創(chuàng)建用戶:

/usr/sbin/useradd -u 54322 -g oinstall -G asmadmin,asmdba,asmoper,dba,racdba,oper grid

/usr/sbin/useradd -u 54321 -g oinstall -G asmdba,dba,backupdba,dgdba,kmdba,racdba,oper oracle

##修改用戶密碼為oracle

echo "oracle" |passwd oracle --stdin

echo "oracle" |passwd grid --stdin

##查看用戶組

id grid

id oracle

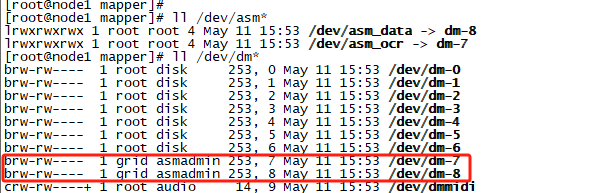

##重載udev

udevadm control --reload-rules

udevadm trigger --type=devices

ll /dev/asm*

ll /dev/dm*

可以看到,綁盤權(quán)限已經(jīng)變成 grid 了!

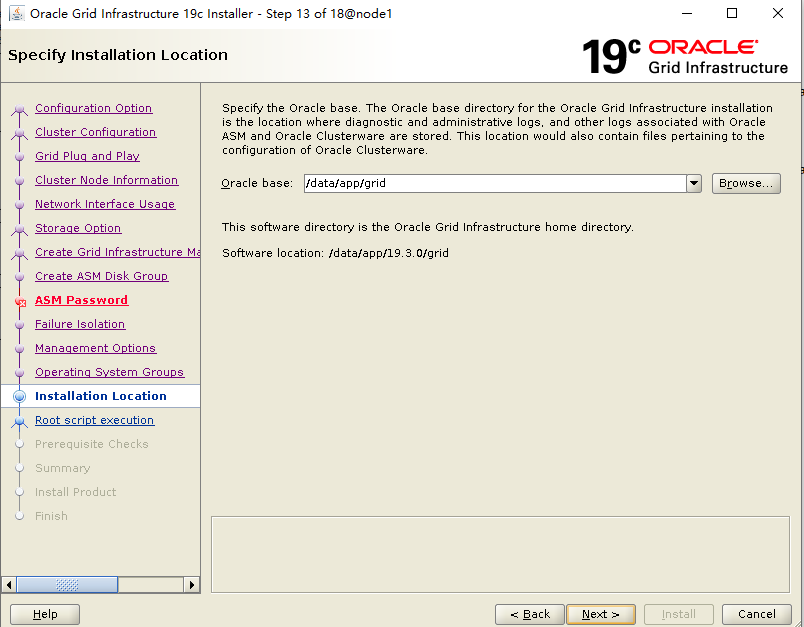

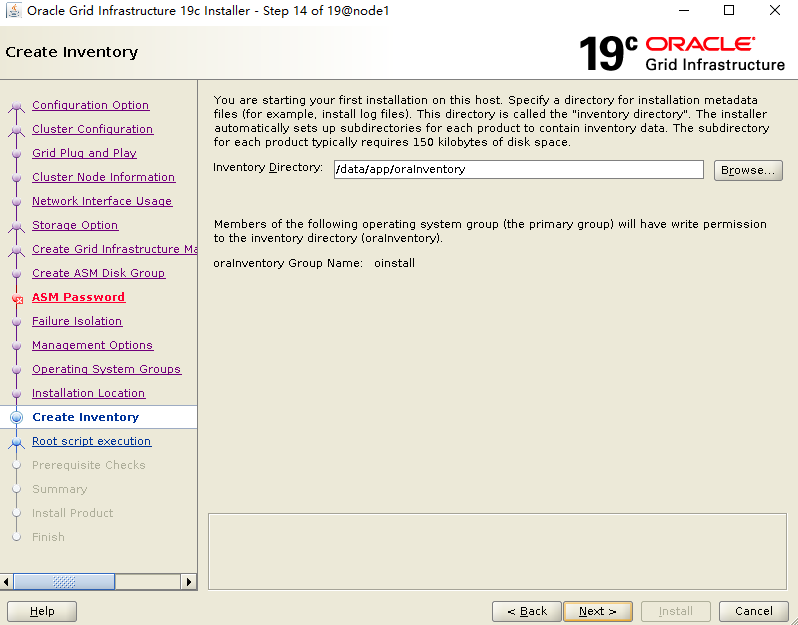

創(chuàng)建軟件目錄:

mkdir -p /data/app/19.3.0/grid mkdir -p /data/app/grid mkdir -p /data/app/oracle/product/19.3.0/db mkdir -p /data/app/oraInventory mkdir -p /backup mkdir -p /home/oracle/scripts chown -R oracle:oinstall /backup chown -R oracle:oinstall /home/oracle/scripts chown -R grid:oinstall /data chown -R grid:oinstall /data/app/grid chown -R grid:oinstall /data/app/19.3.0/grid chown -R grid:oinstall /data/app/oraInventory chown -R oracle:oinstall /data/app/oracle chmod -R 775 /data

環(huán)境變量配置(node1 & node2)

grid用戶環(huán)境變量:

cat >>/home/grid/.bash_profile<<EOF

umask 022

export TMP=/tmp

export TMPDIR=\$TMP

export NLS_LANG=AMERICAN_AMERICA.AL32UTF8

export ORACLE_BASE=/data/app/grid

export ORACLE_HOME=/data/app/19.3.0/grid

export ORACLE_TERM=xterm

export TNS_ADMIN=\$ORACLE_HOME/network/admin

export LD_LIBRARY_PATH=\$ORACLE_HOME/lib:/lib:/usr/lib

export ORACLE_SID=+ASM1

export PATH=/usr/sbin:\$PATH

export PATH=\$ORACLE_HOME/bin:\$ORACLE_HOME/OPatch:\$PATH

alias sas='sqlplus / as sysasm'

export PS1="[\`whoami\`@\`hostname\`:"'\$PWD]\$ '

EOF

注意: 每個(gè)節(jié)點(diǎn)的 ORACLE_SID 不一樣(+ASM1/+ASM2),需要自行修改!

oracle用戶環(huán)境變量:

cat >>/home/oracle/.bash_profile<<EOF

umask 022

export TMP=/tmp

export TMPDIR=\$TMP

export NLS_LANG=AMERICAN_AMERICA.AL32UTF8

export ORACLE_BASE=/data/app/oracle

export ORACLE_HOME=\$ORACLE_BASE/product/19.3.0/db

export ORACLE_HOSTNAME=node1

export ORACLE_TERM=xterm

export TNS_ADMIN=\$ORACLE_HOME/network/admin

export LD_LIBRARY_PATH=\$ORACLE_HOME/lib:/lib:/usr/lib

export ORACLE_SID=orcl1

export PATH=/usr/sbin:\$PATH

export PATH=\$ORACLE_HOME/bin:\$ORACLE_HOME/OPatch:\$PATH

alias sas='sqlplus / as sysdba'

export PS1="[\`whoami\`@\`hostname\`:"'\$PWD]\$ '

EOF

注意: 每個(gè)節(jié)點(diǎn)的 ORACLE_HOSTNAME(node1/node2) 和 ORACLE_SID(orcl1/orcl2)不一樣,需要自行修改!

安裝介質(zhì)上傳并解壓(node1)

安裝包上傳至node1節(jié)點(diǎn) /soft 目錄:

#創(chuàng)建安裝介質(zhì)存放目錄

mkdir /soft

#上傳安裝介質(zhì)到/soft目錄

LINUX.X64_193000_db_home.zip

LINUX.X64_193000_grid_home.zip

p37257886_190000_Linux-x86-64.zip

p6880880_190000_Linux-x86-64.zip

注意: 19C 的安裝包需要解壓到對(duì)應(yīng)的 ORACLE_HOME 目錄下!

靜默解壓grid安裝包:

chown -R grid:oinstall /soft

su - grid -c "unzip -q /soft/LINUX.X64_193000_grid_home.zip -d /data/app/19.3.0/grid/"

靜默解壓oracle安裝包:

chown -R oracle:oinstall /soft

su - oracle -c "unzip -q /soft/LINUX.X64_193000_db_home.zip -d /data/app/oracle/product/19.3.0/db/"

靜默解壓補(bǔ)丁安裝包:

cd /soft

##解壓RU補(bǔ)丁包和OPatch補(bǔ)丁包

chown -R grid:oinstall /soft

su - grid -c "unzip -q -o /soft/p6880880_190000_Linux-x86-64.zip -d /data/app/19.3.0/grid"

su - grid -c "unzip -q /soft/p37257886_190000_Linux-x86-64.zip -d /soft"

chown -R oracle:oinstall /soft

su - oracle -c "unzip -q -o /soft/p6880880_190000_Linux-x86-64.zip -d /data/app/oracle/product/19.3.0/db"

注意: 由于19C支持安裝grid軟件前打RU補(bǔ)丁,因此提前解壓OPatch和RU補(bǔ)丁,為安裝做準(zhǔn)備!

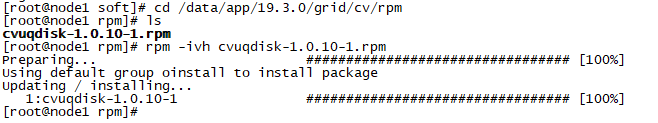

root用戶下,cvuqdisk安裝(node1 & node2):

cd /data/app/19.3.0/grid/cv/rpm

rpm -ivh cvuqdisk-1.0.10-1.rpm

#傳輸?shù)絥ode2,安裝

scp cvuqdisk-1.0.10-1.rpm node2:/soft

rpm -ivh /soft/cvuqdisk-1.0.10-1.rpm

配置grid用戶ssh互信(node1)

su - grid

$ORACLE_HOME/oui/prov/resources/scripts/sshUserSetup.sh -user grid -hosts "node1 node2" -advanced -noPromptPassphrase

配置oracle用戶ssh互信(node1)

su - grid

$ORACLE_HOME/oui/prov/resources/scripts/sshUserSetup.sh -user oracle -hosts "node1 node2" -advanced -noPromptPassphrase

所有配置都完成后,重啟虛擬機(jī)。此時(shí)可以保存虛擬機(jī)快照,以防安裝過程中出錯(cuò)!

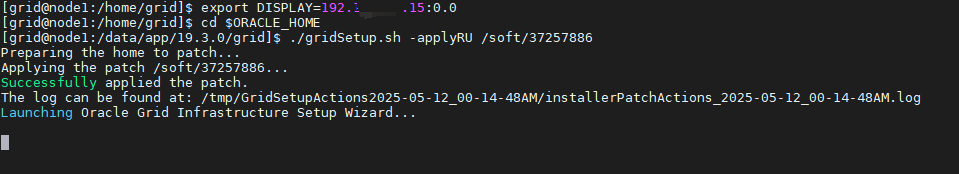

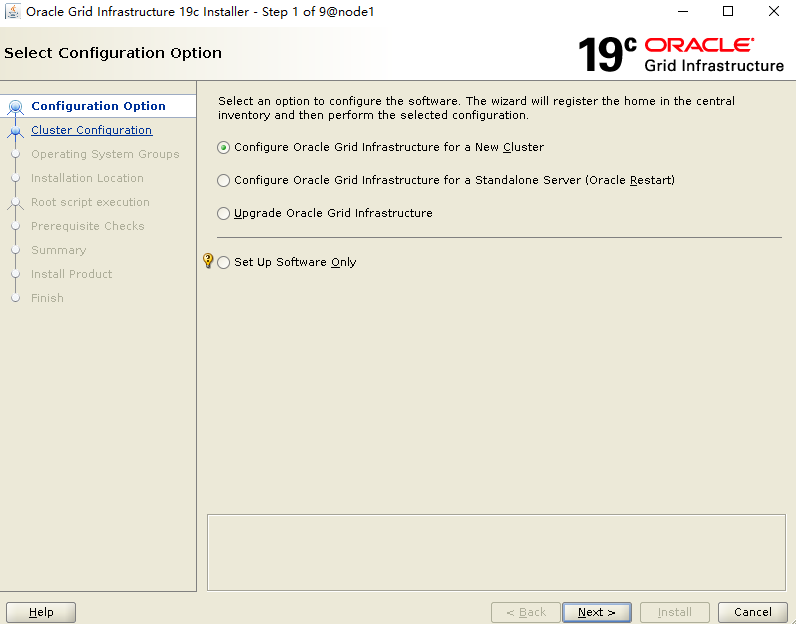

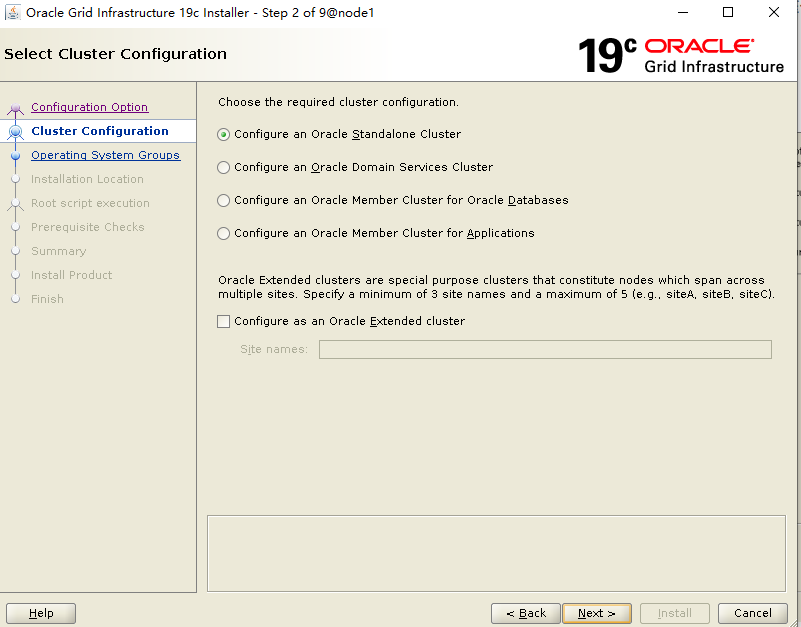

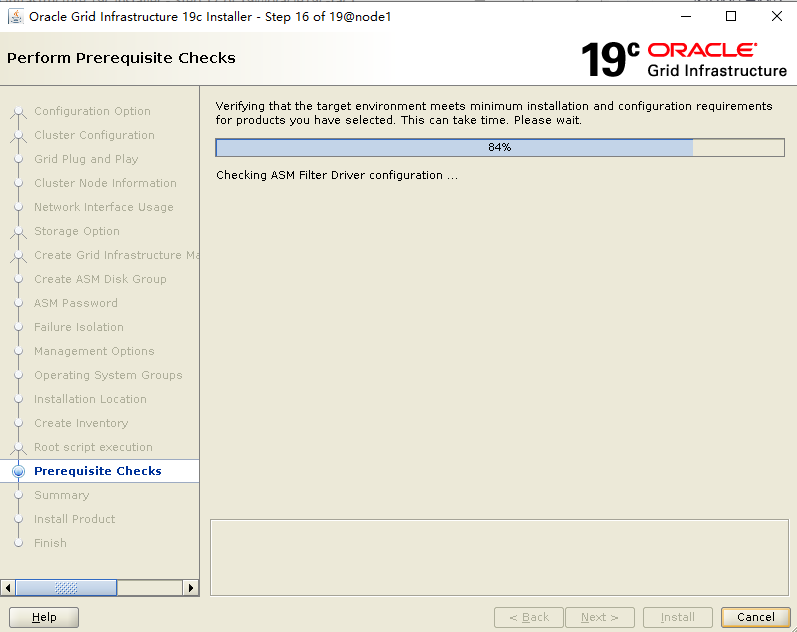

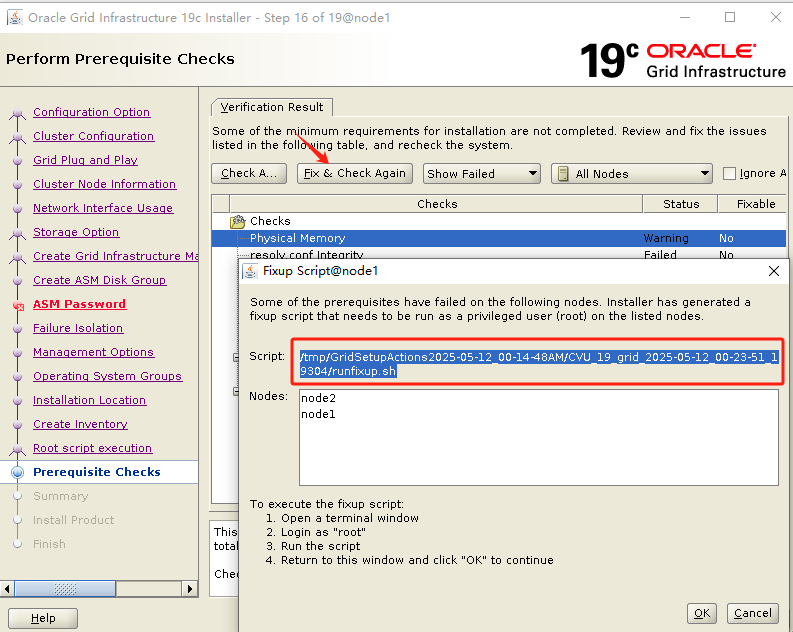

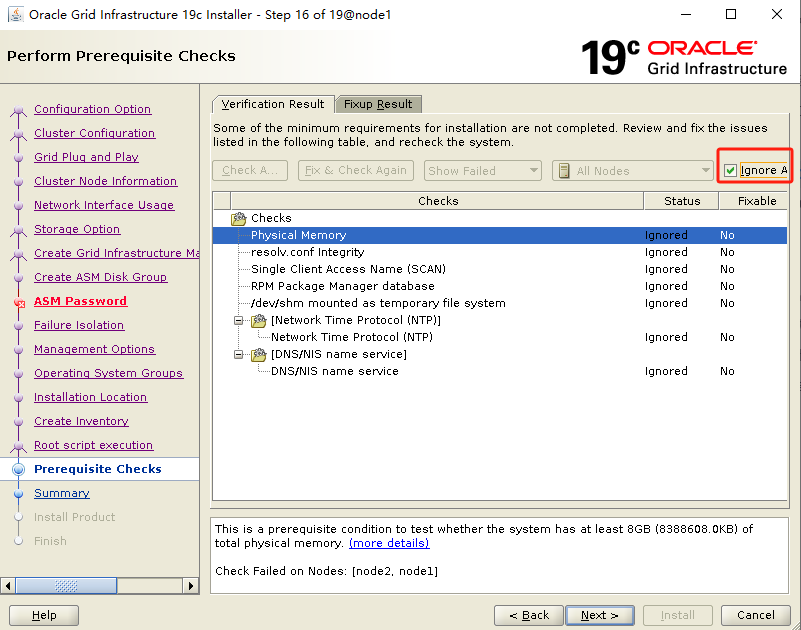

四、安裝grid軟件(node1)+ RU

su - grid

cd $ORACLE_HOME

export DISPLAY=192.*.*.15:0.0 #這個(gè)根據(jù)本地實(shí)際情況進(jìn)行配置

./gridSetup.sh -applyRU /soft/37257886

執(zhí)行安裝程序開始安裝,通過-applyRU參數(shù)指向RU補(bǔ)丁解壓位置,提前安裝grid補(bǔ)丁。

./gridSetup.sh -applyRU /soft/37257886

這里首先進(jìn)行的是補(bǔ)丁升級(jí),然后再啟動(dòng)安裝界面

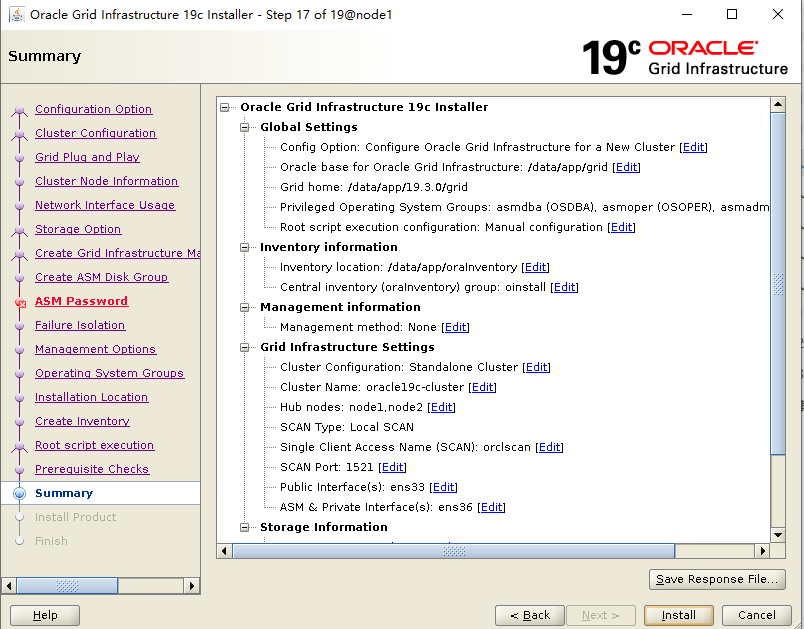

填寫集群名稱和scan名字,scan名字和/etc/hosts一致

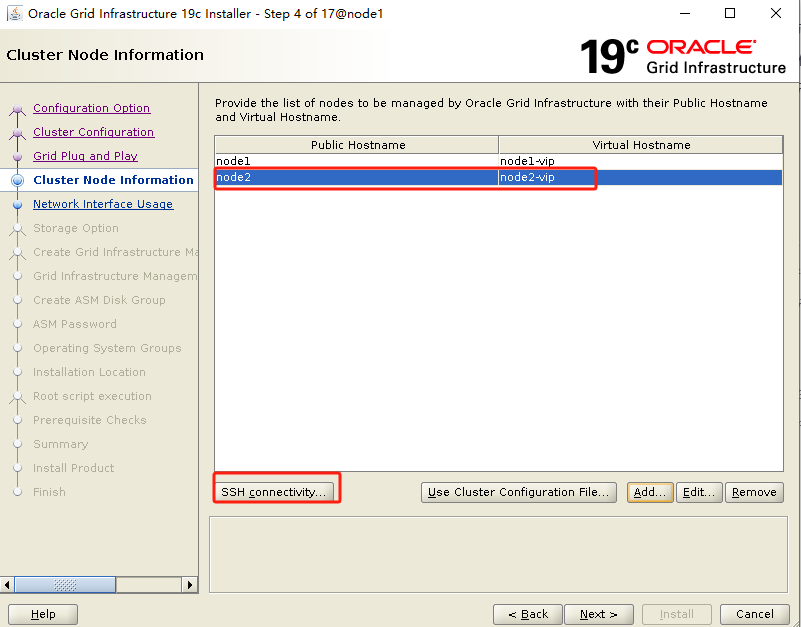

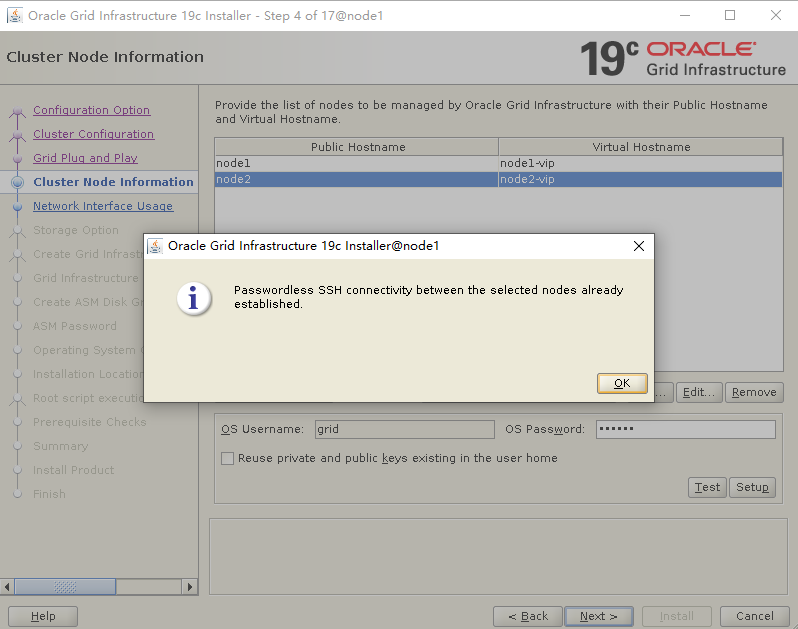

添加node2節(jié)點(diǎn)信息,進(jìn)行互信

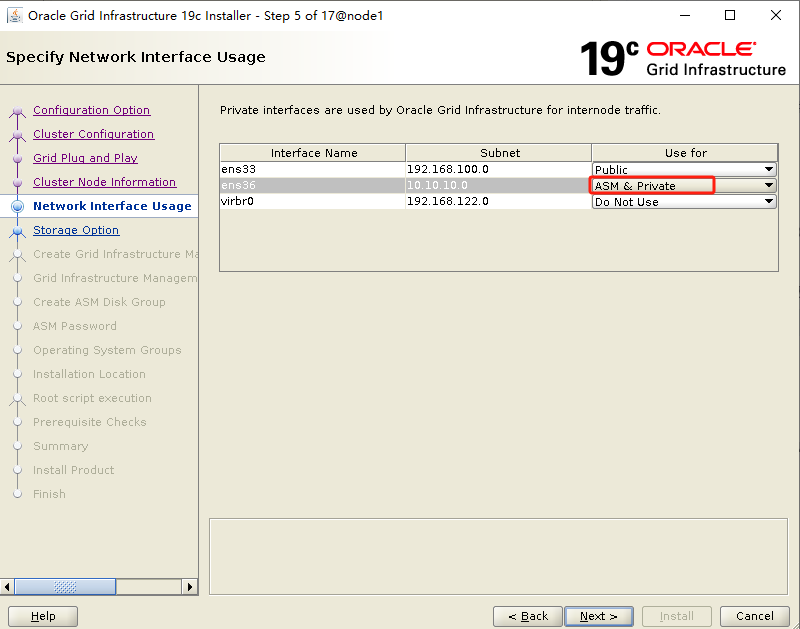

確保對(duì)應(yīng)網(wǎng)卡和IP網(wǎng)段對(duì)應(yīng)即可,19C心跳網(wǎng)段需要選ASM & Private,用于ASM實(shí)例的托管

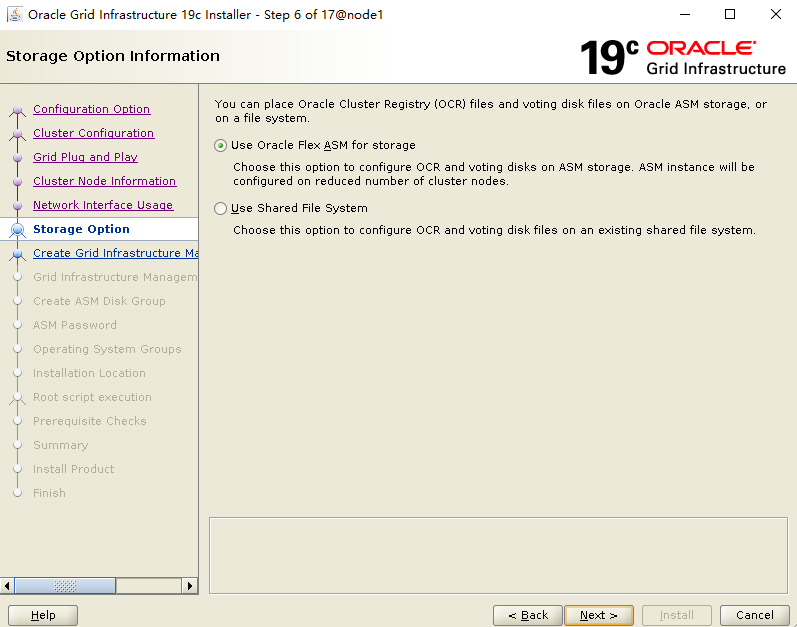

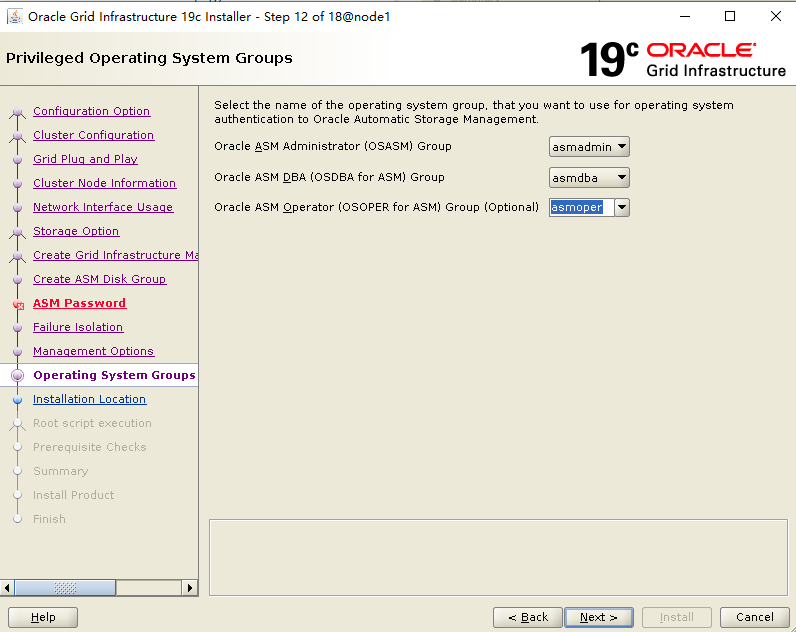

選擇ASM

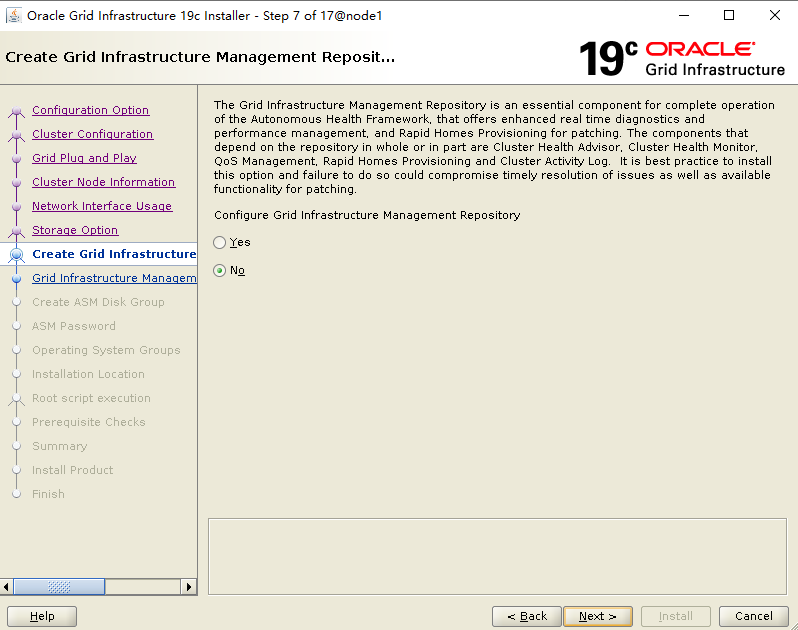

不安裝GIMR

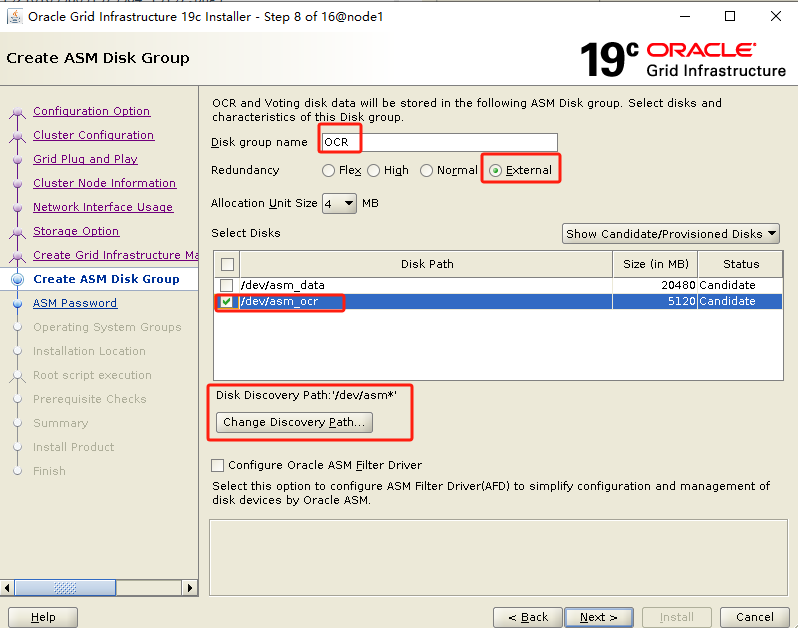

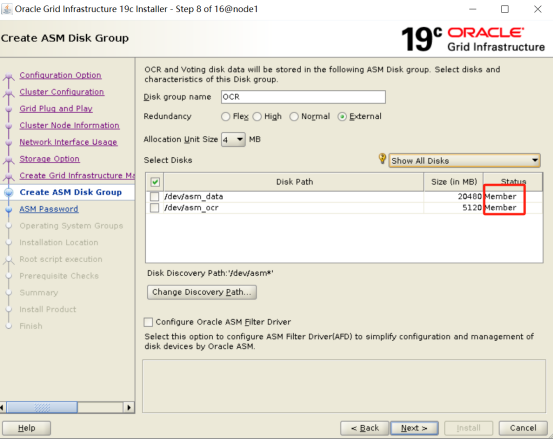

修改路徑,只有1塊OCR盤,選擇external

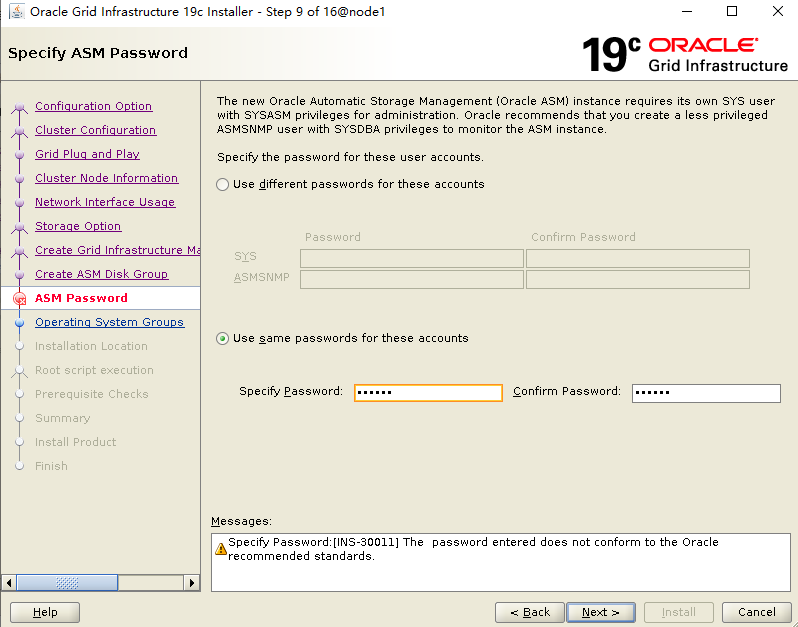

設(shè)置 sys/system 密碼

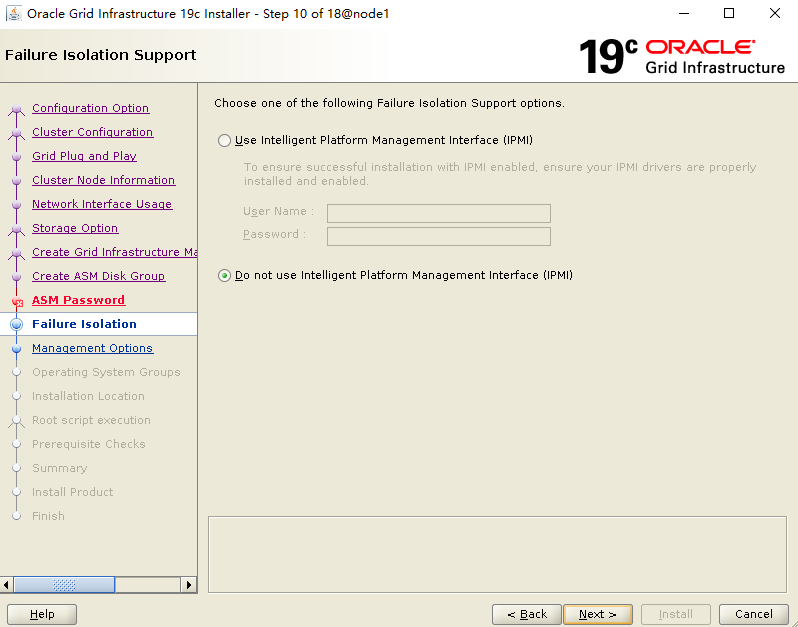

不使用IPMI

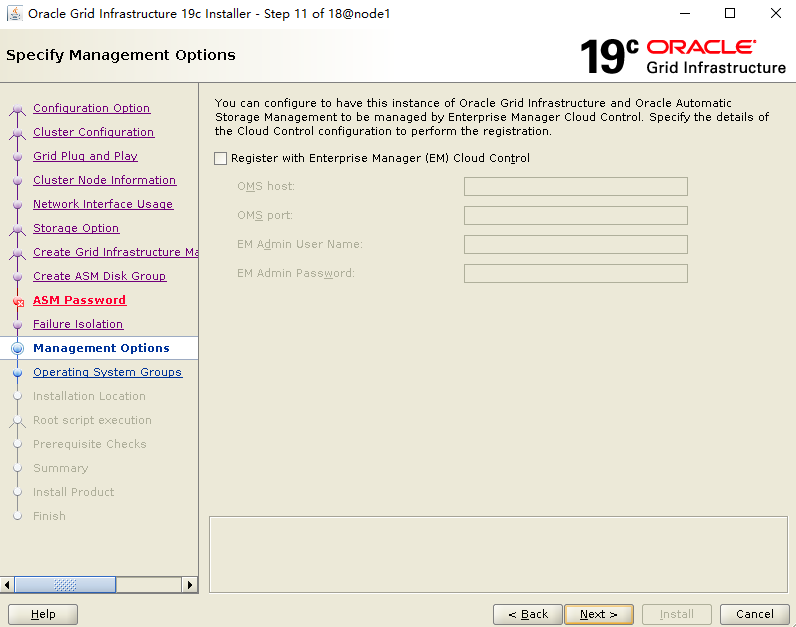

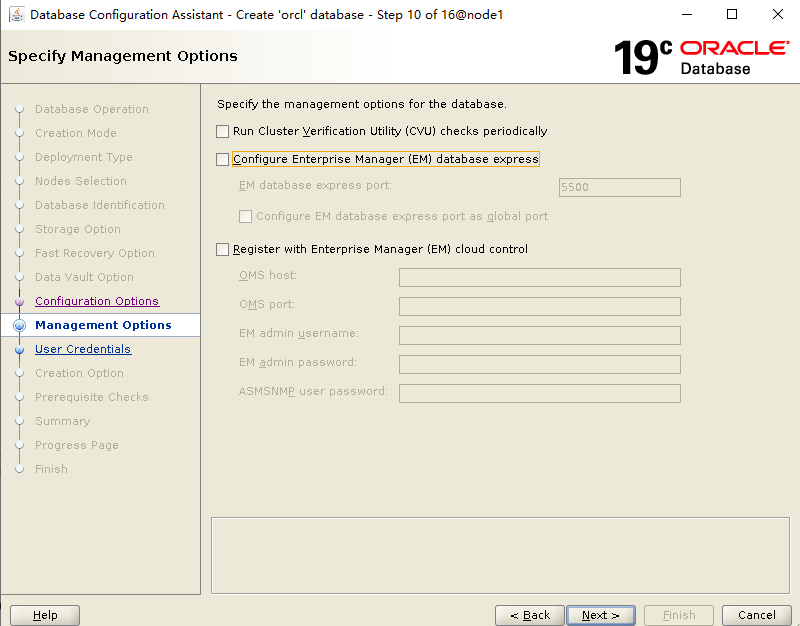

不注冊(cè)EM

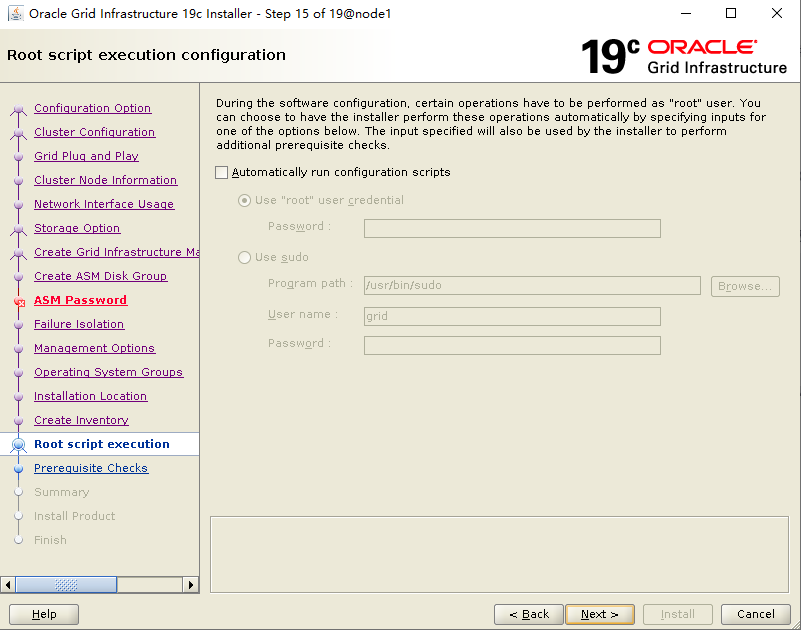

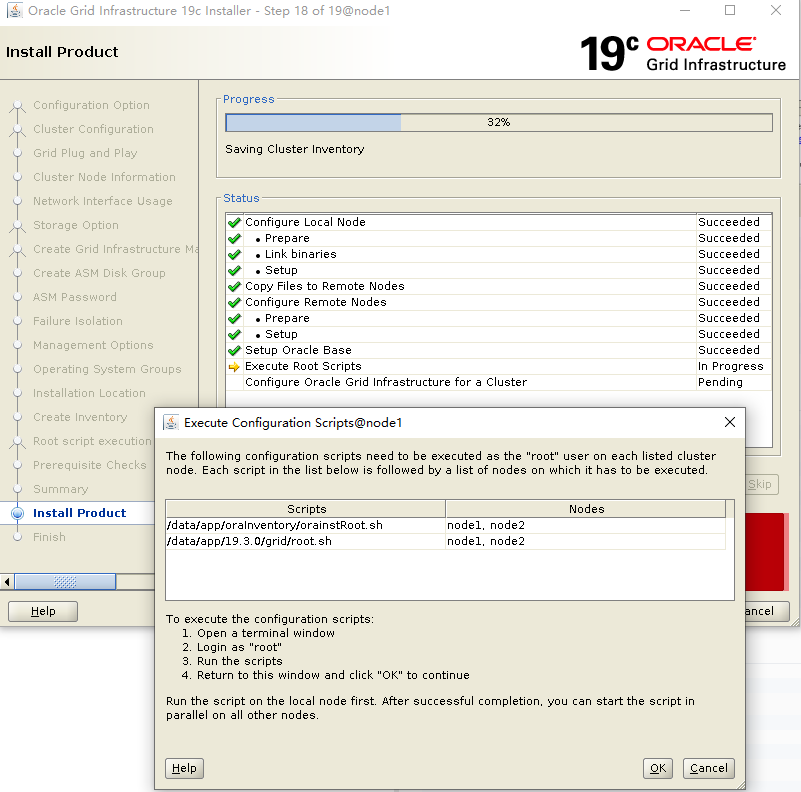

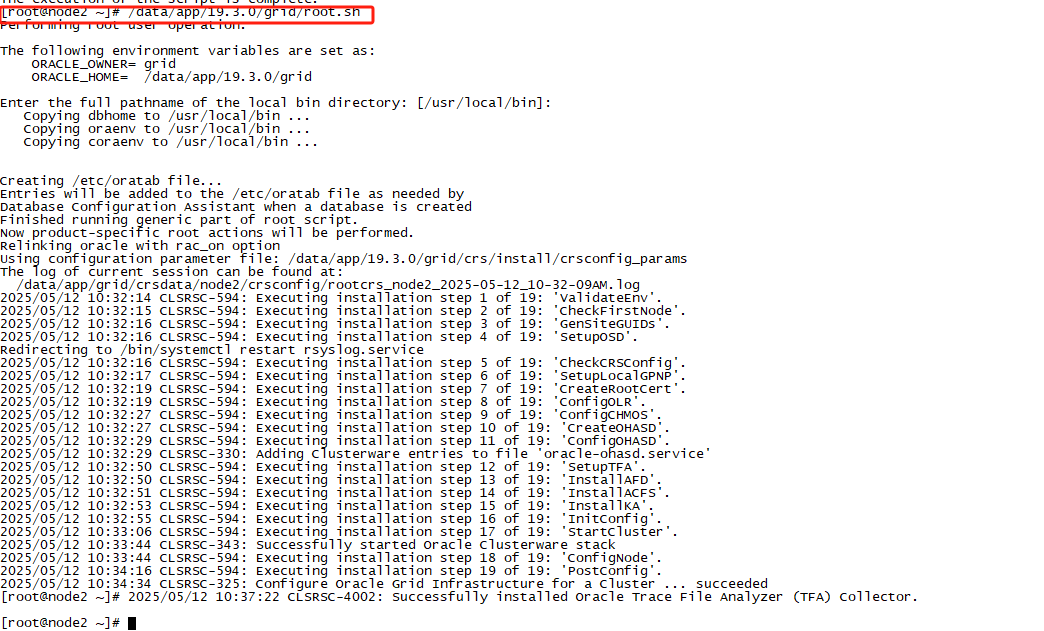

root用戶下,按順序執(zhí)行腳本:

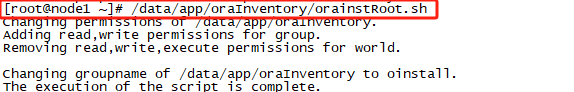

#node1節(jié)點(diǎn)執(zhí)行

/data/app/oraInventory/orainstRoot.sh

#node2節(jié)點(diǎn)執(zhí)行

/data/app/oraInventory/orainstRoot.sh

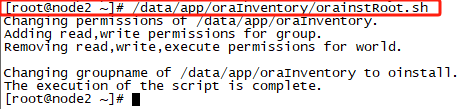

#node1節(jié)點(diǎn)執(zhí)行

/data/app/19.3.0/grid/root.sh

#node2節(jié)點(diǎn)執(zhí)行

/data/app/19.3.0/grid/root.sh

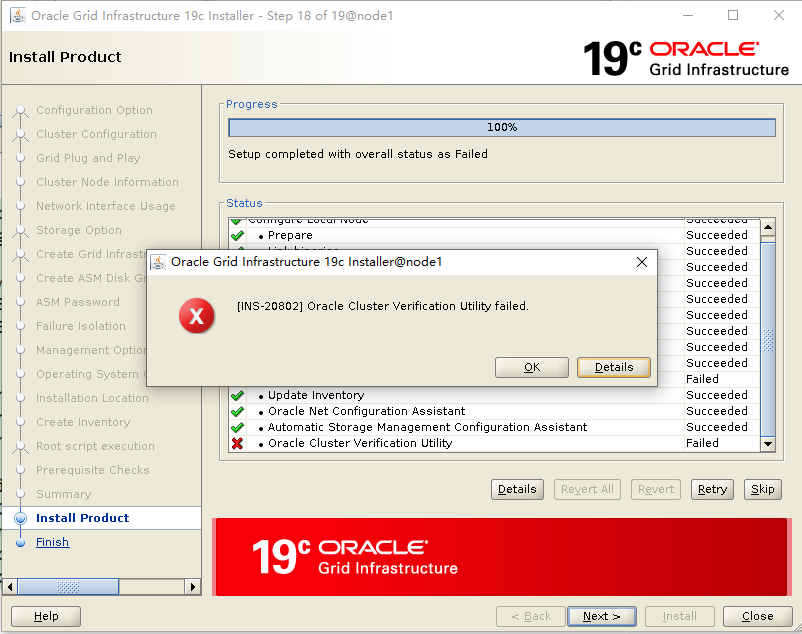

安裝繼續(xù):

這個(gè)報(bào)錯(cuò)可以忽略!

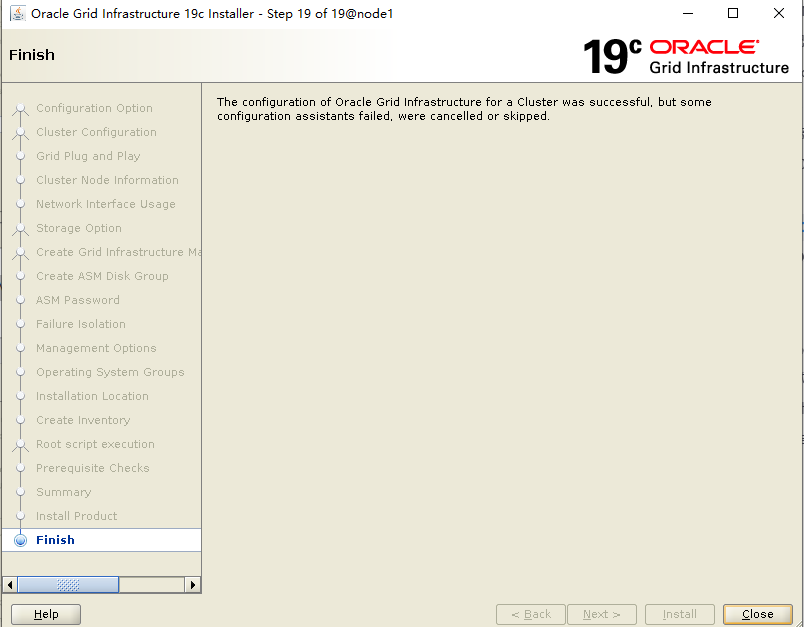

安裝完成:

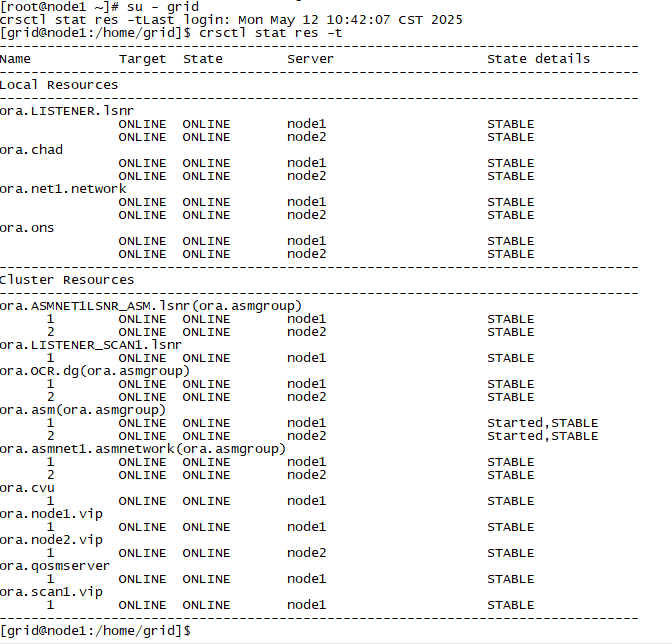

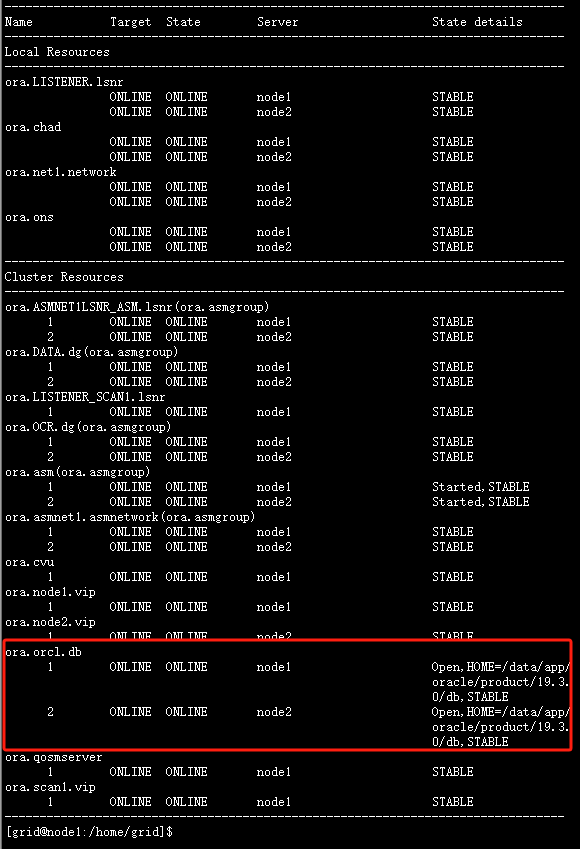

檢查集群狀態(tài):

su - grid

crsctl stat res -t

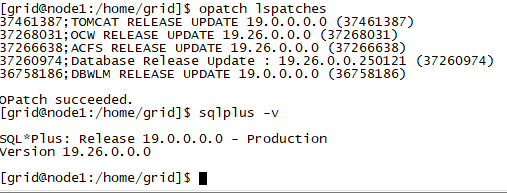

檢查 grid 補(bǔ)丁:

su - grid opatch lspatches sqlplus -v

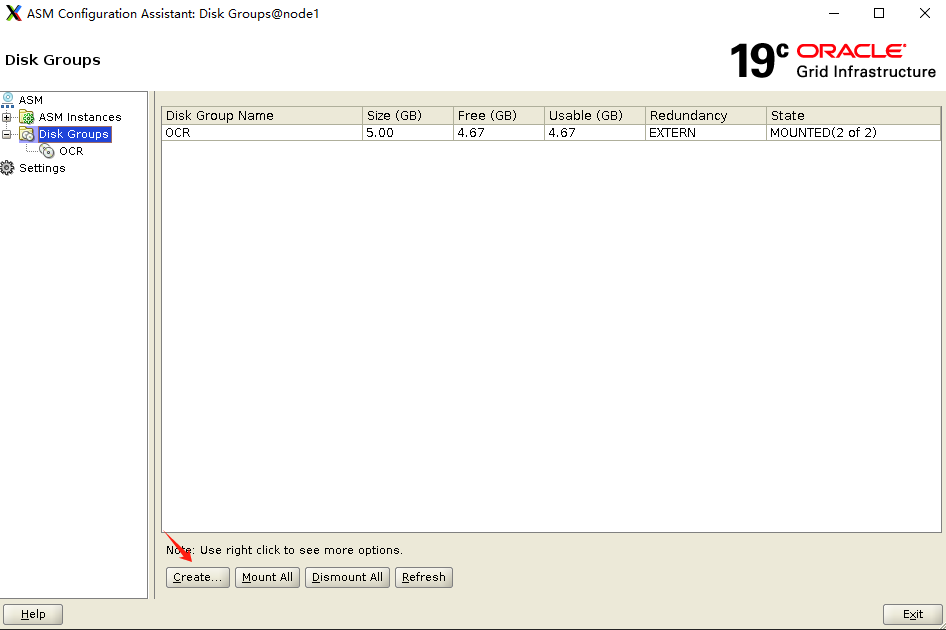

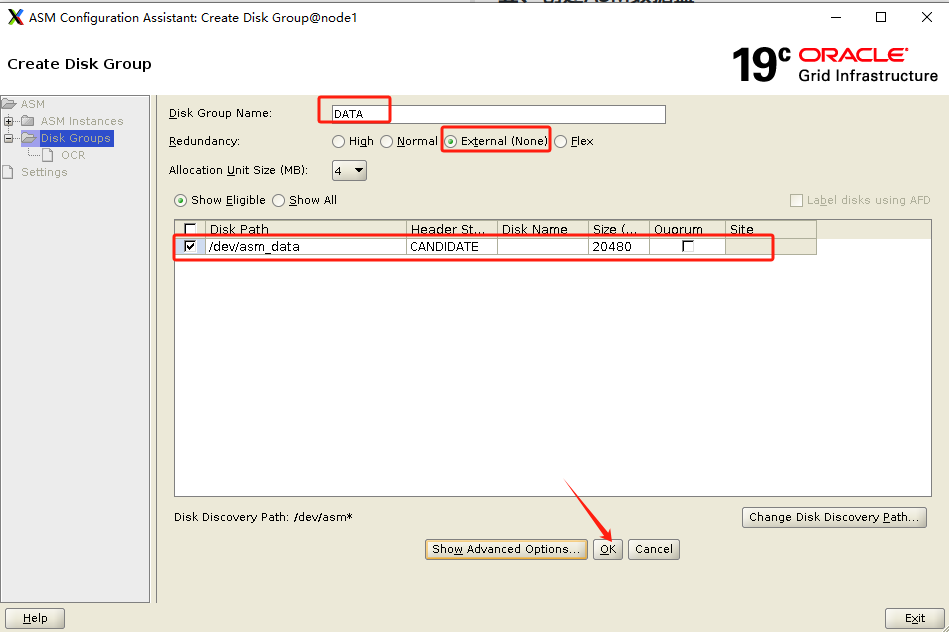

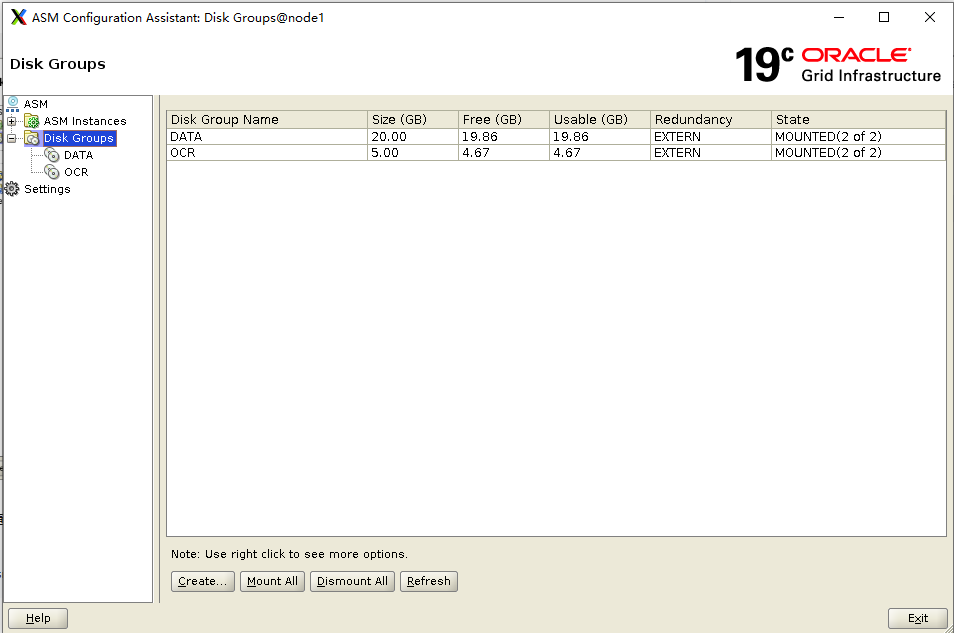

五、創(chuàng)建ASM數(shù)據(jù)盤

asmca

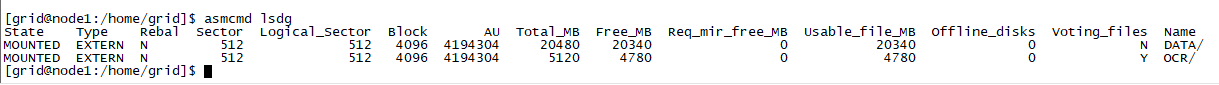

檢查 asm 磁盤:

asmcmd lsdg

建議重啟兩臺(tái)主機(jī),檢查重啟后 Grid 集群是否正常運(yùn)行!

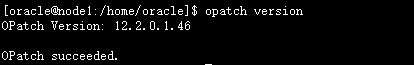

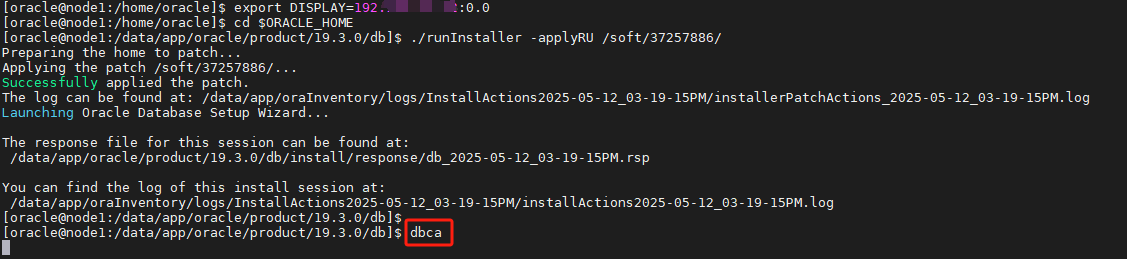

六、安裝oracle軟件(node1)+ RU

chown -R oracle:oinstall /soft

#root用戶下切換到oracle用戶

su - oracle

export DISPLAY=192.*.*.15:0.0

#進(jìn)入ORACLE_HOME目錄

cd $ORACLE_HOME

#檢查opatch版本

opatch version

##執(zhí)行安裝程序開始安裝

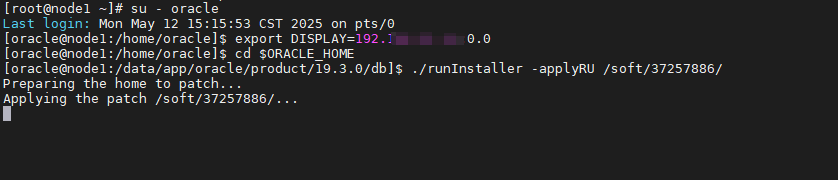

./runInstaller -applyRU /soft/37257886/

注意: 可以看到,已經(jīng)開始對(duì) ORACLE_HOME 進(jìn)行補(bǔ)丁安裝!

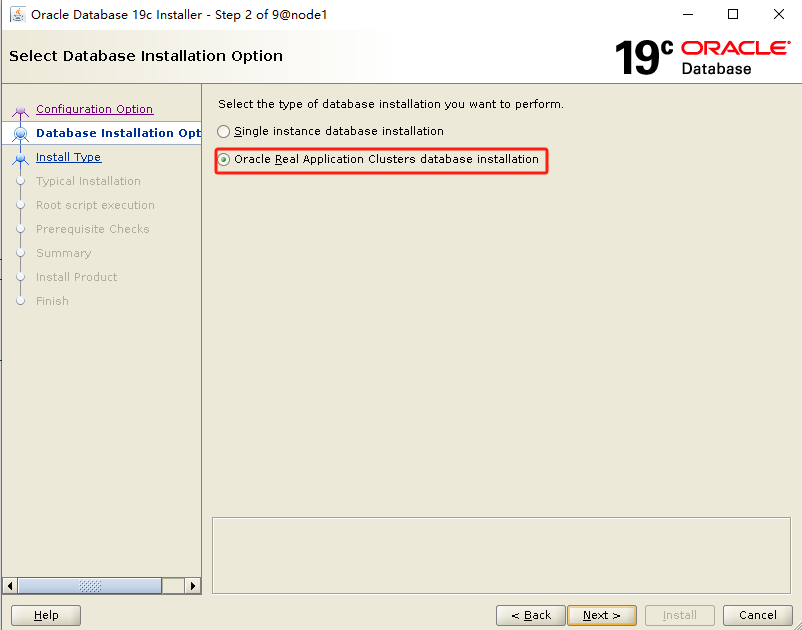

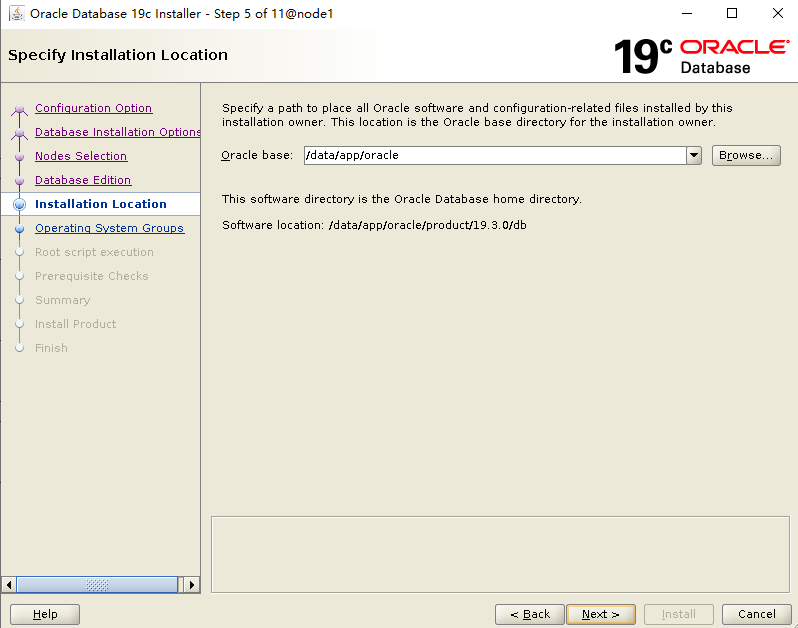

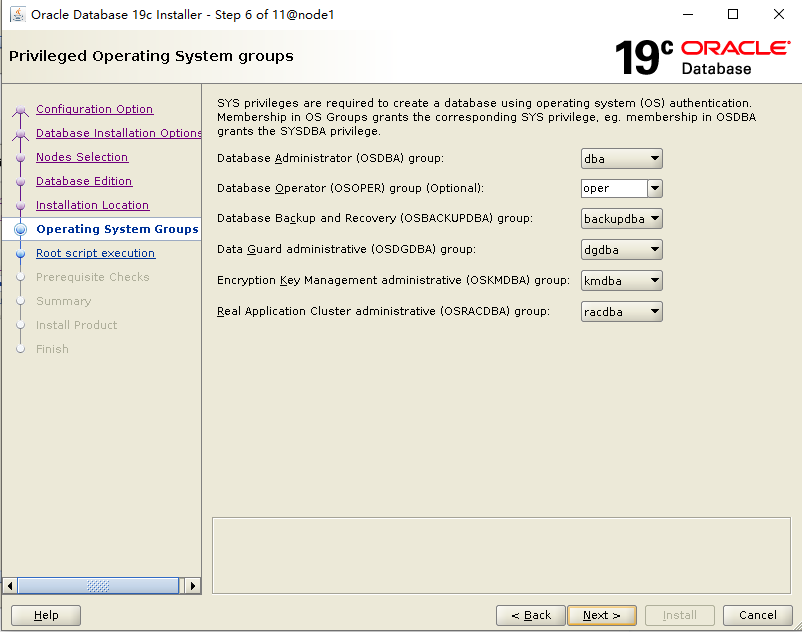

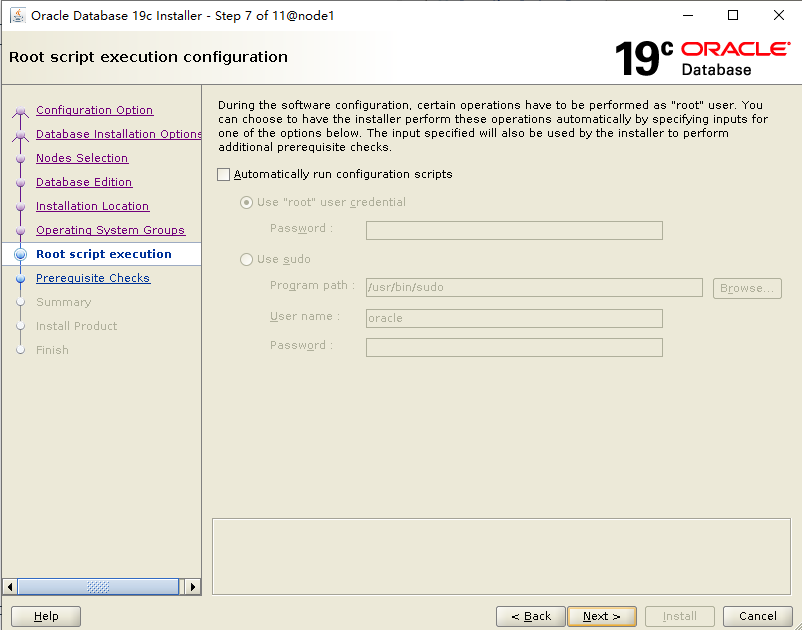

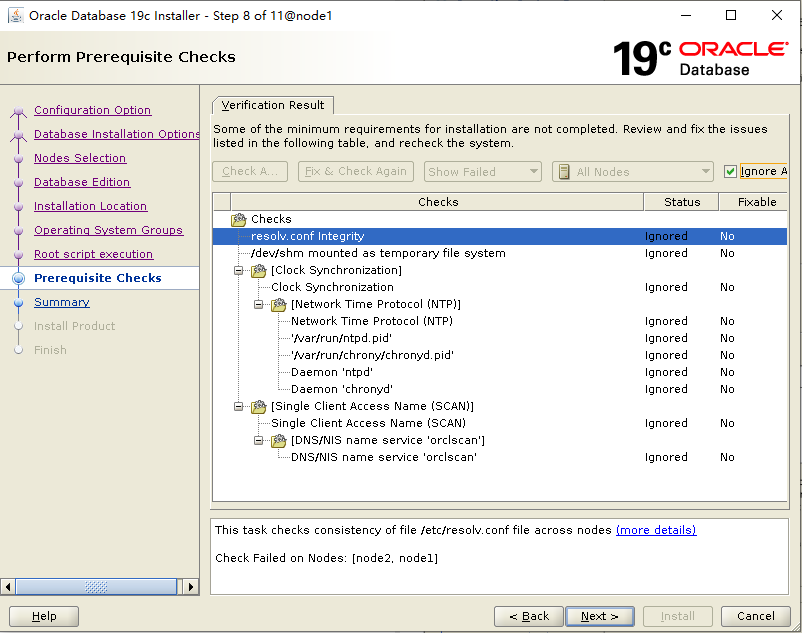

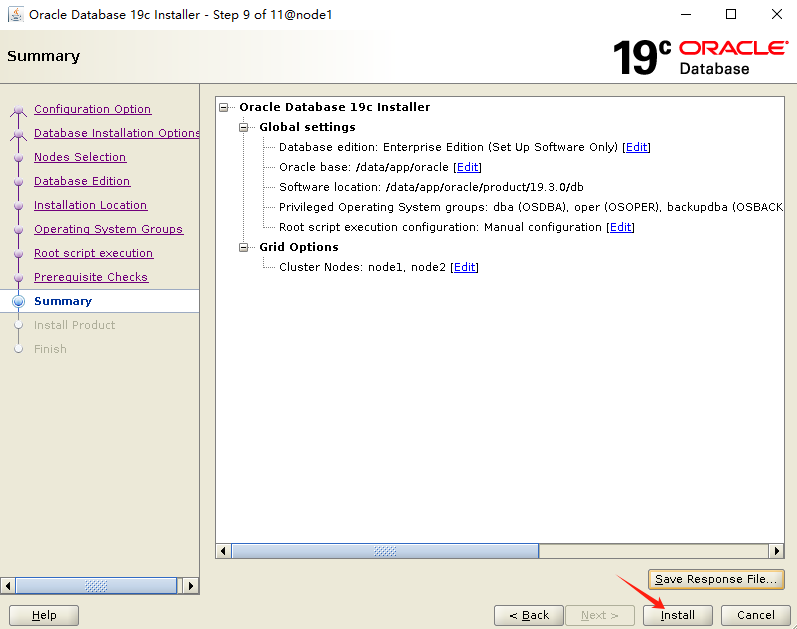

補(bǔ)丁打完,進(jìn)入安裝界面,選擇僅安裝 Oracle 軟件:

選擇集群模式:

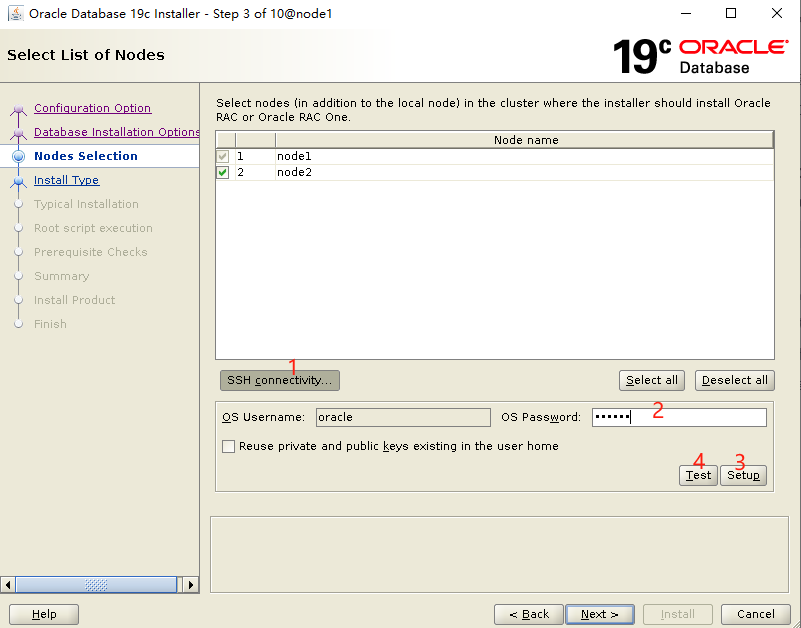

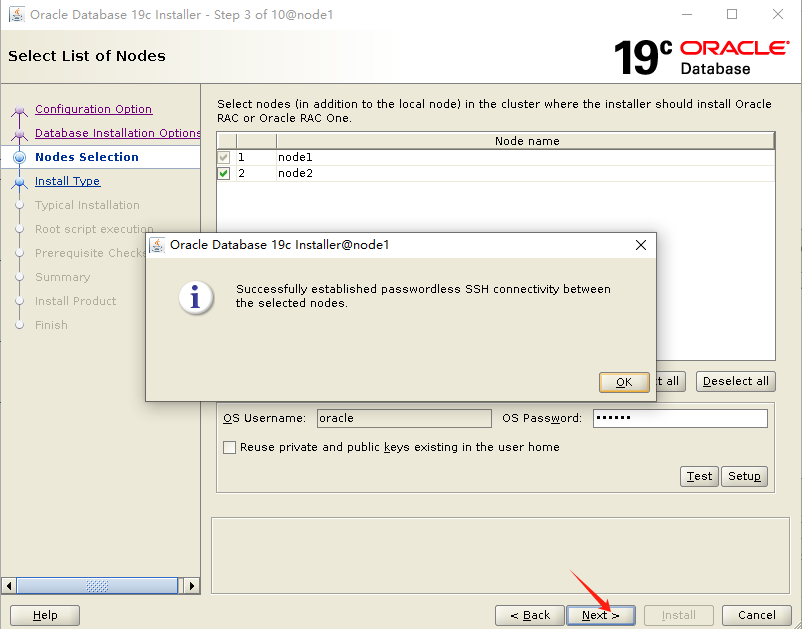

選擇節(jié)點(diǎn),設(shè)置ssh互信:

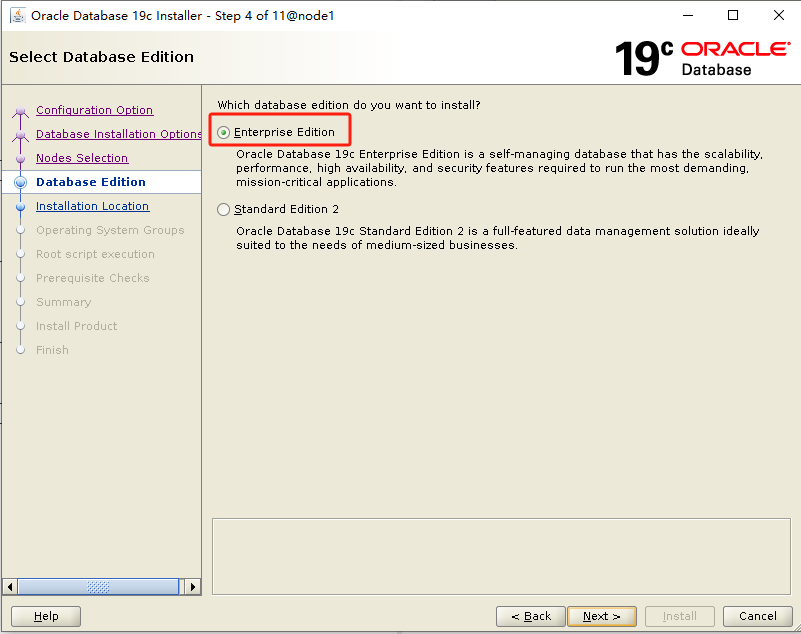

選擇企業(yè)版:

開始安裝:

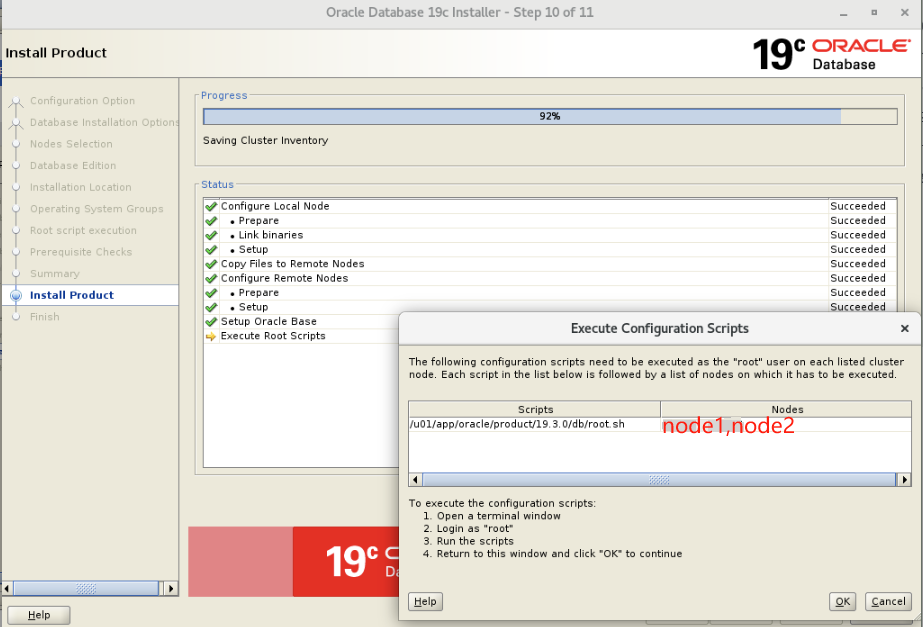

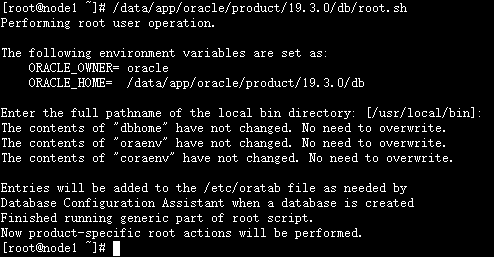

root 用戶下,兩個(gè)節(jié)點(diǎn)順序執(zhí)行 root.sh:

#節(jié)點(diǎn)1執(zhí)行

/data/app/oracle/product/19.3.0/db/root.sh

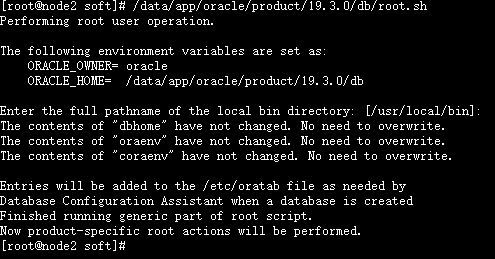

#節(jié)點(diǎn)2執(zhí)行

/data/app/oracle/product/19.3.0/db/root.sh

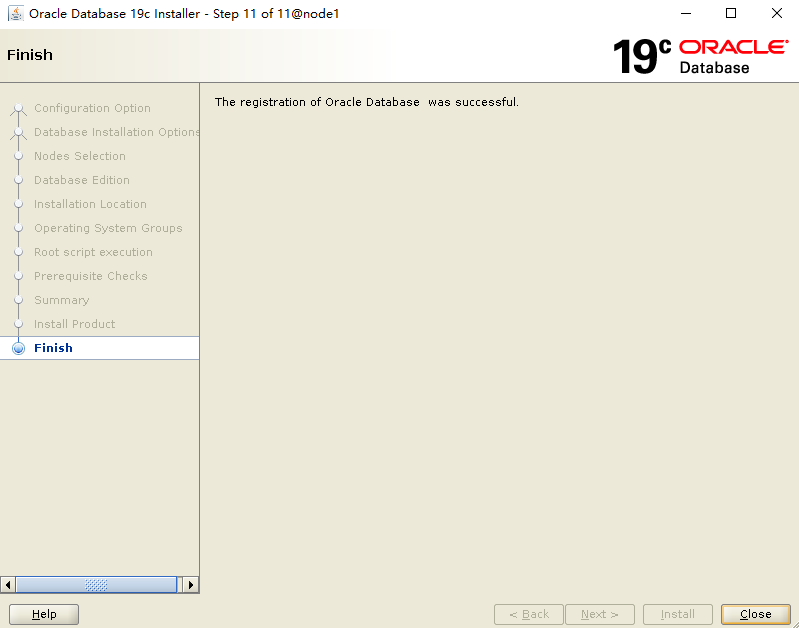

安裝完成:

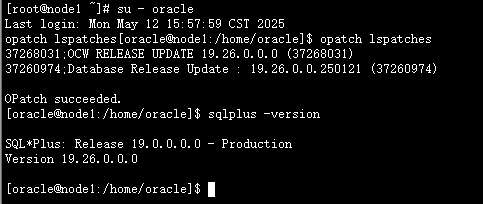

檢查補(bǔ)丁版本:

su - oracle opatch lspatches sqlplus -version

至此,Oracle 軟件已成功安裝!

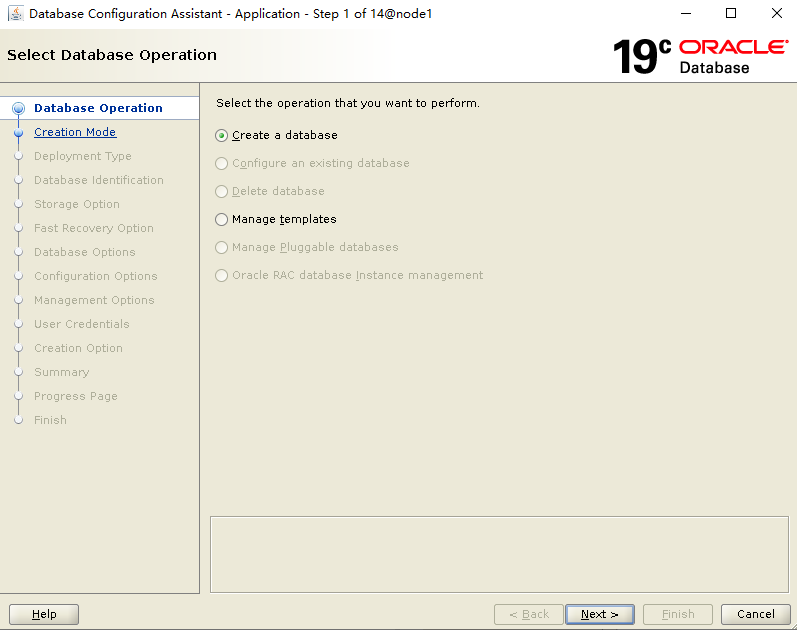

七、建庫

dbca

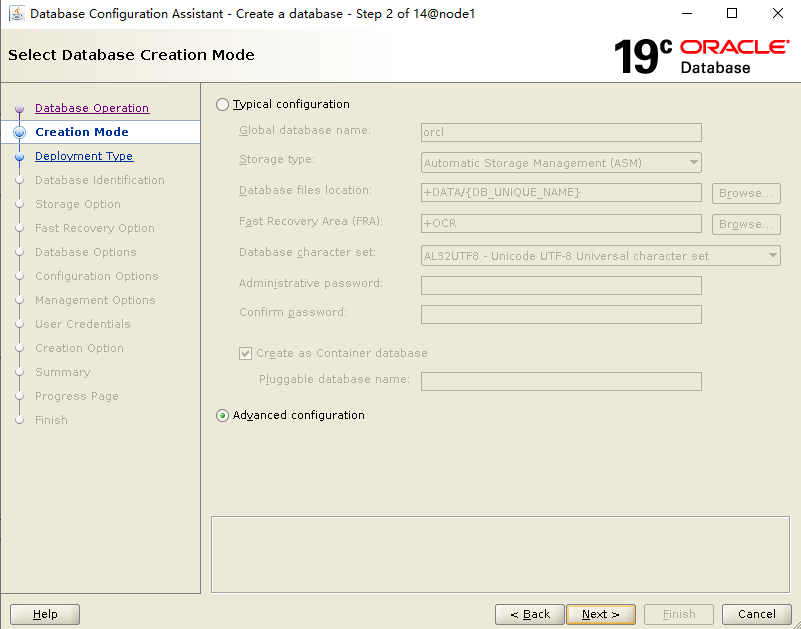

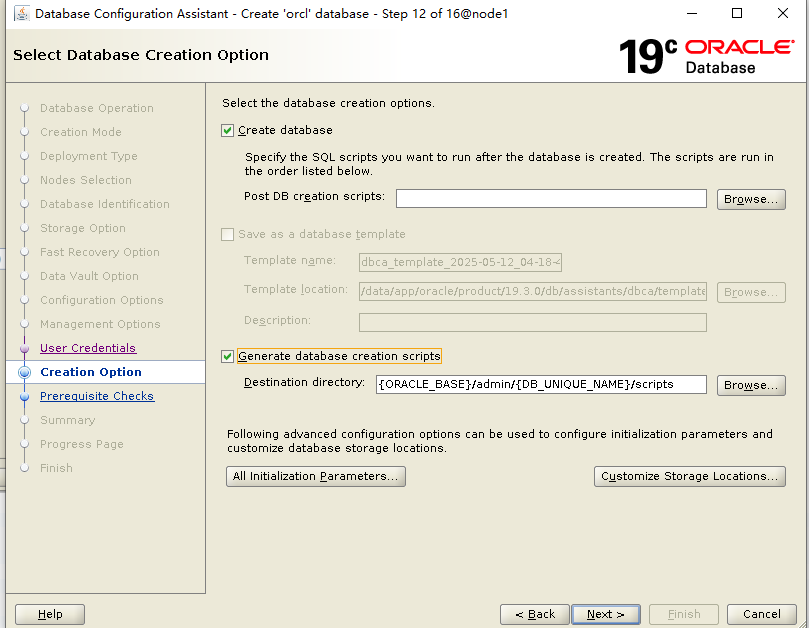

選擇Advanced configuration:

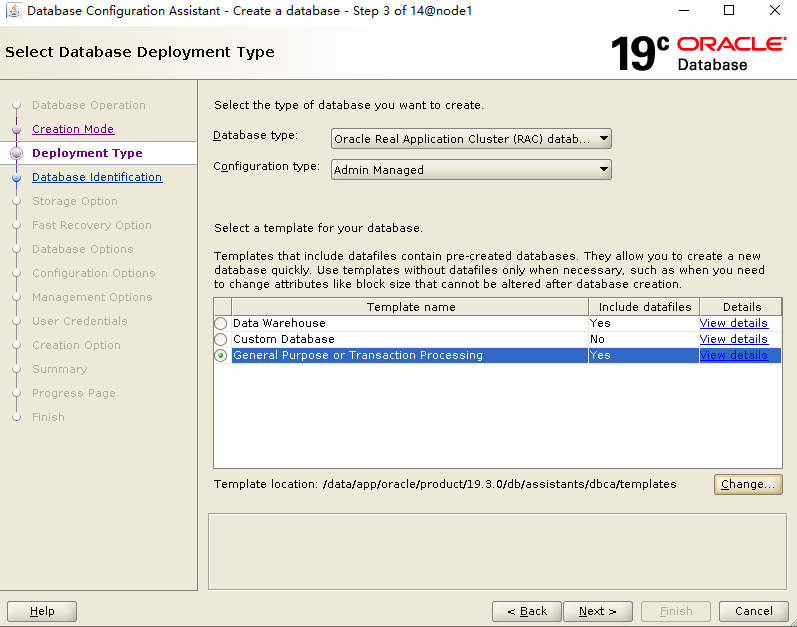

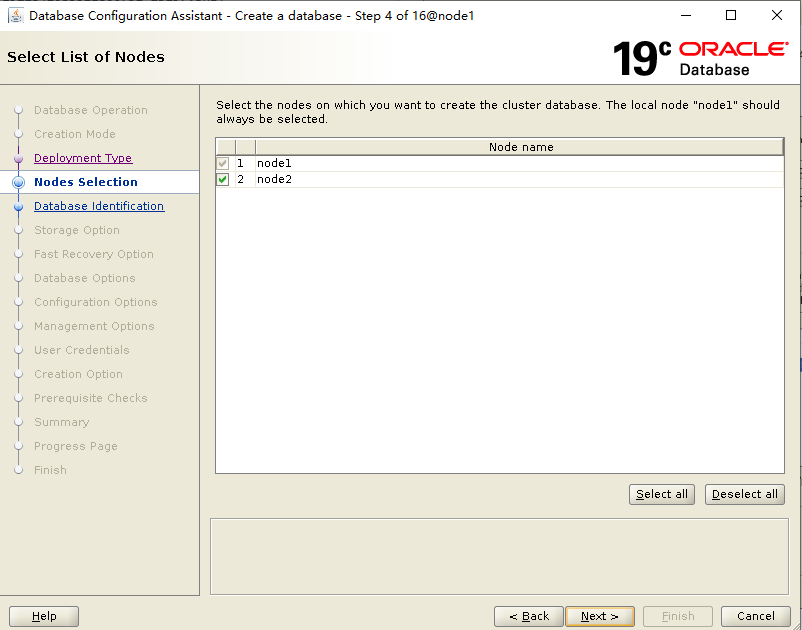

選擇節(jié)點(diǎn):

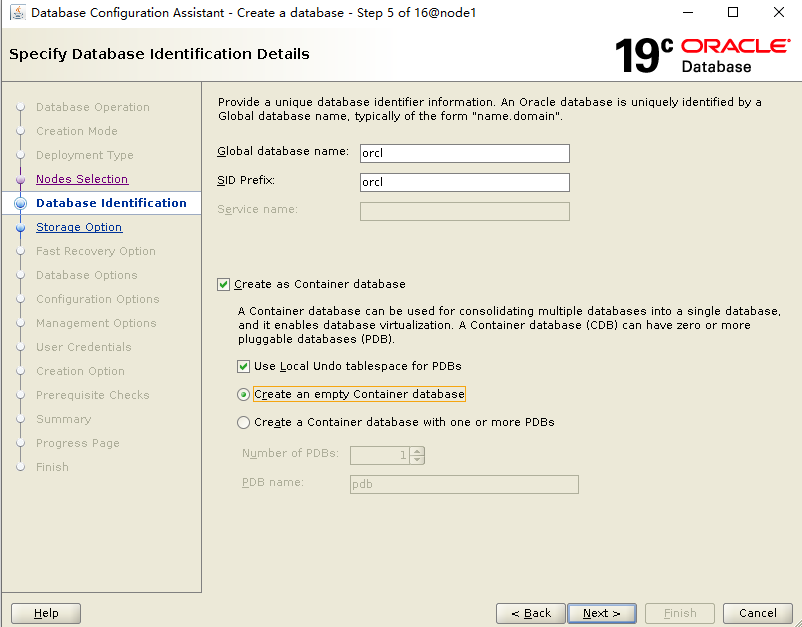

填寫實(shí)例名 orcl;選擇安裝 CDB 模式,不創(chuàng)建PDB:

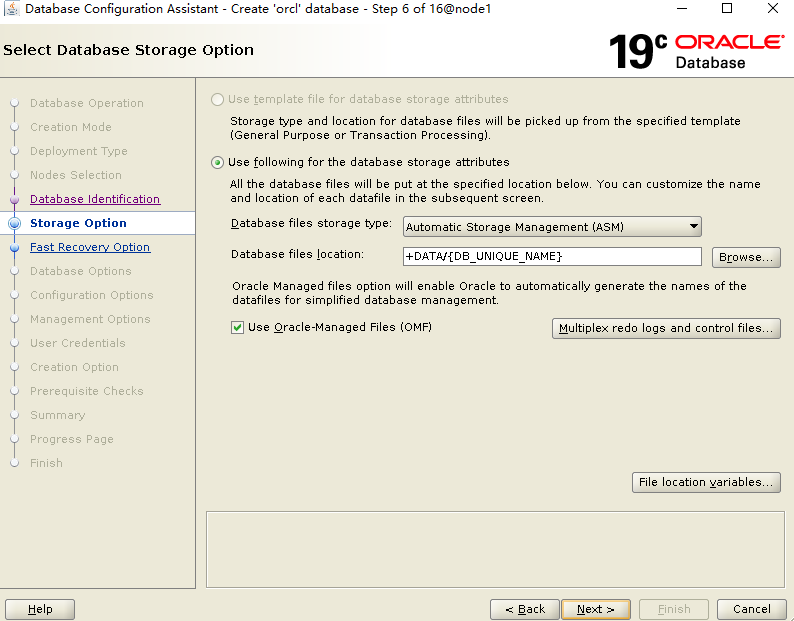

默認(rèn)即可,使用 OMF 模式:

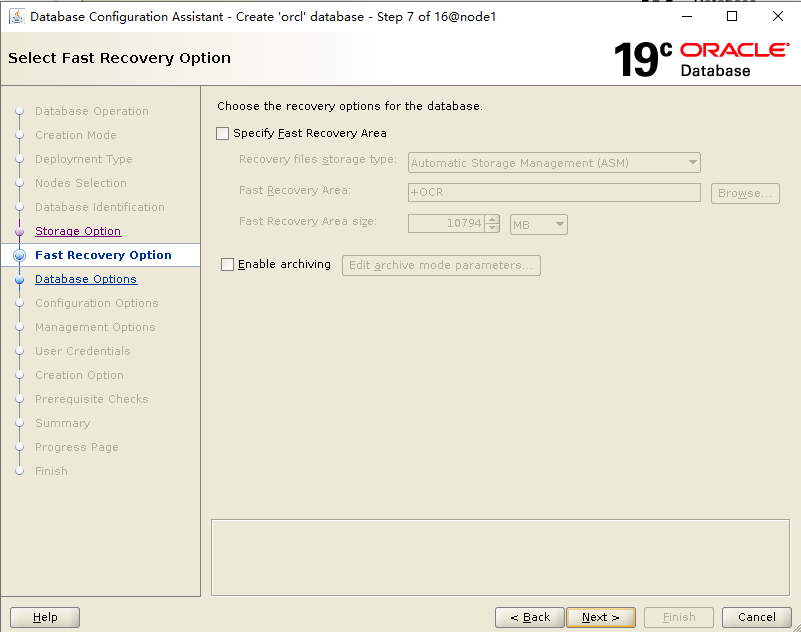

不開閃回,不開歸檔,可以建完實(shí)例后再配置:

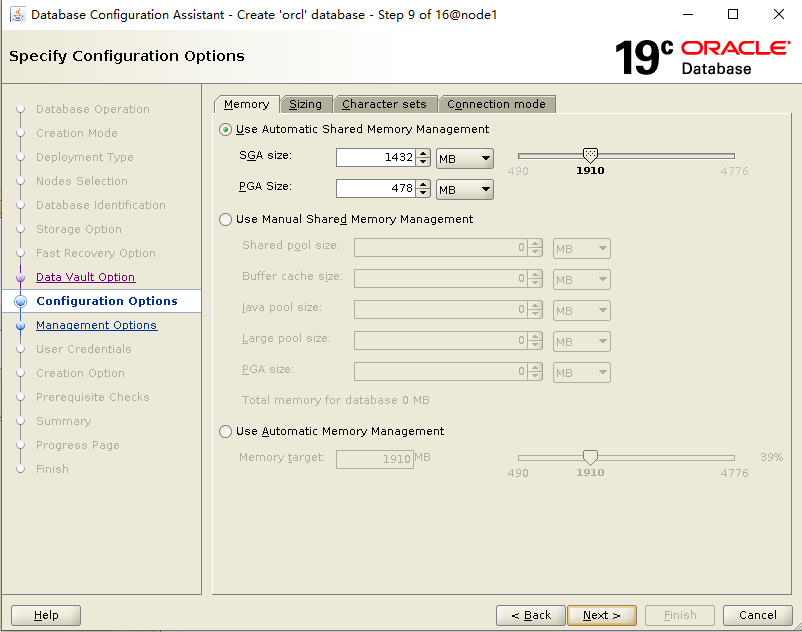

配置內(nèi)存,使用 ASMM 模式:

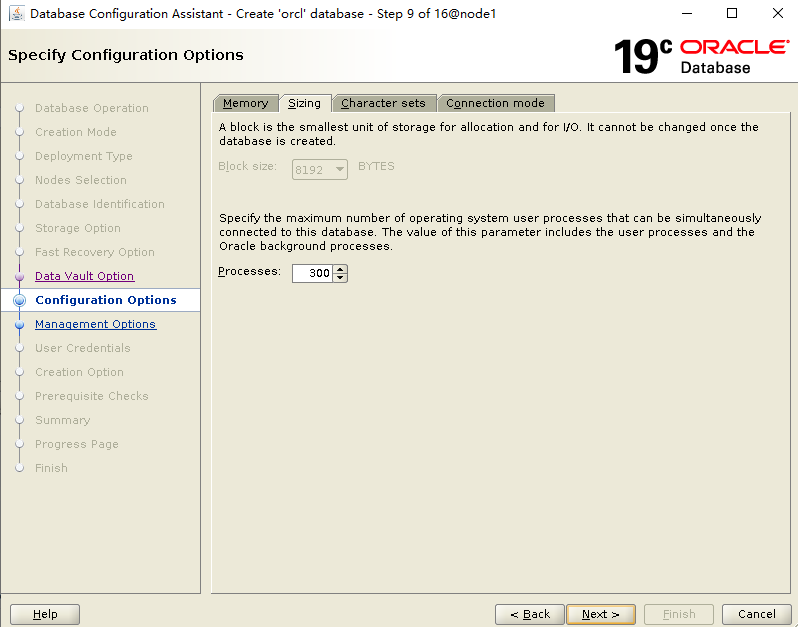

配置Processes:

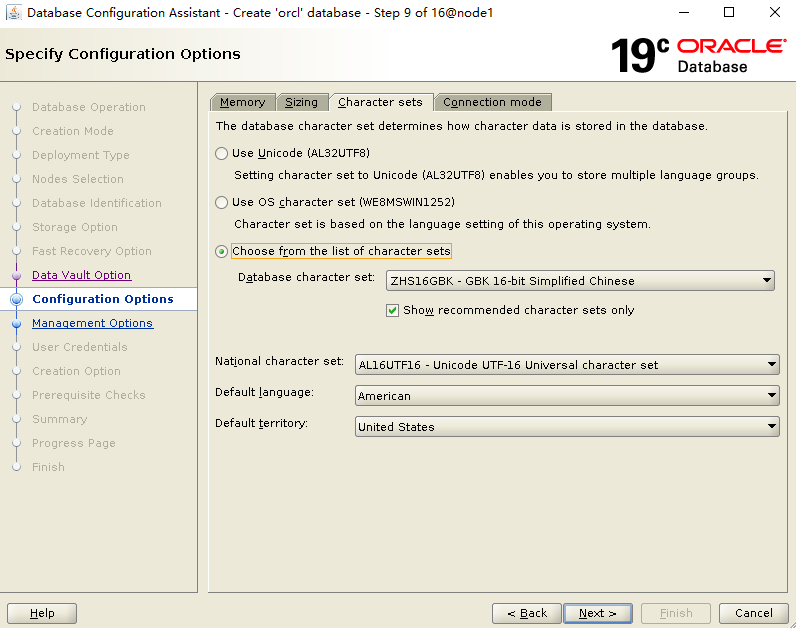

配置字符集:

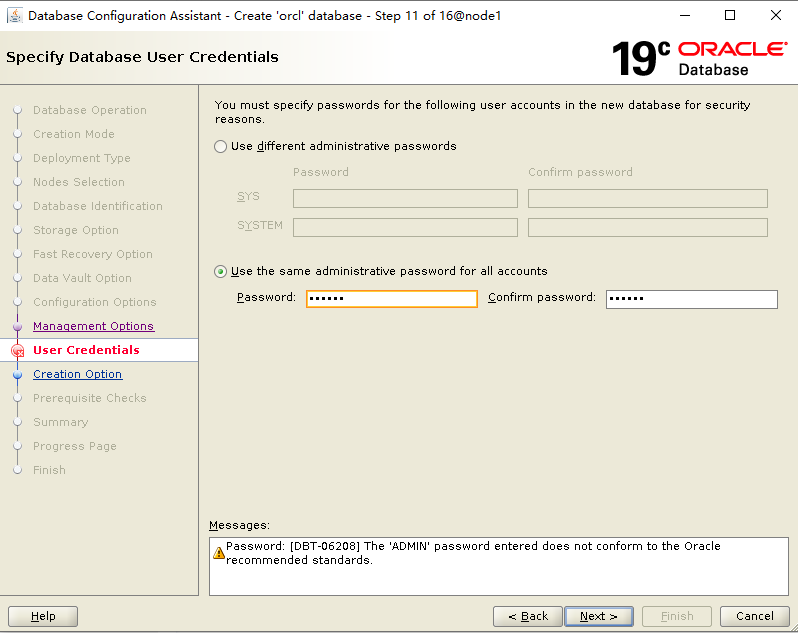

指定密碼:

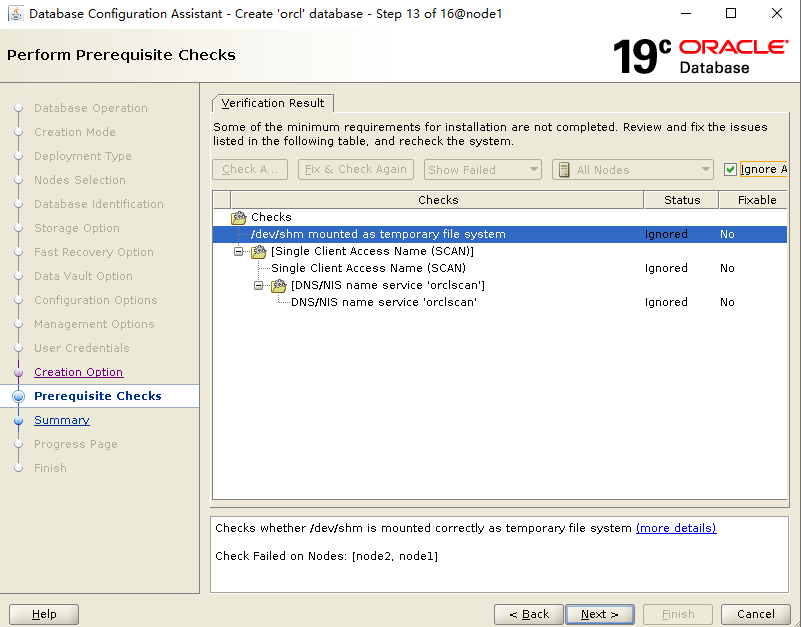

安裝預(yù)檢查,DNS 相關(guān)忽略:

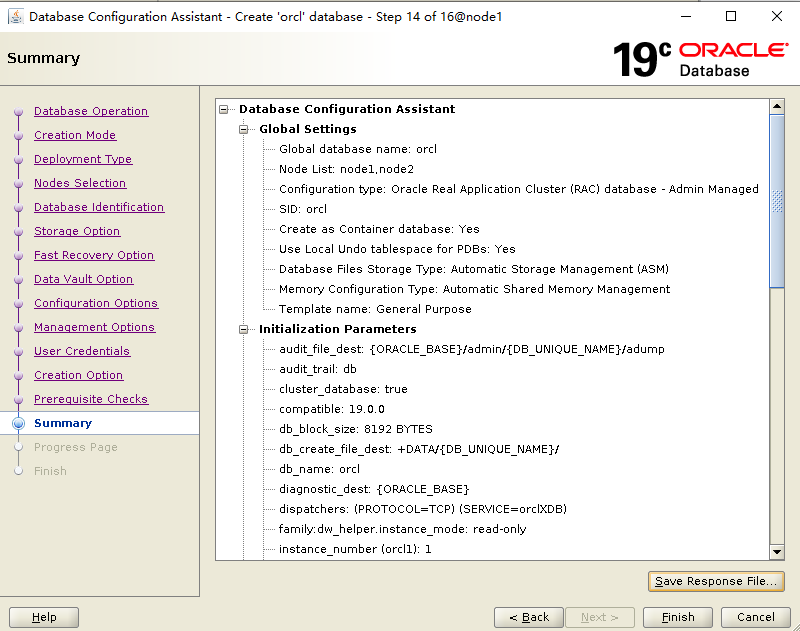

安裝匯總信息:

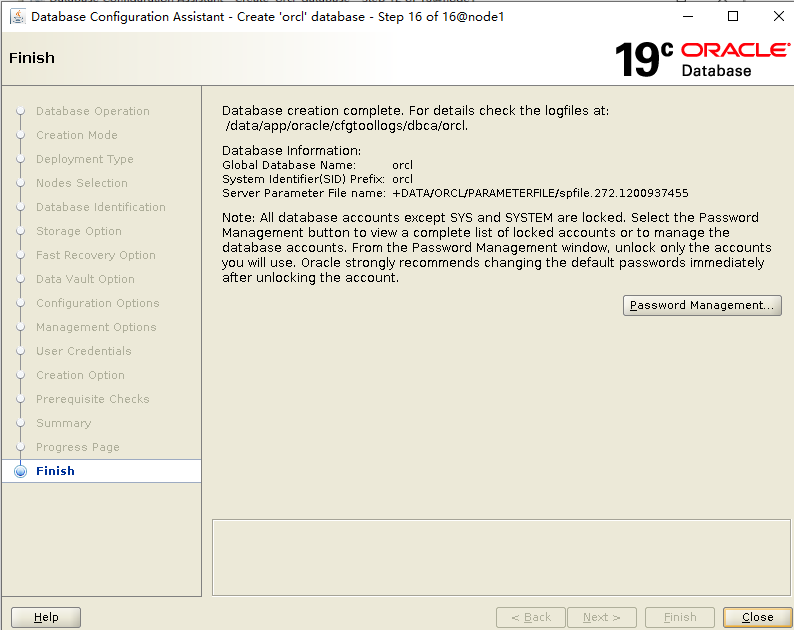

經(jīng)過漫長的等待,建庫結(jié)束了!

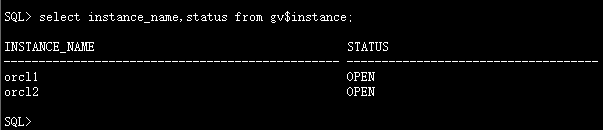

檢查數(shù)據(jù)庫實(shí)例信息:

至此,數(shù)據(jù)庫創(chuàng)建完成!

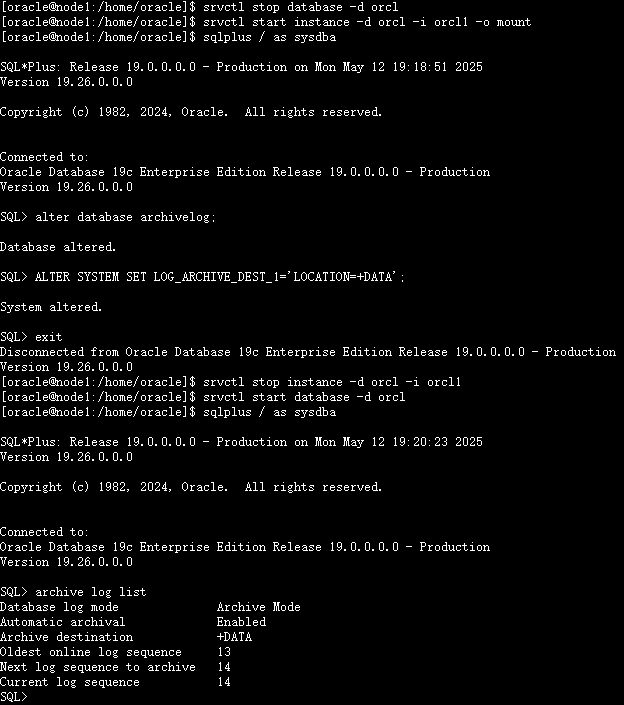

設(shè)置數(shù)據(jù)庫歸檔

1、開啟歸檔模式

#關(guān)閉數(shù)據(jù)庫實(shí)例

srvctl stop database -d orcl

#啟動(dòng)單個(gè)節(jié)點(diǎn)到mount模式

srvctl start instance -d orcl -i orcl1 -o mount

#開啟歸檔

sqlplus / as sysdba

alter database archivelog;

#設(shè)置歸檔路徑

ALTER SYSTEM SET LOG_ARCHIVE_DEST_1='LOCATION=+DATA';

exit;

##重啟數(shù)據(jù)庫實(shí)例

srvctl stop instance -d orcl -i orcl1

srvctl start database -d orcl

##檢查歸檔

sqlplus / as sysdba

archive log list

2、配置定期刪除歸檔計(jì)劃任務(wù)

##進(jìn)入oracle用戶

su - oracle

mkdir -p /home/oracle/scripts/

##寫入腳本

{

echo '#!/bin/bash'

echo 'source ~/.bash_profile'

echo 'deltime=`date +"20%y%m%d%H%M%S"`'

echo "rman target / nocatalog msglog /home/oracle/scripts/del_arch_\${deltime}.log<<EOF"

echo 'crosscheck archivelog all;'

echo "delete noprompt archivelog until time 'sysdate-7';"

echo "delete noprompt force archivelog until time 'SYSDATE-10';"

echo 'EOF'

} >>/home/oracle/scripts/del_arch.sh

chmod +x /home/oracle/scripts/del_arch.sh

切換到 oracle 用戶寫入計(jì)劃任務(wù):

cat >>/var/spool/cron/oracle<<EOF

12 00 * * * /home/oracle/scripts/del_arch.sh

EOF

##手動(dòng)執(zhí)行測(cè)試

su - oracle

/home/oracle/scripts/del_arch.sh

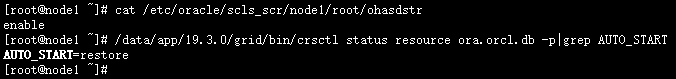

配置數(shù)據(jù)庫開機(jī)自啟

##查看數(shù)據(jù)庫是否隨機(jī)器啟動(dòng)自動(dòng)啟動(dòng)

## root用戶執(zhí)行

/data/app/19.3.0/grid/bin/crsctl status resource ora.orcl.db -p|grep AUTO_START

##查看crs是否隨機(jī)器啟動(dòng)自動(dòng)啟動(dòng)

##cat /etc/oracle/scls_scr/<node_name>/root/ohasdstr

cat /etc/oracle/scls_scr/node1/root/ohasdstr

或者使用下面命令查看crs是否隨機(jī)器啟動(dòng)自動(dòng)啟動(dòng)

crsctl query crs autostart

配置數(shù)據(jù)庫實(shí)例隨集群服務(wù)自啟動(dòng):

#root用戶下執(zhí)行

/data/app/19.3.0/grid/bin/crsctl modify resource "ora.orcl.db" -attr "AUTO_START=always" -unsupported

重啟主機(jī),測(cè)試集群都正常,至此,安裝工作結(jié)束。

八、啟動(dòng)和關(guān)閉RAC

/data/app/19.3.0/grid/bin/crsctl stop cluster -all /data/app/19.3.0/grid/bin/crsctl start cluster -all

其他命令:

-關(guān)閉\啟動(dòng)單個(gè)實(shí)例 $ srvctl stop\start instance -d orcl -i orcl1 --關(guān)閉\啟動(dòng)所有實(shí)例 $ srvctl stop\start database -d orcl --關(guān)閉\啟動(dòng)CRS $ crsctl stop\start crs --關(guān)閉\啟動(dòng)集群服務(wù) $ crsctl stop\start cluster -all crsctl start\stop crs 是單節(jié)管理 crsctl start\stop cluster [-all 所有節(jié)點(diǎn)] 可以管理多個(gè)節(jié)點(diǎn) crsctl start\stop crs 管理crs 包含進(jìn)程 OHASD crsctl start\stop cluster 不包含OHASD進(jìn)程 要先啟動(dòng) OHASD進(jìn)程才可以使用 srvctl stop\start database 啟動(dòng)\停止所有實(shí)例及其啟用的服務(wù)

九、碰到的問題

1、/usr/lib/udev/scsi_id -g -u /dev/sdc 命令獲取不到設(shè)備的UUID信息。

解決辦法:

打開虛擬機(jī)的vmx文件,加入下面內(nèi)容,并重啟虛擬機(jī):

disk.EnableUUID = "TRUE"

2、安裝grid軟件,執(zhí)行root.sh報(bào)錯(cuò):CLSRSC-184: Configuration of ASM failed ,CLSRSC-258: Failed to configure and start ASM

[root@node1 ~]# /data/app/19.3.0/grid/root.sh

Performing root user operation.

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /data/app/19.3.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Creating /etc/oratab file...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Relinking oracle with rac_on option

Using configuration parameter file: /data/app/19.3.0/grid/crs/install/crsconfig_params

The log of current session can be found at:

/data/app/grid/crsdata/node1/crsconfig/rootcrs_node1_2025-05-12_02-05-11AM.log

2025/05/12 02:05:20 CLSRSC-594: Executing installation step 1 of 19: 'ValidateEnv'.

2025/05/12 02:05:20 CLSRSC-594: Executing installation step 2 of 19: 'CheckFirstNode'.

2025/05/12 02:05:23 CLSRSC-594: Executing installation step 3 of 19: 'GenSiteGUIDs'.

2025/05/12 02:05:25 CLSRSC-594: Executing installation step 4 of 19: 'SetupOSD'.

Redirecting to /bin/systemctl restart rsyslog.service

2025/05/12 02:05:25 CLSRSC-594: Executing installation step 5 of 19: 'CheckCRSConfig'.

2025/05/12 02:05:25 CLSRSC-594: Executing installation step 6 of 19: 'SetupLocalGPNP'.

2025/05/12 02:05:42 CLSRSC-594: Executing installation step 7 of 19: 'CreateRootCert'.

2025/05/12 02:05:49 CLSRSC-594: Executing installation step 8 of 19: 'ConfigOLR'.

2025/05/12 02:06:05 CLSRSC-594: Executing installation step 9 of 19: 'ConfigCHMOS'.

2025/05/12 02:06:05 CLSRSC-594: Executing installation step 10 of 19: 'CreateOHASD'.

2025/05/12 02:06:12 CLSRSC-594: Executing installation step 11 of 19: 'ConfigOHASD'.

2025/05/12 02:06:12 CLSRSC-330: Adding Clusterware entries to file 'oracle-ohasd.service'

2025/05/12 02:06:40 CLSRSC-594: Executing installation step 12 of 19: 'SetupTFA'.

2025/05/12 02:06:40 CLSRSC-594: Executing installation step 13 of 19: 'InstallAFD'.

2025/05/12 02:06:40 CLSRSC-594: Executing installation step 14 of 19: 'InstallACFS'.

2025/05/12 02:06:50 CLSRSC-594: Executing installation step 15 of 19: 'InstallKA'.

2025/05/12 02:06:59 CLSRSC-594: Executing installation step 16 of 19: 'InitConfig'.

ASM failed to start. Check /data/app/grid/cfgtoollogs/asmca/asmca-250512AM020731.log for details.

2025/05/12 02:07:55 CLSRSC-184: Configuration of ASM failed

2025/05/12 02:08:00 CLSRSC-258: Failed to configure and start ASM

Died at /data/app/19.3.0/grid/crs/install/crsinstall.pm line 2628.

解決辦法:虛擬機(jī)內(nèi)存4G不夠用,調(diào)整到5G后root.sh成功執(zhí)行。

3、創(chuàng)建磁盤組時(shí)由于asm磁盤member狀態(tài),無法使用相應(yīng)的asm磁盤:

解決辦法:

dd if=/dev/zero of=/dev/asm_ocr bs=512 count=1024

dd if=/dev/zero of=/dev/asm_data bs=512 count=1024000

#例子說明:

dd if=/dev/zero of=/dev/asm-disk1 bs=512 count=1024

if=文件名:輸入文件名,缺省為標(biāo)準(zhǔn)輸入。即指定源文件。

of=文件名:輸出文件名,缺省為標(biāo)準(zhǔn)輸出。即指定目的文件。

bs=bytes:同時(shí)設(shè)置讀入/輸出的塊大小為bytes個(gè)字節(jié)。

count=1024指拷貝1024塊

/dev/zero是一個(gè)輸入設(shè)備,可以用來初始化文件;

4、oracle軟件安裝時(shí)異常,重新安裝時(shí)報(bào)錯(cuò):

ERROR: The home is not clean. This home cannot be used since there was a failed OPatch execution in this home. Use a different home to proceed.

解決辦法:$ORACLE_HOME/install/patch文件大小為0,刪除掉。

十、參考文檔

https://docs.oracle.com/en/database/oracle/oracle-database/19/rilin/index.html

關(guān)于作者:

網(wǎng)名:飛天,墨天輪2024年度優(yōu)秀原創(chuàng)作者,擁有 Oracle 10g OCM 認(rèn)證、PGCE認(rèn)證、MySQL 8.0 OCP認(rèn)證以及OBCA、KCP、KCSM、ACP、磐維等眾多國產(chǎn)數(shù)據(jù)庫認(rèn)證證書,目前從事Oracle、Mysql、PostgresSQL、磐維數(shù)據(jù)庫管理運(yùn)維工作,喜歡結(jié)交更多志同道合的朋友,熱衷于研究、分享數(shù)據(jù)庫技術(shù)。

微信公眾號(hào):飛天online

墨天輪:http://www.sunline.cc/u/15197

如有任何疑問,歡迎大家留言,共同探討~~~