目錄

- 前言

- 將Oracle數(shù)據(jù)庫的MBPS&IOPS By Function查詢導(dǎo)入Vertica數(shù)據(jù)庫

- 用Tableau可視化Vertica數(shù)據(jù)庫的表

- 參考內(nèi)容

前言

- 什么是Vertica數(shù)據(jù)庫(英文版)

- 用Tableau可視化Vertica數(shù)據(jù)庫之“DB Time”篇

- 用Tableau可視化Vertica數(shù)據(jù)庫之“LPS&TPS”篇

DESC v$iostat_function

Name Null? Type

----------------------------------------------------------------- -------- --------------------------------------------

FUNCTION_ID NUMBER

FUNCTION_NAME VARCHAR2(18)

SMALL_READ_MEGABYTES NUMBER

SMALL_WRITE_MEGABYTES NUMBER

LARGE_READ_MEGABYTES NUMBER

LARGE_WRITE_MEGABYTES NUMBER

SMALL_READ_REQS NUMBER

SMALL_WRITE_REQS NUMBER

LARGE_READ_REQS NUMBER

LARGE_WRITE_REQS NUMBER

NUMBER_OF_WAITS NUMBER

WAIT_TIME NUMBER其中,我們使用SQL語句查詢V$IOSTAT_FUNCTION中的FUNCTION_ID和FUNCTION_NAME后發(fā)現(xiàn)Oracle的IO按FUNCTION分類總共有14個(gè)不同的類別,請(qǐng)看下面的查詢語句和結(jié)果:

SET PAGESIZE 30

COLUMN function_name FOR a18

SELECT function_id, function_name FROM v$iostat_function ORDER BY 1;

FUNCTION_ID FUNCTION_NAME

----------- ------------------------------------

0 RMAN

1 DBWR

2 LGWR

3 ARCH

4 XDB

5 Streams AQ

6 Data Pump

7 Recovery

8 Buffer Cache Reads

9 Direct Reads

10 Direct Writes

11 Smart Scan

12 Archive Manager

13 Others

14 rows selected.因此,我們需要從兩個(gè)維度(最近1分鐘和最近1小時(shí))可視化我們所提到的14個(gè)類別(在圖表中也可將其稱作“圖例”)的IO(IO分為MBPS和IOPS兩類,即每秒的IO讀寫容量和每秒的IO讀寫請(qǐng)求)情況。其中,最近1分鐘的數(shù)據(jù)保存在動(dòng)態(tài)性能視圖V$IOFUNCMETRIC里,最近1小時(shí)的數(shù)據(jù)保存在動(dòng)態(tài)性能視圖V$IOFUNCMETRIC_HISTORY上。所以兩個(gè)維度和兩個(gè)IO類別的互相組合,我們將要使用四個(gè)SQL查詢來實(shí)現(xiàn)我們的業(yè)務(wù)需求。

將Oracle數(shù)據(jù)庫的MBPS&IOPS By Function查詢導(dǎo)入Vertica數(shù)據(jù)庫

- 將Oracle數(shù)據(jù)庫的MBPS&IOPS By Function查詢保存為CSV文件

- 將所有CSV文件上傳到Vertica服務(wù)器的/home/dbadmin目錄下

- 用vsql客戶端命令連接到Vertica數(shù)據(jù)庫

- 在Vertica數(shù)據(jù)庫中創(chuàng)建相關(guān)的MBPS&IOPS By Function表

- 使用COPY命令將CSV文件導(dǎo)入剛創(chuàng)建的表中

將Oracle數(shù)據(jù)庫的MBPS&IOPS By Function查詢保存為CSV文件

和前兩篇文章的方法相同,我們?cè)?b>SQL Develooper中分別以腳本方式運(yùn)行下面的四個(gè)SQL查詢并將其保存為CSV文件。具體的操作步驟有些繁瑣,所以這里只貼出SQL代碼,依次(首先,最近1分鐘和最近1小時(shí)的MBPS;其次,最近1分鐘和最近1小時(shí)的IOPS)如下所示:

-- Converting rows to columns Based on I/O Megabytes per Second in Last 1 Minute.

-- Vertical Axis Name: MB Per Sec

SET FEEDBACK off;

SET SQLFORMAT csv;

SET LINESIZE 200

SET PAGESIZE 10

COLUMN sample_time FORMAT a11

COLUMN function_name FORMAT a18

COLUMN io_mbps FORMAT 999,999,999.999

ALTER SESSION SET nls_date_format = 'yyyy-mm-dd hh24:mi:ss';

WITH ifm AS

(

SELECT TO_CHAR(end_time, 'yyyy-mm-dd hh24:mi:ss') sample_time

, function_name

, ROUND((small_read_mbps+small_write_mbps+large_read_mbps+large_write_mbps), 3) io_mbps

FROM v$iofuncmetric

)

SELECT * FROM ifm

PIVOT ( MAX(io_mbps)

FOR function_name IN

( 'Buffer Cache Reads' AS "Buffer Cache Reads"

, 'Direct Reads' AS "Direct Reads"

, 'Direct Writes' AS "Direct Writes"

, 'DBWR' AS "DBWR"

, 'LGWR' AS "LGWR"

, 'ARCH' AS "ARCH"

, 'RMAN' AS "RMAN"

, 'Recovery' AS "Recovery"

, 'Data Pump' AS "Data Pump"

, 'Streams AQ' AS "Streams AQ"

, 'XDB' AS "XDB"

, 'Others' AS "Others"

, 'Archive Manager' AS "Archive Manager"

, 'Smart Scan' AS "Smart Scan"

)

)

ORDER BY sample_time

;-- Converting rows to columns Based on I/O Megabytes per Second in Last 1 Hour (interval by each minute).

-- Vertical Axis Name: MB Per Sec

SET FEEDBACK off;

SET SQLFORMAT csv;

SET LINESIZE 200

SET PAGESIZE 80

COLUMN sample_time FORMAT a11

COLUMN function_name FORMAT a18

COLUMN io_mbps FORMAT 999,999,999.999

ALTER SESSION SET nls_date_format = 'yyyy-mm-dd hh24:mi:ss';

WITH ifmh AS

(

SELECT TO_CHAR(end_time, 'yyyy-mm-dd hh24:mi:ss') sample_time

, function_name

, ROUND((small_read_mbps+small_write_mbps+large_read_mbps+large_write_mbps), 3) io_mbps

FROM v$iofuncmetric_history

)

SELECT * FROM ifmh

PIVOT ( MAX(io_mbps)

FOR function_name IN

( 'Buffer Cache Reads' AS "Buffer Cache Reads"

, 'Direct Reads' AS "Direct Reads"

, 'Direct Writes' AS "Direct Writes"

, 'DBWR' AS "DBWR"

, 'LGWR' AS "LGWR"

, 'ARCH' AS "ARCH"

, 'RMAN' AS "RMAN"

, 'Recovery' AS "Recovery"

, 'Data Pump' AS "Data Pump"

, 'Streams AQ' AS "Streams AQ"

, 'XDB' AS "XDB"

, 'Others' AS "Others"

, 'Archive Manager' AS "Archive Manager"

, 'Smart Scan' AS "Smart Scan"

)

)

ORDER BY sample_time

;-- Converting rows to columns Based on I/O Requests per Second in Last 1 Minute.

-- Horizontal Axis Name: I/O Per Sec

SET FEEDBACK off;

SET SQLFORMAT csv;

SET LINESIZE 200

SET PAGESIZE 10

COLUMN sample_time FORMAT a11

COLUMN function_name FORMAT a18

COLUMN iops FORMAT 999,999,999.999

ALTER SESSION SET nls_date_format = 'yyyy-mm-dd hh24:mi:ss';

WITH ifm AS

(

SELECT TO_CHAR(end_time, 'yyyy-mm-dd hh24:mi:ss') sample_time

, function_name

, ROUND((small_read_iops+small_write_iops+large_read_iops+large_write_iops), 3) iops

FROM v$iofuncmetric

)

SELECT * FROM ifm

PIVOT ( MAX(iops)

FOR function_name IN

( 'Buffer Cache Reads' AS "Buffer Cache Reads"

, 'Direct Reads' AS "Direct Reads"

, 'Direct Writes' AS "Direct Writes"

, 'DBWR' AS "DBWR"

, 'LGWR' AS "LGWR"

, 'ARCH' AS "ARCH"

, 'RMAN' AS "RMAN"

, 'Recovery' AS "Recovery"

, 'Data Pump' AS "Data Pump"

, 'Streams AQ' AS "Streams AQ"

, 'XDB' AS "XDB"

, 'Others' AS "Others"

, 'Archive Manager' AS "Archive Manager"

, 'Smart Scan' AS "Smart Scan"

)

)

ORDER BY sample_time

;-- Converting rows to columns Based on I/O Requests per Second in Last 1 Hour (interval by each minute).

-- Horizontal Axis Name: I/O Per Sec

SET FEEDBACK off;

SET SQLFORMAT csv;

SET LINESIZE 200

SET PAGESIZE 80

COLUMN sample_time FORMAT a11

COLUMN function_name FORMAT a18

COLUMN iops FORMAT 999,999,999.999

ALTER SESSION SET nls_date_format = 'yyyy-mm-dd hh24:mi:ss';

WITH ifmh AS

(

SELECT TO_CHAR(end_time, 'yyyy-mm-dd hh24:mi:ss') sample_time

, function_name

, ROUND((small_read_iops+small_write_iops+large_read_iops+large_write_iops), 3) iops

FROM v$iofuncmetric_history

)

SELECT * FROM ifmh

PIVOT ( MAX(iops)

FOR function_name IN

( 'Buffer Cache Reads' AS "Buffer Cache Reads"

, 'Direct Reads' AS "Direct Reads"

, 'Direct Writes' AS "Direct Writes"

, 'DBWR' AS "DBWR"

, 'LGWR' AS "LGWR"

, 'ARCH' AS "ARCH"

, 'RMAN' AS "RMAN"

, 'Recovery' AS "Recovery"

, 'Data Pump' AS "Data Pump"

, 'Streams AQ' AS "Streams AQ"

, 'XDB' AS "XDB"

, 'Others' AS "Others"

, 'Archive Manager' AS "Archive Manager"

, 'Smart Scan' AS "Smart Scan"

)

)

ORDER BY sample_time

;由于CSV文件的內(nèi)容過多,所以我把它們分別上傳到了我的GitHub,您可以查看這4個(gè)文件:crtc_oracle_io_mbps_in_last_1_minute.csv,crtc_oracle_io_mbps_in_last_1_hour.csv,crtc_oracle_iops_in_last_1_minute.csv和crtc_oracle_iops_in_last_1_hour.csv。

將所有CSV文件上傳到Vertica服務(wù)器的/home/dbadmin目錄下

這里,省略具體的上傳步驟和相關(guān)授權(quán)操作,最終的上傳結(jié)果如下所示(用“<<==”標(biāo)明):

[dbadmin@test ~]$ ls -lrht

total 184K

drwxr-xr-x 5 dbadmin verticadba 134 Dec 15 14:06 vdb_oracle_perf

......

-rw-r--r-- 1 dbadmin verticadba 225 Dec 23 10:54 crtc_oracle_io_mbps_in_last_1_minute.csv <<==

-rw-r--r-- 1 dbadmin verticadba 3.0K Dec 23 10:56 crtc_oracle_io_mbps_in_last_1_hour.csv <<==

-rw-r--r-- 1 dbadmin verticadba 233 Dec 23 10:57 crtc_oracle_iops_in_last_1_minute.csv <<==

-rw-r--r-- 1 dbadmin verticadba 3.6K Dec 23 10:59 crtc_oracle_iops_in_last_1_hour.csv <<==用vsql客戶端命令連接到Vertica數(shù)據(jù)庫

用Linux命令su切換Vertica數(shù)據(jù)庫服務(wù)器的root用戶到dbadmin用戶,然后用vsql命令進(jìn)行連接,下面是具體的操作過程:

[root@test ~]# su - dbadmin

[dbadmin@test ~]$

[dbadmin@test ~]$ /opt/vertica/bin/vsql -h 127.0.0.1 vdb_oracle_perf dbadmin

Password:

Welcome to vsql, the Vertica Analytic Database interactive terminal.

Type: \h or \? for help with vsql commands

\g or terminate with semicolon to execute query

\q to quit

vdb_oracle_perf=> 在Vertica數(shù)據(jù)庫中創(chuàng)建相關(guān)的MBPS&IOPS By Function表

在public的schema下,分別創(chuàng)建表crtc_oracle_io_mbps_in_last_1_minute,crtc_oracle_io_mbps_in_last_1_hour,crtc_oracle_iops_in_last_1_minute和crtc_oracle_iops_in_last_1_hour。

vdb_oracle_perf=>

vdb_oracle_perf=> CREATE TABLE public.crtc_oracle_io_mbps_in_last_1_minute

vdb_oracle_perf-> (sample_time TIMESTAMP,

vdb_oracle_perf(> buffer_cache_reads NUMBER(12,3),

vdb_oracle_perf(> direct_reads NUMBER(12,3),

vdb_oracle_perf(> direct_writes NUMBER(12,3),

vdb_oracle_perf(> dbwr NUMBER(12,3),

vdb_oracle_perf(> lgwr NUMBER(12,3),

vdb_oracle_perf(> arch NUMBER(12,3),

vdb_oracle_perf(> rman NUMBER(12,3),

vdb_oracle_perf(> recovery NUMBER(12,3),

vdb_oracle_perf(> data_pump NUMBER(12,3),

vdb_oracle_perf(> streams_aq NUMBER(12,3),

vdb_oracle_perf(> xdb NUMBER(12,3),

vdb_oracle_perf(> others NUMBER(12,3),

vdb_oracle_perf(> archive_manager NUMBER(12,3),

vdb_oracle_perf(> smart_scan NUMBER(12,3)

vdb_oracle_perf(> );

CREATE TABLE

vdb_oracle_perf=> vdb_oracle_perf=>

vdb_oracle_perf=> CREATE TABLE public.crtc_oracle_io_mbps_in_last_1_hour

vdb_oracle_perf-> (sample_time TIMESTAMP,

vdb_oracle_perf(> buffer_cache_reads NUMBER(12,3),

vdb_oracle_perf(> direct_reads NUMBER(12,3),

vdb_oracle_perf(> direct_writes NUMBER(12,3),

vdb_oracle_perf(> dbwr NUMBER(12,3),

vdb_oracle_perf(> lgwr NUMBER(12,3),

vdb_oracle_perf(> arch NUMBER(12,3),

vdb_oracle_perf(> rman NUMBER(12,3),

vdb_oracle_perf(> recovery NUMBER(12,3),

vdb_oracle_perf(> data_pump NUMBER(12,3),

vdb_oracle_perf(> streams_aq NUMBER(12,3),

vdb_oracle_perf(> xdb NUMBER(12,3),

vdb_oracle_perf(> others NUMBER(12,3),

vdb_oracle_perf(> archive_manager NUMBER(12,3),

vdb_oracle_perf(> smart_scan NUMBER(12,3)

vdb_oracle_perf(> );

CREATE TABLE

vdb_oracle_perf=> vdb_oracle_perf=>

vdb_oracle_perf=> CREATE TABLE public.crtc_oracle_iops_in_last_1_minute

vdb_oracle_perf-> (sample_time TIMESTAMP,

vdb_oracle_perf(> buffer_cache_reads NUMBER(12,3),

vdb_oracle_perf(> direct_reads NUMBER(12,3),

vdb_oracle_perf(> direct_writes NUMBER(12,3),

vdb_oracle_perf(> dbwr NUMBER(12,3),

vdb_oracle_perf(> lgwr NUMBER(12,3),

vdb_oracle_perf(> arch NUMBER(12,3),

vdb_oracle_perf(> rman NUMBER(12,3),

vdb_oracle_perf(> recovery NUMBER(12,3),

vdb_oracle_perf(> data_pump NUMBER(12,3),

vdb_oracle_perf(> streams_aq NUMBER(12,3),

vdb_oracle_perf(> xdb NUMBER(12,3),

vdb_oracle_perf(> others NUMBER(12,3),

vdb_oracle_perf(> archive_manager NUMBER(12,3),

vdb_oracle_perf(> smart_scan NUMBER(12,3)

vdb_oracle_perf(> );

CREATE TABLE

vdb_oracle_perf=> vdb_oracle_perf=>

vdb_oracle_perf=> CREATE TABLE public.crtc_oracle_iops_in_last_1_hour

vdb_oracle_perf-> (sample_time TIMESTAMP,

vdb_oracle_perf(> buffer_cache_reads NUMBER(12,3),

vdb_oracle_perf(> direct_reads NUMBER(12,3),

vdb_oracle_perf(> direct_writes NUMBER(12,3),

vdb_oracle_perf(> dbwr NUMBER(12,3),

vdb_oracle_perf(> lgwr NUMBER(12,3),

vdb_oracle_perf(> arch NUMBER(12,3),

vdb_oracle_perf(> rman NUMBER(12,3),

vdb_oracle_perf(> recovery NUMBER(12,3),

vdb_oracle_perf(> data_pump NUMBER(12,3),

vdb_oracle_perf(> streams_aq NUMBER(12,3),

vdb_oracle_perf(> xdb NUMBER(12,3),

vdb_oracle_perf(> others NUMBER(12,3),

vdb_oracle_perf(> archive_manager NUMBER(12,3),

vdb_oracle_perf(> smart_scan NUMBER(12,3)

vdb_oracle_perf(> );

CREATE TABLE

vdb_oracle_perf=> 使用COPY命令將CSV文件導(dǎo)入剛創(chuàng)建的表中

在上一步操作中,我們已經(jīng)創(chuàng)建成功了4個(gè)表。現(xiàn)在我們用COPY命令將上傳到Vertica數(shù)據(jù)庫服務(wù)器的4個(gè)CSV文件分別導(dǎo)入到那4個(gè)表中。操作步驟依次為:

vdb_oracle_perf=>

vdb_oracle_perf=> COPY public.crtc_oracle_io_mbps_in_last_1_minute FROM '/home/dbadmin/crtc_oracle_io_mbps_in_last_1_minute.csv' EXCEPTIONS '/home/dbadmin/imp_io_mbps_1.log' DELIMITER AS ',';

NOTICE 7850: In a multi-threaded load, rejected record data may be written to additional files

HINT: Exceptions may be written to files [/home/dbadmin/imp_io_mbps_1.log], [/home/dbadmin/imp_io_mbps_1.log.1], etc

Rows Loaded

-------------

1

(1 row)vdb_oracle_perf=>

vdb_oracle_perf=> COPY public.crtc_oracle_io_mbps_in_last_1_hour FROM '/home/dbadmin/crtc_oracle_io_mbps_in_last_1_hour.csv' EXCEPTIONS '/home/dbadmin/imp_io_mbps_2.log' DELIMITER AS ',';

NOTICE 7850: In a multi-threaded load, rejected record data may be written to additional files

HINT: Exceptions may be written to files [/home/dbadmin/imp_io_mbps_2.log], [/home/dbadmin/imp_io_mbps_2.log.1], etc

Rows Loaded

-------------

61

(1 row)vdb_oracle_perf=>

vdb_oracle_perf=> COPY public.crtc_oracle_iops_in_last_1_minute FROM '/home/dbadmin/crtc_oracle_iops_in_last_1_minute.csv' EXCEPTIONS '/home/dbadmin/imp_iops_1.log' DELIMITER AS ',';

NOTICE 7850: In a multi-threaded load, rejected record data may be written to additional files

HINT: Exceptions may be written to files [/home/dbadmin/imp_iops_1.log], [/home/dbadmin/imp_iops_1.log.1], etc

Rows Loaded

-------------

1

(1 row)vdb_oracle_perf=>

vdb_oracle_perf=> COPY public.crtc_oracle_iops_in_last_1_hour FROM '/home/dbadmin/crtc_oracle_iops_in_last_1_hour.csv' EXCEPTIONS '/home/dbadmin/imp_iops_2.log' DELIMITER AS ',';

NOTICE 7850: In a multi-threaded load, rejected record data may be written to additional files

HINT: Exceptions may be written to files [/home/dbadmin/imp_iops_2.log], [/home/dbadmin/imp_iops_2.log.1], etc

Rows Loaded

-------------

61

(1 row)

vdb_oracle_perf=> 用Tableau可視化Vertica數(shù)據(jù)庫的表

- 按Function Name分類的最近1分鐘的IO MBPS

- 按Function Name分類的最近1小時(shí)的IO MBPS

- 按Function Name分類的最近1分鐘的IOPS

- 按Function Name分類的最近1小時(shí)的IOPS

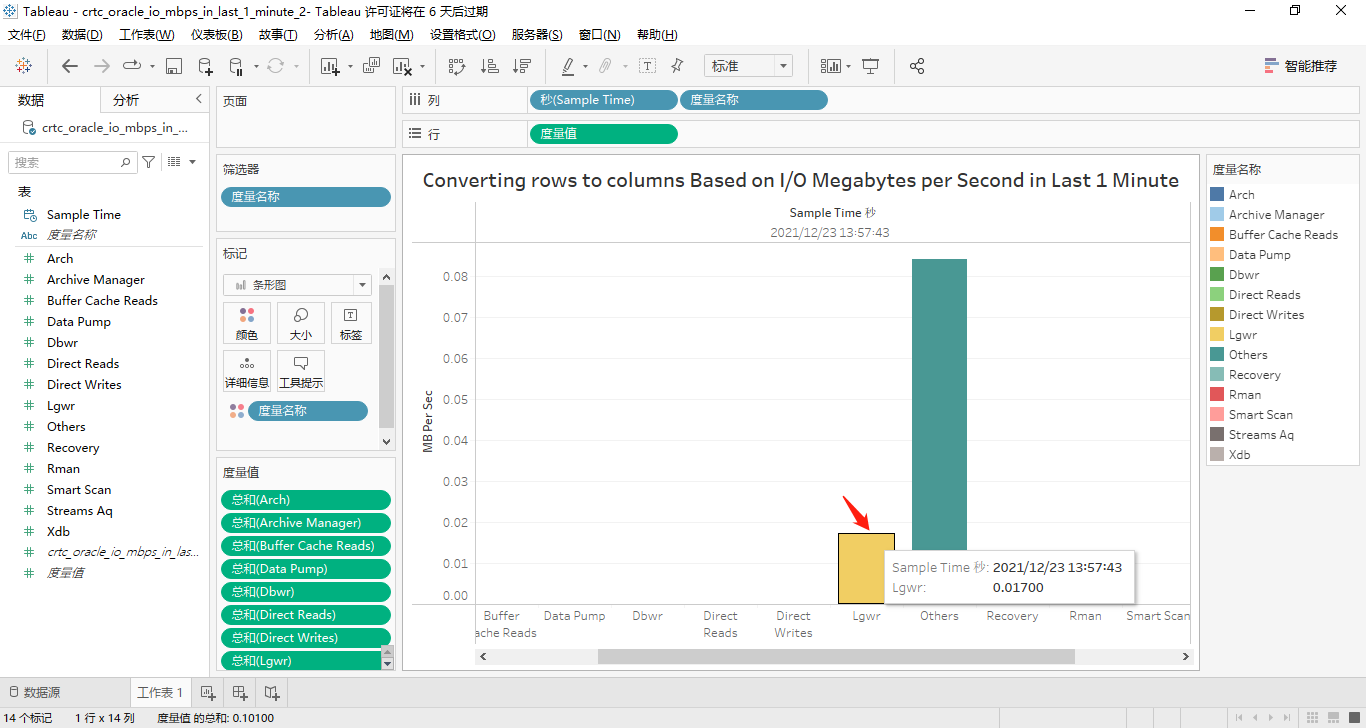

按Function Name分類的最近1分鐘的IO MBPS

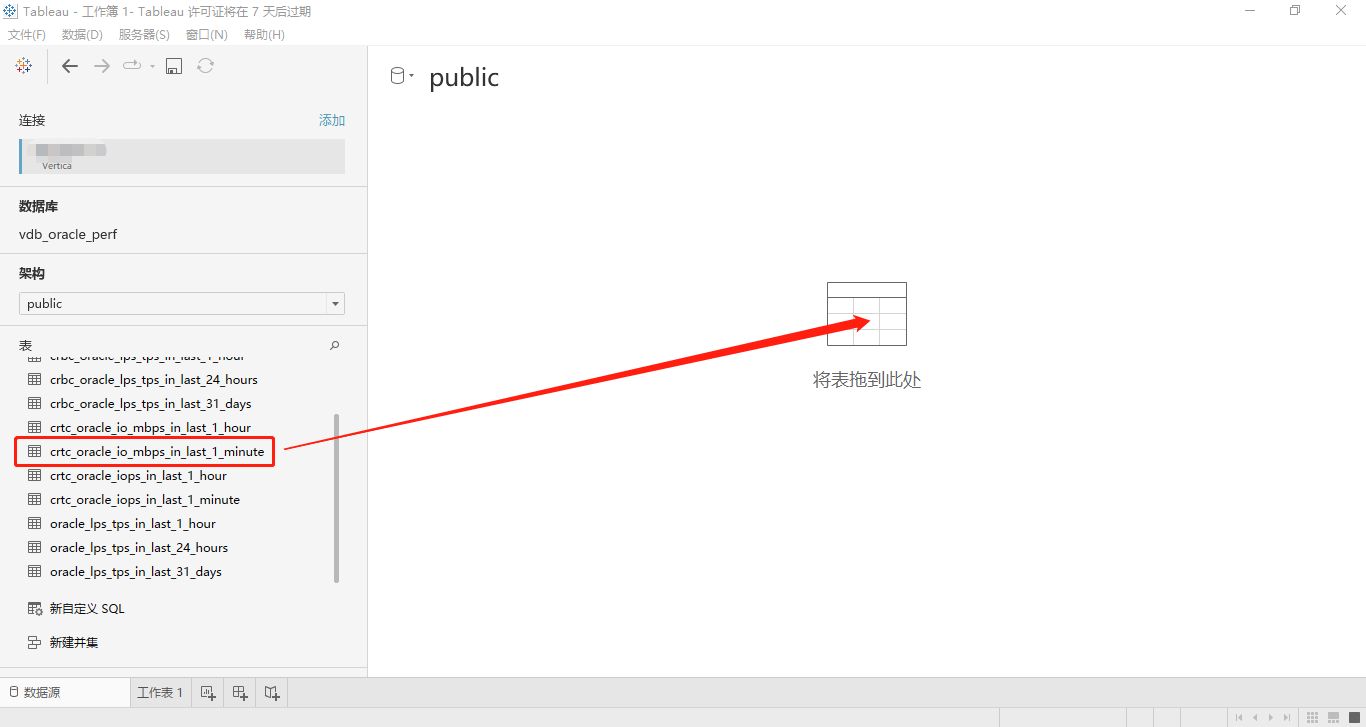

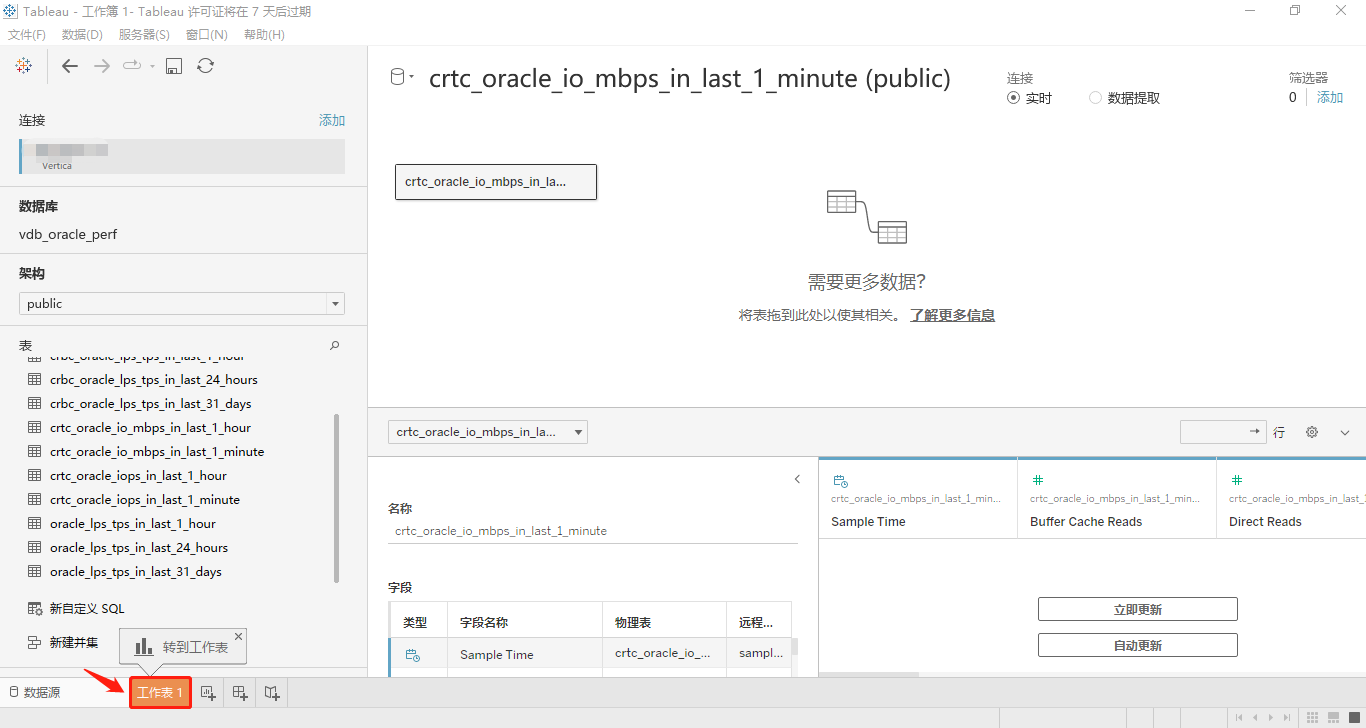

打開Tableau Desktop工具,然后連接到Vertica數(shù)據(jù)庫,選擇schema為public,然后將表crtc_oracle_io_mbps_in_last_1_minute拖動(dòng)到指定位置,點(diǎn)擊底部的工作表,進(jìn)入工作表編輯區(qū)。詳見下面兩個(gè)屏幕截圖:

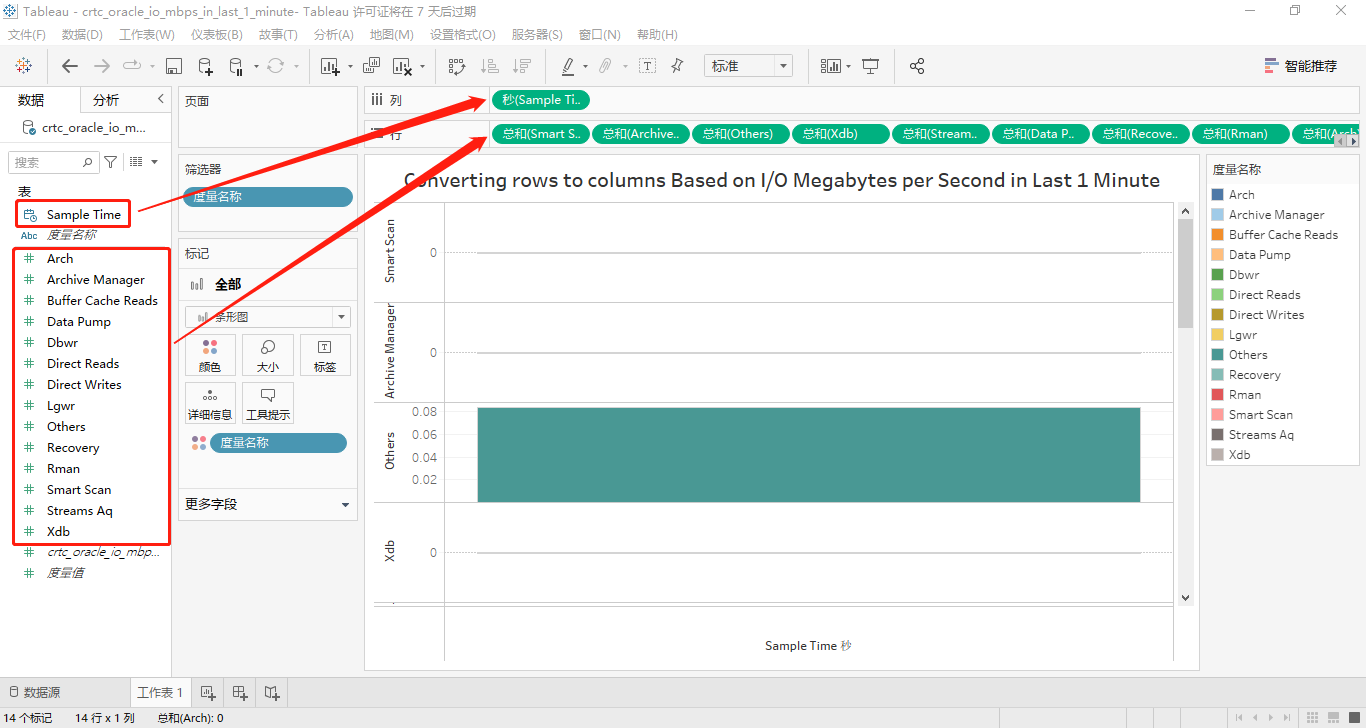

接著,將工作區(qū)中左側(cè)“數(shù)據(jù)”標(biāo)簽卡內(nèi)“表”的度量名稱Sample Time用鼠標(biāo)拖到位于工作區(qū)上方標(biāo)簽名為“列”的右側(cè)“標(biāo)簽框”中,同樣的方法,將“表”的14個(gè)度量值分別拖到位于工作區(qū)上方標(biāo)簽名為“行”的右側(cè)“標(biāo)簽框”中,屏幕截圖如下所示:

然后將標(biāo)簽名為“行”內(nèi)的其余13個(gè)度量依次用鼠標(biāo)拖動(dòng)到工作區(qū)中部的圖表縱坐標(biāo)軸名稱為“Smart Scan”的區(qū)域,也就是將這14個(gè)度量都合并到一個(gè)縱坐標(biāo)軸上,順便修改圖表的名稱和縱坐標(biāo)軸名稱,最終的效果如圖所示:

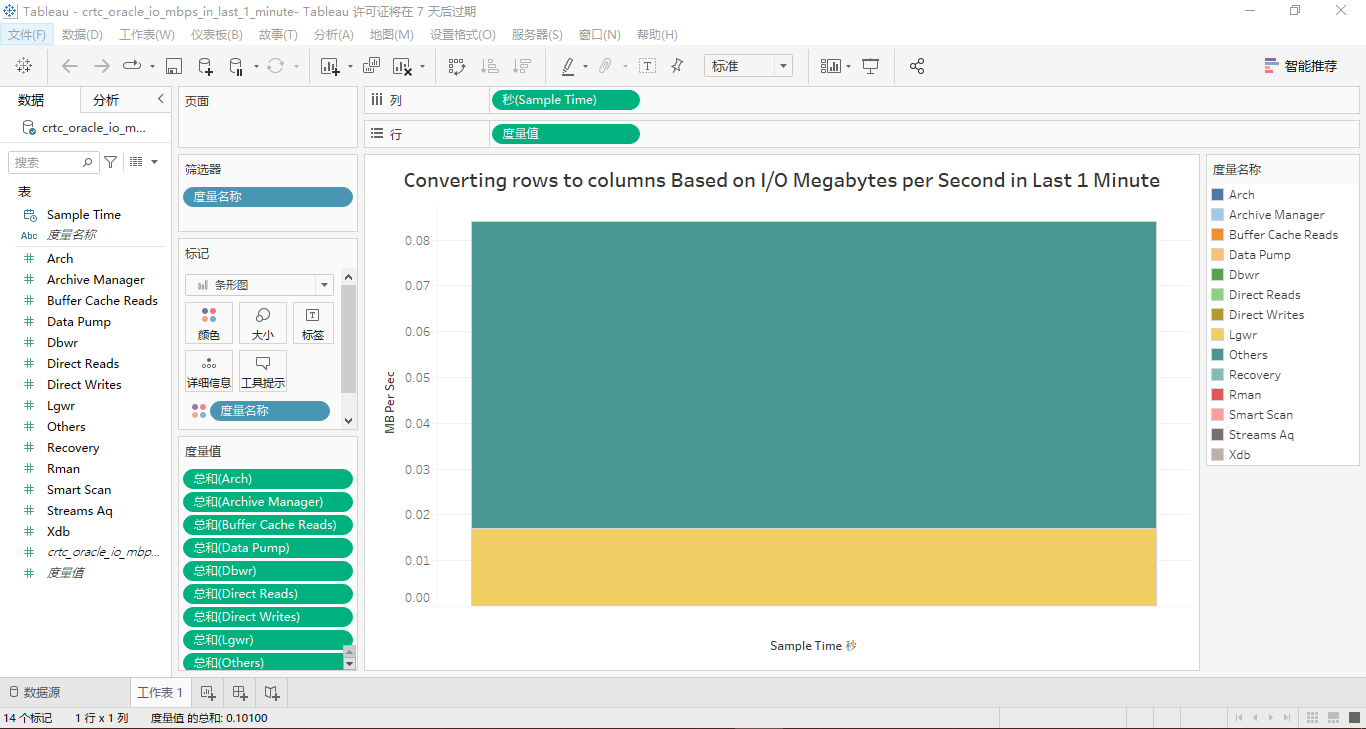

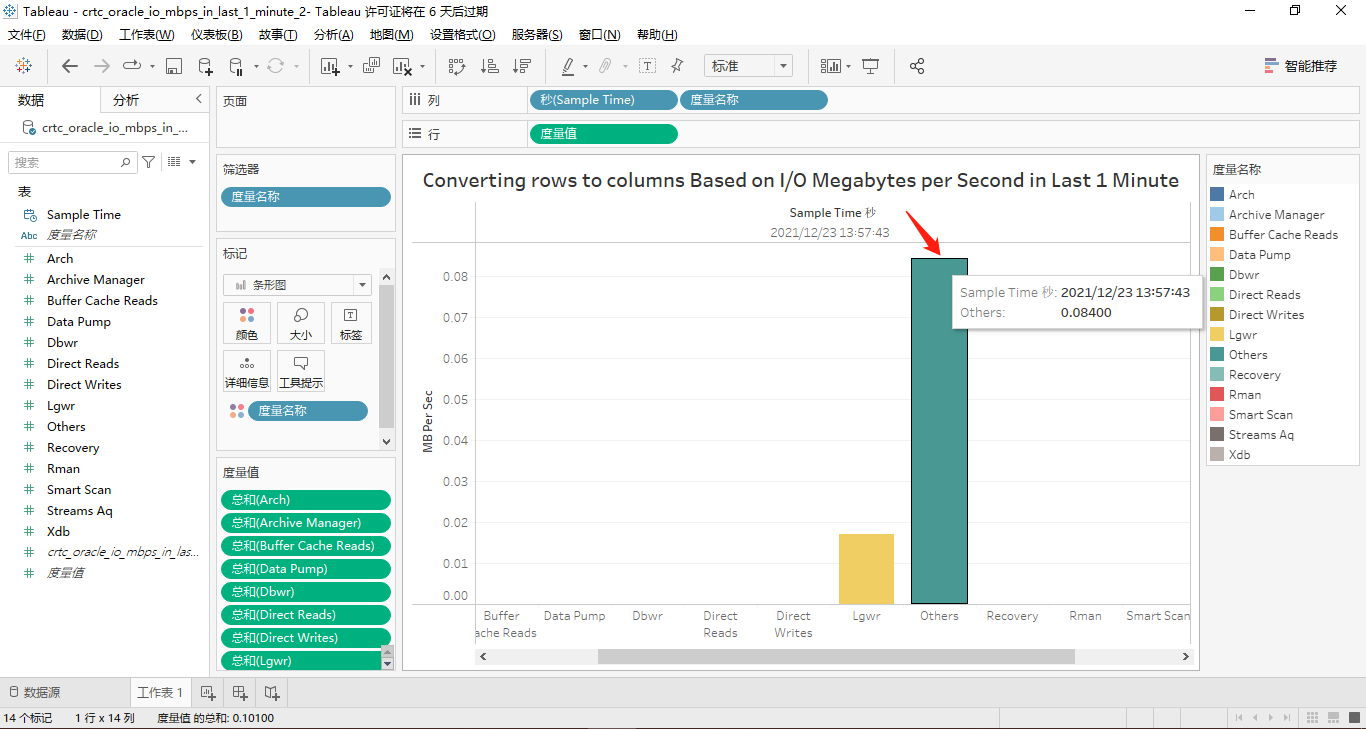

因?yàn)槊總€(gè)度量在最近1分鐘的數(shù)據(jù)只有一個(gè)值顯示,顯然,所有度量在縱坐標(biāo)軸上顯示的話,這個(gè)柱狀條形圖看起來很臃腫!因此,將這個(gè)14個(gè)度量換到橫坐標(biāo)軸上,詳見下面的兩個(gè)屏幕截圖:

正如我們所看到的,只有Lgwr和Others這兩個(gè)度量有取值。

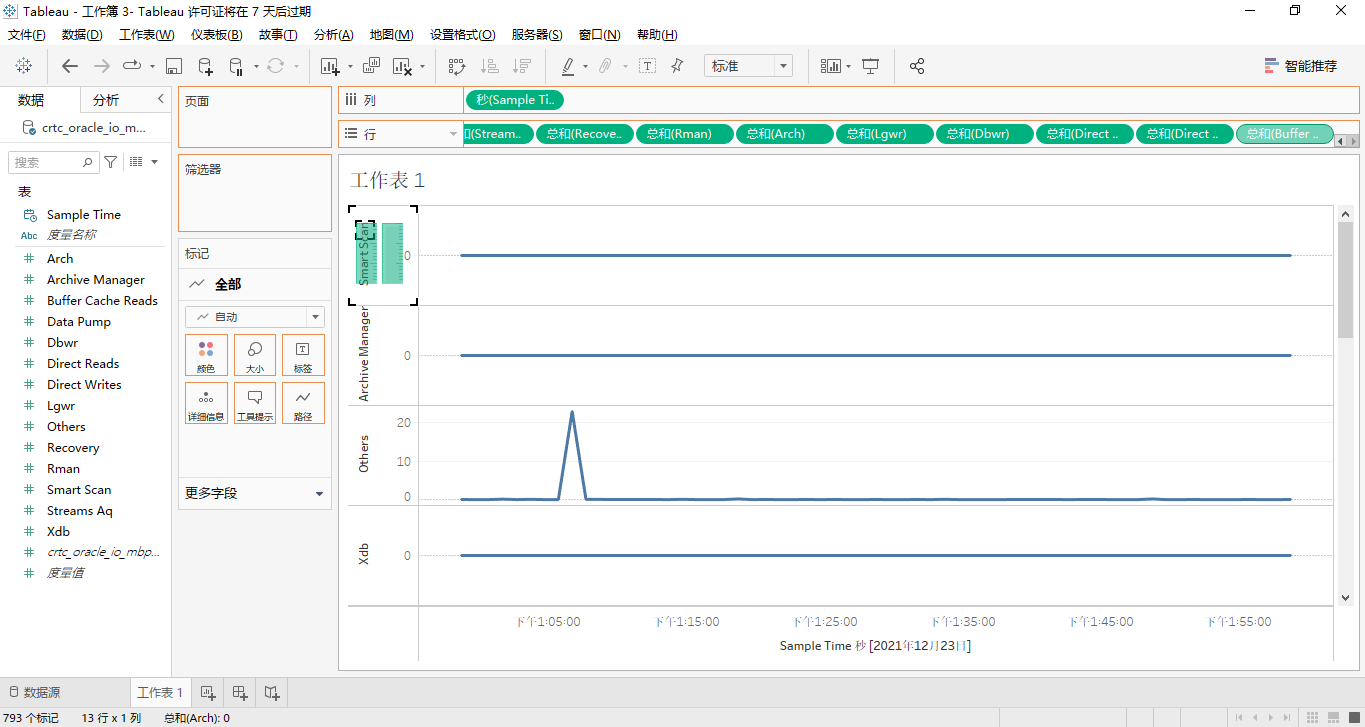

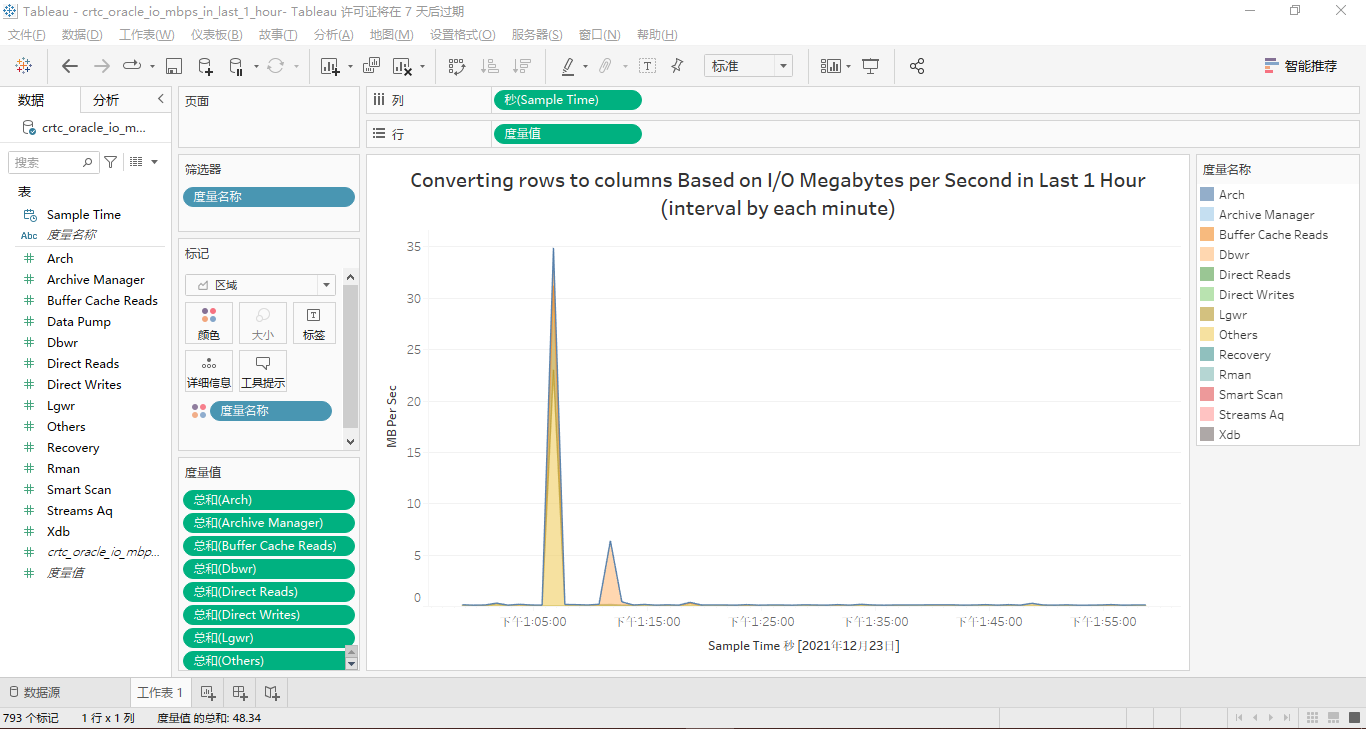

按Function Name分類的最近1小時(shí)的IO MBPS

因?yàn)樯弦画h(huán)節(jié)我們已經(jīng)非常詳細(xì)地說明了在Tablesau Desktop中可視化表crtc_oracle_io_mbps_in_last_1_minute的每一步驟,所以在這里,我們進(jìn)行快速地操作,見如下屏幕截圖。

最終,按Function Name分類的最近1小時(shí)的IO MBPS的面積堆疊圖是這樣的:

按Function Name分類的最近1分鐘的IOPS

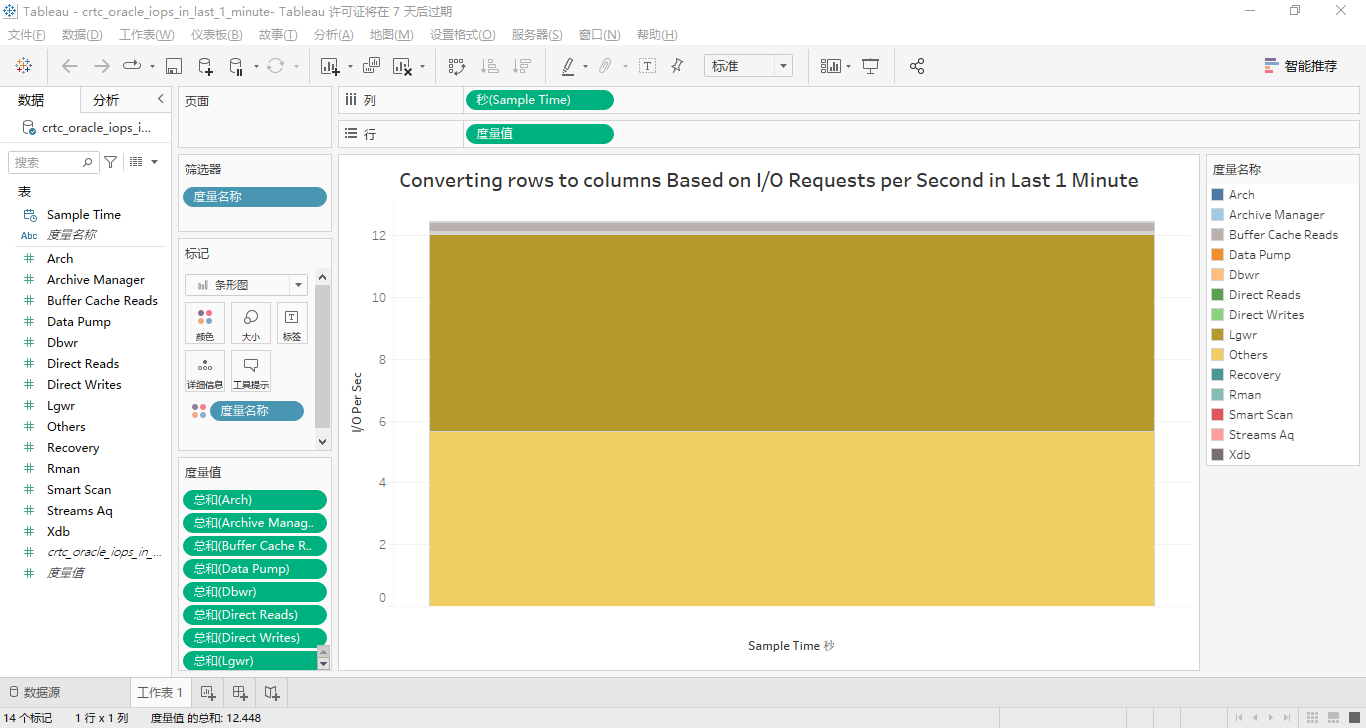

這14個(gè)度量均在縱坐標(biāo)軸上顯示的條形柱狀圖為:

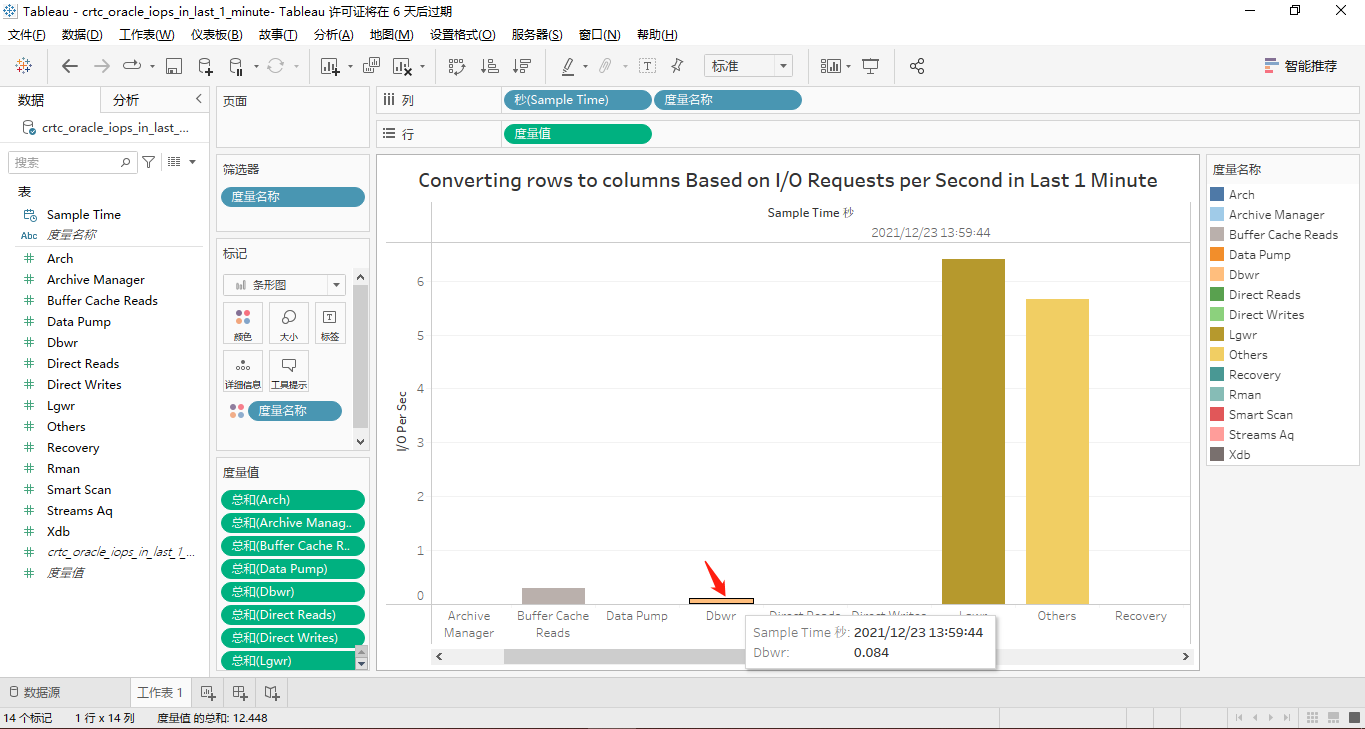

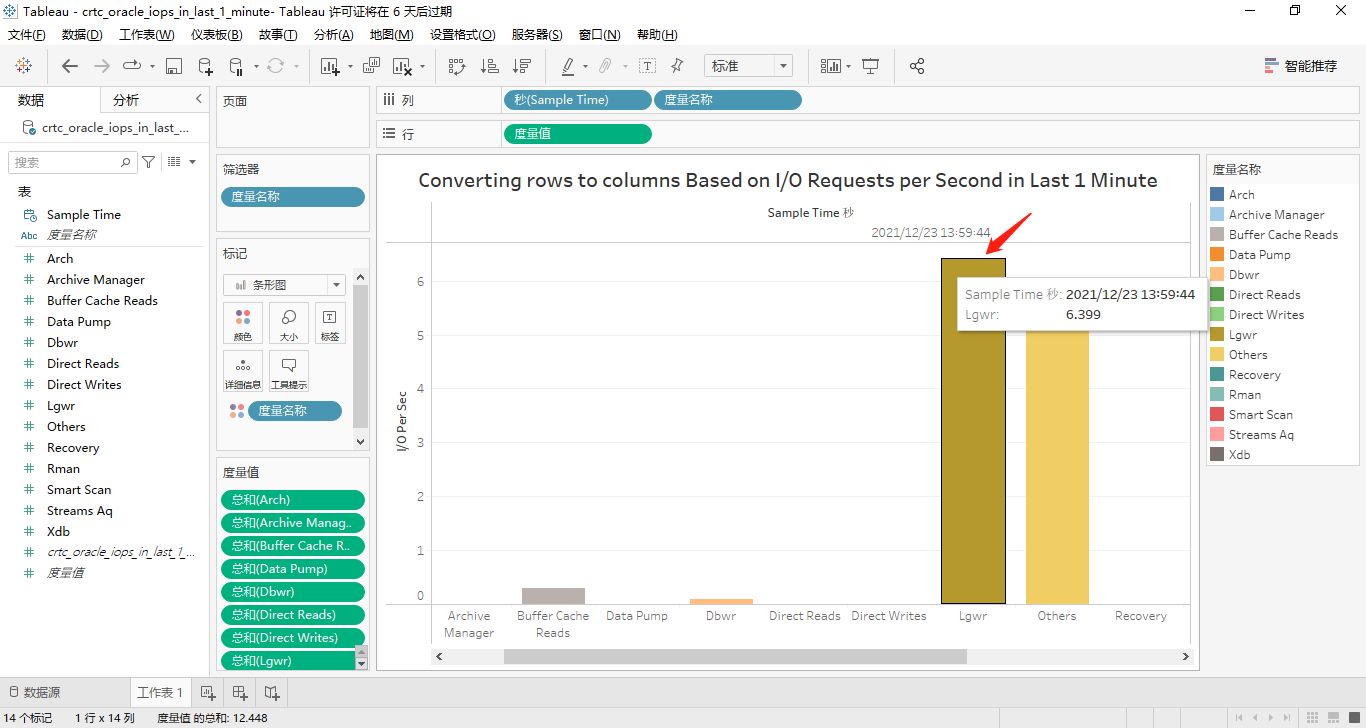

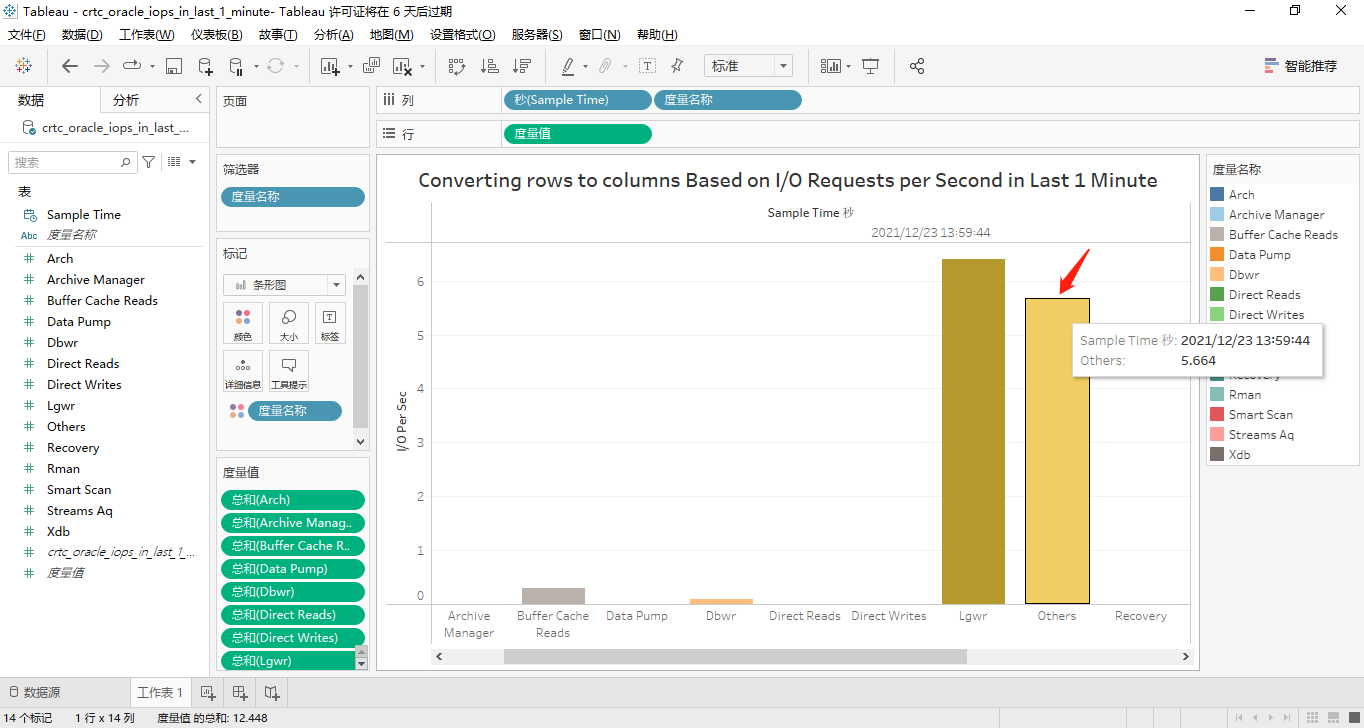

接著,我們將那14個(gè)度量都轉(zhuǎn)換到橫坐標(biāo)軸上顯示。其中,有取值的4個(gè)度量對(duì)應(yīng)的屏幕截圖分別如下所示:

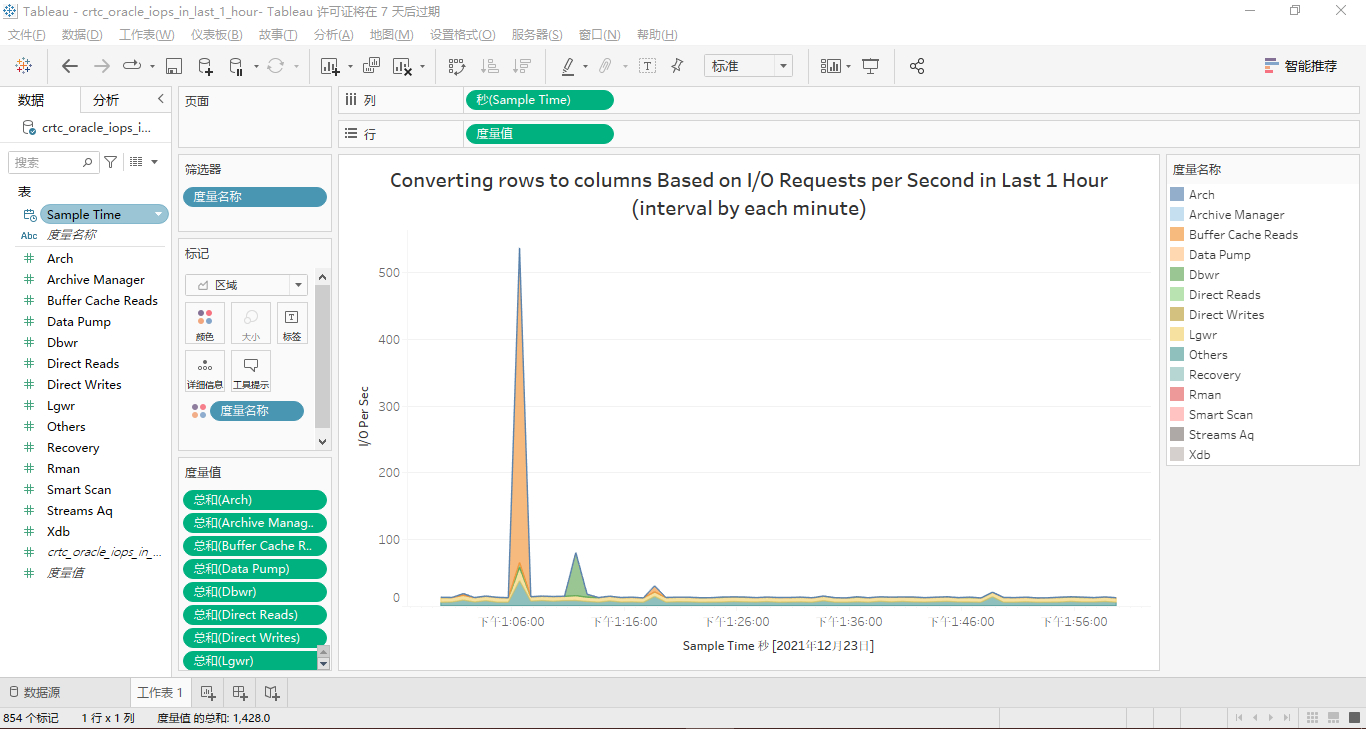

按Function Name分類的最近1小時(shí)的IOPS

最近1小時(shí)的IOPS的面積堆疊圖設(shè)置相對(duì)簡(jiǎn)單,最終效果見下圖:

以上就是這篇文章用Tableau可視化Vertica數(shù)據(jù)庫之“MBPS&IOPS By Function”篇的所有內(nèi)容。另外,您也可以從acquire_io_mbps_by_function.sql和acquire_iops_by_function.sql查看我前面提到的所有SQL源代碼。如果您有好的建議或意見,歡迎在文章底部的評(píng)論區(qū)提出,我將逐條閱讀,并在最快時(shí)間內(nèi)回復(fù)。