1. Preface

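In MogDB, scale-out and scale-in apply to standby nodes. Scale-out supports at most one primary with eight standbys, and scale-in can go all the way down to a single primary. Both operations are fairly convenient because MogDB ships two tools for them, gs_expansion and gs_dropnode. My environment is as follows: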

| | NODE1 (primary) | NODE2 (standby) |
|---|---|---|
| Hostname | pkt_mogdb1 | pkt_mogdb2 |
| IP | 10.80.9.249 | 10.80.9.250 |
| Disk | 20G | 20G |
| Memory | 2G | 2G |

2. Scale-out with gs_expansion

The architecture after scale-out looks like this:

| | NODE1 (primary) | NODE2 (standby) | NODE3 (new standby) |
|---|---|---|---|
| Hostname | pkt_mogdb1 | pkt_mogdb2 | pkt_mogdb3 |
| IP | 10.80.9.249 | 10.80.9.250 | 10.80.9.251 |
| Disk | 20G | 20G | 20G |
| Memory | 2G | 2G | 2G |

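I. Scale-out

1. Prepare the server

Following the earlier notes on installing a standby, configure the IP and hostname, install the dependency packages, set up chronyd time synchronization, disable the firewall, and so on.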

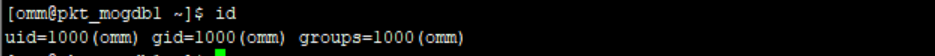

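2. Create the user and group

The server to be added needs the same user and group as the primary. Log in to the primary and check the user and group IDs of omm.

Then create the user and group on the server to be added:

    groupadd omm -g 1000
    useradd omm -g 1000 -u 1000
    # set the password
    passwd omm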

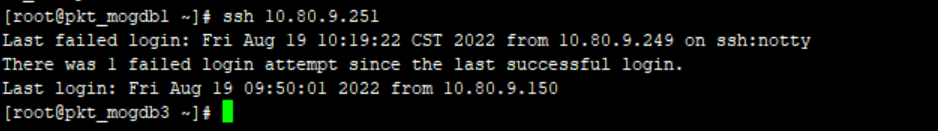
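One way to confirm that the IDs really match is to print them in the same form on both machines and compare the two lines. This is a minimal sketch; the helper name `ids_of` is made up for illustration and is not part of MogDB.

```shell
#!/bin/sh
# ids_of USER: print "uid:gid" for USER (hypothetical helper, not a MogDB tool).
ids_of() {
    printf '%s:%s\n' "$(id -u "$1")" "$(id -g "$1")"
}

# Run this on the primary and on the server to be added, then compare the output.
# Falls back to the current user so the sketch also runs where omm does not exist yet.
target_user=omm
id "$target_user" >/dev/null 2>&1 || target_user=$(id -un)
ids_of "$target_user"
```

If the two machines print different values, recreate the user on the new server with the `-u` and `-g` values taken from the primary.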

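3. Configure SSH trust from the primary to the new standby

Files are copied to the new standby during scale-out, so SSH trust is required, and it has to be configured for both the root and omm users.

    # 1. On the primary, check whether a key already exists
    ls ~/.ssh/id_rsa.pub
    # 2. If there is no key, generate a new key pair
    ssh-keygen -t rsa
    # 3. Copy the public key file to the server to be added
    scp -r ~/.ssh/id_rsa.pub root@10.80.9.251:~/.ssh/
    # 4. On the target machine, append the primary's public key to authorized_keys
    cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
    # 5. From the primary, verify the SSH trust
    ssh 10.80.9.251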

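If no password prompt appears, the configuration succeeded.

Repeat the same steps as the omm user.

If passwordless login still fails, the permissions on authorized_keys may be the cause: with the default StrictModes setting, sshd ignores the file when it is writable by group or others. I used chmod 700 authorized_keys; the more common setting is 700 on ~/.ssh and 600 on authorized_keys.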
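The permission fix can be wrapped in a small helper so it is applied the same way for root and omm. This is only a sketch: `fix_ssh_perms` is a made-up name, and the 700/600 values are the conventional OpenSSH settings rather than something MogDB prescribes.

```shell
#!/bin/sh
# fix_ssh_perms DIR: tighten permissions on an .ssh directory and its authorized_keys.
fix_ssh_perms() {
    chmod 700 "$1" || return 1
    if [ -f "$1/authorized_keys" ]; then
        chmod 600 "$1/authorized_keys"
    fi
}

# Demo on a scratch directory; on a real host pass "$HOME/.ssh" instead.
demo_dir=$(mktemp -d)
touch "$demo_dir/authorized_keys"
chmod 777 "$demo_dir" "$demo_dir/authorized_keys"
fix_ssh_perms "$demo_dir"
ls -ld "$demo_dir" "$demo_dir/authorized_keys"
```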

Note: SSH trust from node1 to both node2 and node3 must be configured.
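Checking trust by hand is easy to get wrong because an interactive ssh will happily fall back to a password prompt. A sketch of a non-interactive check, assuming the standby IPs from the environment table; `trust_check_cmd` is a hypothetical helper, while `BatchMode` and `ConnectTimeout` are standard OpenSSH client options.

```shell
#!/bin/sh
# trust_check_cmd HOST: build a non-interactive ssh test command for HOST.
# BatchMode=yes makes ssh fail instead of prompting for a password.
trust_check_cmd() {
    printf 'ssh -o BatchMode=yes -o ConnectTimeout=5 %s true\n' "$1"
}

# Standby IPs taken from the environment table.
hosts="10.80.9.250 10.80.9.251"

# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to run them.
for host in $hosts; do
    cmd=$(trust_check_cmd "$host")
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$cmd"
    elif $cmd; then
        echo "$host: trust OK"
    else
        echo "$host: trust FAILED"
    fi
done
```

Run it once as root and once as omm on node1, since both users need working trust.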

4. Edit the configuration file on the primary server to add the new machine

    [omm@pkt_mogdb1 software]$ cat cluster3.xml
    <?xml version="1.0" encoding="UTF-8"?>
    <ROOT>
      <!-- Overall MogDB cluster information -->
      <CLUSTER>
        <PARAM name="clusterName" value="mogdb_cluster1" />
        <PARAM name="nodeNames" value="pkt_mogdb1,pkt_mogdb2,pkt_mogdb3" />
        <PARAM name="gaussdbToolPath" value="/opt/mogdb/data/tool" />
        <PARAM name="corePath" value="/opt/mogdb/corefile"/>
        <PARAM name="gaussdbAppPath" value="/opt/mogdb/data/app" />
        <PARAM name="gaussdbLogPath" value="/opt/mogdb/data/log" />
        <PARAM name="tmpMppdbPath" value="/opt/mogdb/data/tmp"/>
        <PARAM name="backIp1s" value="10.80.9.249,10.80.9.250,10.80.9.251"/>
      </CLUSTER>
      <!-- Node deployment information for each server -->
      <DEVICELIST>
        <!-- Deployment information for node 1 -->
        <DEVICE sn="pkt_mogdb1">
          <!-- Hostname of node 1 -->
          <PARAM name="name" value="pkt_mogdb1"/>
          <!-- AZ of node 1 and its AZ priority -->
          <PARAM name="azName" value="AZ1"/>
          <PARAM name="azPriority" value="1"/>
          <!-- IP of node 1; if the server has only one usable NIC, set backIp1 and sshIp1 to the same IP -->
          <PARAM name="backIp1" value="10.80.9.249"/>
          <PARAM name="sshIp1" value="10.80.9.249"/>
          <!--dn-->
          <PARAM name="dataNum" value="1"/>
          <PARAM name="dataNode1" value="/opt/mogdb/data/data,pkt_mogdb2,/opt/mogdb/data/data,pkt_mogdb3,/opt/mogdb/data/data"/>
        </DEVICE>
        <!-- Deployment information for node 2; the value of "name" is the hostname -->
        <DEVICE sn="pkt_mogdb2">
          <!-- Hostname of node 2 -->
          <PARAM name="name" value="pkt_mogdb2"/>
          <!-- AZ of node 2 and its AZ priority -->
          <PARAM name="azName" value="AZ1"/>
          <PARAM name="azPriority" value="1"/>
          <!-- IP of node 2; if the server has only one usable NIC, set backIp1 and sshIp1 to the same IP -->
          <PARAM name="backIp1" value="10.80.9.250"/>
          <PARAM name="sshIp1" value="10.80.9.250"/>
        </DEVICE>
        <DEVICE sn="pkt_mogdb3">
          <!-- Hostname of node 3 -->
          <PARAM name="name" value="pkt_mogdb3"/>
          <!-- AZ of node 3 and its AZ priority -->
          <PARAM name="azName" value="AZ1"/>
          <PARAM name="azPriority" value="1"/>
          <!-- IP of node 3; if the server has only one usable NIC, set backIp1 and sshIp1 to the same IP -->
          <PARAM name="backIp1" value="10.80.9.251"/>
          <PARAM name="sshIp1" value="10.80.9.251"/>
        </DEVICE>
      </DEVICELIST>
    </ROOT>
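When a third node is added by hand, it is easy to update nodeNames and forget backIp1s, or the other way round. The following sketch counts the comma-separated entries of both parameters and compares them. `csv_count` is a hypothetical helper, and the check is a sanity test of my own choosing, not something gs_expansion itself documents.

```shell
#!/bin/sh
# csv_count FILE NAME: count comma-separated values in <PARAM name="NAME" value="..."/>.
csv_count() {
    sed -n "s/.*<PARAM name=\"$2\" value=\"\([^\"]*\)\".*/\1/p" "$1" |
        head -n 1 |
        awk -F',' '{ print NF }'
}

# Scratch copy of the two relevant lines; on a real host point this at cluster3.xml.
xml=$(mktemp)
cat > "$xml" <<'EOF'
<PARAM name="nodeNames" value="pkt_mogdb1,pkt_mogdb2,pkt_mogdb3" />
<PARAM name="backIp1s" value="10.80.9.249,10.80.9.250,10.80.9.251"/>
EOF

names=$(csv_count "$xml" nodeNames)
ips=$(csv_count "$xml" backIp1s)
if [ "$names" = "$ips" ]; then
    echo "OK: $names node names, $ips IPs"
else
    echo "MISMATCH: $names node names, $ips IPs"
fi
```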

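5. A short digression (feel free to skip)

Pitfall one: my primary/standby environment was installed with ptk, so the SSH trust from the primary to the standby had been generated by ptk. While configuring trust for the standby to be added (node3), I changed the omm password on node2, after which gs_om started asking for a password. I therefore redid the trust from node1 to node2. When redoing it, pay attention to the ~/.ssh/config file: it has to be updated to the new key name.

Pitfall two: for no apparent reason my primary would no longer start, and the logs showed no clear cause, yet the standby started fine. The startup output was: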

    [omm@pkt_mogdb1 data]$ gs_om -t start
    Starting cluster.
    =========================================
    [SUCCESS] pkt_mogdb2
    2022-08-19 12:55:17.312 [unknown] [unknown] localhost 47526557277696 0[0:0#0] 0 [BACKEND] WARNING: could not create any HA TCP/IP sockets
    =========================================
    [GAUSS-53600]: Can not start the database, the cmd is . /home/omm/.bashrc; python3 '/opt/mogdb/data/tool/script/local/StartInstance.py' -U omm -R /opt/mogdb/data/app -t 300 --security-mode=off, Error:
    [FAILURE] pkt_mogdb1:
    [GAUSS-51607] : Failed to start instance. Error: Please check the gs_ctl log for failure details.
    [2022-08-19 12:55:13.709][16249][][gs_ctl]: gs_ctl started,datadir is /opt/mogdb/data/data
    [2022-08-19 12:55:13.808][16249][][gs_ctl]: waiting for server to start...
    .0 LOG: [Alarm Module]can not read GAUSS_WARNING_TYPE env.
    0 LOG: [Alarm Module]Host Name: pkt_mogdb1
    0 LOG: [Alarm Module]Host IP: 10.80.9.249
    0 LOG: [Alarm Module]Cluster Name: mogdb_cluster1
    0 WARNING: failed to open feature control file, please check whether it exists: FileName=gaussdb.version, Errno=2, Errmessage=No such file or directory.
    0 WARNING: failed to parse feature control file: gaussdb.version.
    0 WARNING: Failed to load the product control file, so gaussdb cannot distinguish product version.
    The core dump path from /proc/sys/kernel/core_pattern is an invalid directory:|/usr/libexec/
    2022-08-19 12:55:14.017 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: when starting as multi_standby mode, we couldn't support data replicaton.
    2022-08-19 12:55:14.023 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: [Alarm Module]can not read GAUSS_WARNING_TYPE env.
    2022-08-19 12:55:14.023 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: [Alarm Module]Host Name: pkt_mogdb1
    2022-08-19 12:55:14.023 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: [Alarm Module]Host IP: 10.80.9.249
    2022-08-19 12:55:14.023 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: [Alarm Module]Cluster Name: mogdb_cluster1
    2022-08-19 12:55:14.027 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: loaded library "security_plugin"
    2022-08-19 12:55:14.028 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] WARNING: could not create any HA TCP/IP sockets
    2022-08-19 12:55:14.031 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: gstrace initializes with failure. errno = 1.
    2022-08-19 12:55:14.031 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: InitNuma numaNodeNum: 1 numa_distribute_mode: none inheritThreadPool: 0.
    2022-08-19 12:55:14.031 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: reserved memory for backend threads is: 220 MB
    2022-08-19 12:55:14.031 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: reserved memory for WAL buffers is: 128 MB
    2022-08-19 12:55:14.031 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: Set max backend reserve memory is: 348 MB, max dynamic memory is: 11067 MB
    2022-08-19 12:55:14.031 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: shared memory 360 Mbytes, memory context 11415 Mbytes, max process memory 12288 Mbytes
    2022-08-19 12:55:14.057 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [CACHE] LOG: set data cache size(402653184)
    2022-08-19 12:55:14.067 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [CACHE] LOG: set metadata cache size(134217728)
    2022-08-19 12:55:14.109 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [SEGMENT_PAGE] LOG: Segment-page constants: DF_MAP_SIZE: 8156, DF_MAP_BIT_CNT: 65248, DF_MAP_GROUP_EXTENTS: 4175872, IPBLOCK_SIZE: 8168, EXTENTS_PER_IPBLOCK: 1021, IPBLOCK_GROUP_SIZE: 4090, BMT_HEADER_LEVEL0_TOTAL_PAGES: 8323072, BktMapEntryNumberPerBlock: 2038, BktMapBlockNumber: 25, BktBitMaxMapCnt: 512
    2022-08-19 12:55:14.114 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: mogdb: fsync file "/opt/mogdb/data/data/gaussdb.state.temp" success
    2022-08-19 12:55:14.114 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: create gaussdb state file success: db state(STARTING_STATE), server mode(Primary), connection index(1)
    2022-08-19 12:55:14.116 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: max_safe_fds = 976, usable_fds = 1000, already_open = 14
    The core dump path from /proc/sys/kernel/core_pattern is an invalid directory:|/usr/libexec/
    2022-08-19 12:55:14.118 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: the configure file /opt/mogdb/data/app/etc/gscgroup_omm.cfg doesn't exist or the size of configure file has changed. Please create it by root user!
    2022-08-19 12:55:14.118 [unknown] [unknown] localhost 47691868622336 0[0:0#0] 0 [BACKEND] LOG: Failed to parse cgroup config file.
    .[2022-08-19 12:55:15.813][16249][][gs_ctl]: waitpid 16252 failed, exitstatus is 256, ret is 2
    [2022-08-19 12:55:15.813][16249][][gs_ctl]: stopped waiting
    [2022-08-19 12:55:15.813][16249][][gs_ctl]: could not start server

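Neither the gs_ctl log nor the om log showed an obvious error. Only the MOT-related entries in pg_log looked suspicious: the last thing I had done was test MOT tables with temporarily enlarged memory, and I had shut the machine down without stopping MogDB manually. For the scale-out test I then reduced node1's memory again, and I suspect that is what caused the problem.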
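With startup output this long, filtering it down to lines that look like problems makes the first pass quicker. A rough sketch: the sample lines are abbreviated from the output shown earlier (timestamps and thread fields dropped), and the keyword list is only a guess that should be adjusted, for example by adding MOT when chasing the suspicion described here.

```shell
#!/bin/sh
# Abbreviated sample lines; on a real host read the gs_ctl or pg_log file instead.
log=$(mktemp)
cat > "$log" <<'EOF'
[BACKEND] LOG: loaded library "security_plugin"
[BACKEND] WARNING: could not create any HA TCP/IP sockets
[BACKEND] LOG: Failed to parse cgroup config file.
[gs_ctl]: could not start server
EOF

# Keep only lines that look like problems; the keyword list is an assumption.
suspects=$(grep -E -i 'WARNING|ERROR|FATAL|PANIC|failed|could not' "$log")
printf '%s\n' "$suspects"
```

Note that several matches in this particular log are noise (the cgroup and HA socket messages also appear on the standby that started fine), so the filter narrows the search rather than pointing at the cause.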

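My fix was as follows:

Step 1. Comment out the mot.conf configuration file.

Step 2. Temporarily start node2 as primary:

    gs_ctl restart -D /opt/mogdb/data/data/ -M primary

Step 3. Rebuild the old primary as a standby:

    gs_ctl build -D /opt/mogdb/data/data -b full

Step 4. Restart the cluster. Because I did not run gs_om -t refreshconf to update the primary/standby information, the cluster still comes up with node1 as primary.

    gs_om -t restart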

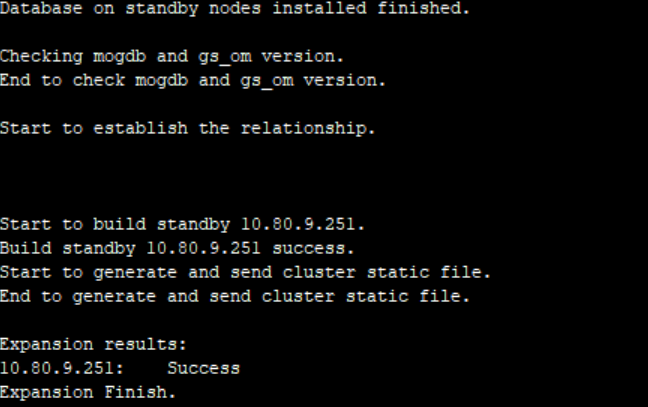

6. Start the scale-out; it must be run as root

    # root has no MogDB environment variables set, so load omm's environment first
    source /home/omm/.bashrc
    gs_expansion -U omm -G omm -X /opt/mogdb/software/cluster3.xml -h 10.80.9.251

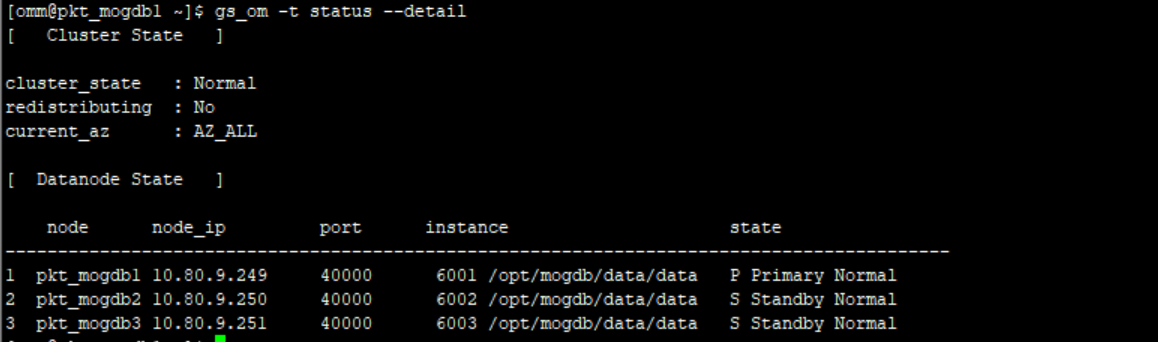

7. Check the cluster status after scale-out
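A minimal sketch of one way to do this check, assuming gs_om is on the PATH (it is once omm's environment is loaded). `gs_om -t status --detail` is the standard status query; the wrapper function `show_cluster_status` is a made-up name. After a successful scale-out, pkt_mogdb3 should be listed alongside the two existing nodes.

```shell
#!/bin/sh
# show_cluster_status: print the detailed cluster status if gs_om is available.
show_cluster_status() {
    if command -v gs_om >/dev/null 2>&1; then
        gs_om -t status --detail
    else
        echo "gs_om not found in PATH; run as omm or source /home/omm/.bashrc first" >&2
        return 127
    fi
}

show_cluster_status || echo "status check skipped"
```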

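8. Problems I ran into during scale-out

1) [GAUSS-35706] Fail to preinstall on all new hosts.

This happened because the preinstall command could not run on the new standby: python3 was not installed there. Installing python3 fixed it.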
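A quick pre-flight check on the new standby would catch this before gs_expansion is started. In this sketch `missing_tools` is a hypothetical helper; python3 comes from the error described here, while ssh and scp are assumed additions to the list.

```shell
#!/bin/sh
# missing_tools TOOL...: print each tool that is not on PATH (hypothetical helper).
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

# python3 is the tool from the error; ssh and scp are assumed additions.
missing=$(missing_tools python3 ssh scp)
if [ -n "$missing" ]; then
    echo "missing on this host: $missing"
else
    echo "all required tools found"
fi
```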

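2) [GAUSS-51100] : Failed to verify SSH trust on these nodes: pkt_mogdb1, pkt_mogdb2, 10.80.9.249, 10.80.9.250 by root.

This happened because SSH trust from the primary to the other standbys was not configured. Checking and configuring trust host by host resolves it. The new node name also has to be added to /etc/hosts on the primary.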
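The /etc/hosts part is easy to verify mechanically. The sketch below lists node names that have no entry in a hosts file; `missing_hosts` is a made-up helper and the demo uses a scratch file that deliberately lacks the new node.

```shell
#!/bin/sh
# missing_hosts FILE NAME...: print every NAME that has no entry in the hosts FILE.
missing_hosts() {
    hosts_file=$1
    shift
    for name in "$@"; do
        # Skip comment lines, then look for NAME in any column after the IP address.
        awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit (found ? 0 : 1) }
        ' "$hosts_file" || echo "$name"
    done
}

# Scratch hosts file for the demo; on the primary pass /etc/hosts instead.
demo_hosts=$(mktemp)
printf '%s\n' '10.80.9.249 pkt_mogdb1' '10.80.9.250 pkt_mogdb2' > "$demo_hosts"
missing_hosts "$demo_hosts" pkt_mogdb1 pkt_mogdb2 pkt_mogdb3
```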

3) [GAUSS-50021] : Failed to query wal_keep_segments parameter.

This happened because the dataNode1 parameter in my XML configuration file was set incorrectly, so gs_expansion could not read postgresql.conf.
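A simple shape check on the dataNode1 value can catch the most obvious mistakes. The layout assumed here is inferred from the cluster3.xml shown earlier: the primary's data directory, followed by one hostname and data directory pair per standby, which always gives an odd number of comma-separated fields. `datanode_shape` is a hypothetical helper, and it does not verify that the paths or hostnames are themselves correct.

```shell
#!/bin/sh
# datanode_shape VALUE: report how many standbys a dataNode1 value describes.
# Assumed layout: primary_dir[,standby_host,standby_dir]...
datanode_shape() {
    fields=$(printf '%s\n' "$1" | awk -F',' '{ print NF }')
    if [ $((fields % 2)) -eq 1 ]; then
        echo "ok: $(( (fields - 1) / 2 )) standby(s)"
    else
        echo "bad: $fields fields, expected an odd number"
    fi
}

datanode_shape "/opt/mogdb/data/data,pkt_mogdb2,/opt/mogdb/data/data,pkt_mogdb3,/opt/mogdb/data/data"
```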

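4) Build standby 10.80.9.251 failed.

[GAUSS-35706] Fail to build on all new hosts.

I never found the cause of this one. It only succeeded after I switched to a fresh server and installed MogDB on it beforehand. I hit quite a few problems during scale-out, so I recommend first installing a single-instance MogDB on the new standby with ptk and then scaling out. That way the environment variables and similar settings are already in place, and you only need to append the -L option:

    gs_expansion -U omm -G omm -X /opt/mogdb/software/cluster3.xml -h 10.80.9.251 -L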

3. Scale-in

Scale-in is much simpler. It must be run as the omm user on the primary node:

    gs_dropnode -U omm -G omm -h 10.80.9.251

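4. Closing notes

These notes covered a test of scale-out and scale-in. For scale-out, I recommend pre-installing MogDB with ptk on the standby to be added and only then expanding, which avoids many problems caused by carelessness. Also keep an eye on the pg_hba.conf authentication file, since it can cause problems as well.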