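1. Test environment

This article tests a three-node high-availability environment running MogDB 3.0.1, installed with PTK and managed by the CM cluster management software. It covers 12 high-availability scenarios built around common failures of database instances, the network, and hosts. The method, process, and conclusion of each test are recorded in some detail for readers' reference.

1.1 Database hardware and software environment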

| Hostname | IP | AZ | DN | Default role | DB version | CM version | Operating system |
|---|---|---|---|---|---|---|---|
| mogdbv3m1 | 192.168.5.30 | az1 | 6001 | Primary | gsql ((MogDB 3.0.1 build 1a363ea9) compiled at 2022-08-05 17:31:04 commit 0 last mr ) | cm_ctl ( build d1f619ad) compiled at 2022-08-05 17:30:34 Release | CentOS Linux release 7.9.2009 (Core) |
| mogdbv3s1 | 192.168.5.31 | az1 | 6002 | Sync standby | gsql ((MogDB 3.0.1 build 1a363ea9) compiled at 2022-08-05 17:31:04 commit 0 last mr ) | cm_ctl ( build d1f619ad) compiled at 2022-08-05 17:30:34 Release | CentOS Linux release 7.9.2009 (Core) |
| mogdbv3s2 | 192.168.5.32 | az1 | 6003 | Potential standby | gsql ((MogDB 3.0.1 build 1a363ea9) compiled at 2022-08-05 17:31:04 commit 0 last mr ) | cm_ctl ( build d1f619ad) compiled at 2022-08-05 17:30:34 Release | CentOS Linux release 7.9.2009 (Core) |

1.2 Test software

Load-test host

mogdb-single-v3b2:192.168.5.20

Load simulation tool, used to simulate DML transactions during the stress test

benchmarksql-5.0-x86, with the following parameters:

db=postgres

driver=org.postgresql.Driver

#conn=jdbc:postgresql://1:26000/tpcc_db?prepareThreshold=1&batchMode=on&fetchsize=10&loggerLevel=off

conn=jdbc:postgresql://192.168.5.30:26000,192.168.5.31:26000,192.168.5.32:26000/tpcc_db?prepareThreshold=1&batchMode=on&fetchsize=10&loggerLevel=off&targetServerType=master

user=tpcc_usr

password=tpcc@123

warehouses=3

terminals=2

runMins=50

runTxnsPerTerminal=0

loadWorkers=50

limitTxnsPerMin=0

terminalWarehouseFixed=false

newOrderWeight=45

paymentWeight=43

orderStatusWeight=4

deliveryWeight=4

stockLevelWeight=4
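The `conn` setting above lists all three nodes in a single URL and adds `targetServerType=master`, so the driver is expected to try the hosts in turn and keep only a connection to the current primary. As a minimal sketch of how such a URL is put together, the helper below assembles one from its parts; the class and method names (`FailoverUrl`, `build`) are illustrative assumptions and are not part of BenchmarkSQL or the JDBC driver.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper: assembles a multi-host JDBC URL like the one in the properties file.
class FailoverUrl {
    static String build(String scheme, List<String> hosts, int port,
                        String database, Map<String, String> params) {
        // Every host gets the same port, joined with commas.
        List<String> hostPorts = new ArrayList<>();
        for (String host : hosts) {
            hostPorts.add(host + ":" + port);
        }
        // Parameters keep their insertion order when a LinkedHashMap is passed in.
        List<String> pairs = new ArrayList<>();
        for (Map.Entry<String, String> e : params.entrySet()) {
            pairs.add(e.getKey() + "=" + e.getValue());
        }
        String url = "jdbc:" + scheme + "://" + String.join(",", hostPorts) + "/" + database;
        return pairs.isEmpty() ? url : url + "?" + String.join("&", pairs);
    }

    public static void main(String[] args) {
        Map<String, String> params = new LinkedHashMap<>();
        params.put("loggerLevel", "off");
        params.put("targetServerType", "master");
        List<String> hosts = Arrays.asList("192.168.5.30", "192.168.5.31", "192.168.5.32");
        System.out.println(build("postgresql", hosts, 26000, "tpcc_db", params));
    }
}
```

Keeping the host list in one place makes it harder for the BenchmarkSQL configuration and a separate test client to drift apart when a node is added or removed.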

JDBC connection simulation tool, which simulates continuous connections to the database for queries. The code is as follows:

import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;
import java.sql.ResultSet;

public class Test7CommitAndRollback {
    public static void main(String[] args) throws Exception {
        // Load the openGauss JDBC driver.
        Class.forName("org.opengauss.Driver");
        // All three nodes are listed; targetServerType=master asks the driver for the current primary.
        Connection conn = DriverManager.getConnection(
            "jdbc:opengauss://192.168.5.30:26000,192.168.5.31:26000,192.168.5.32:26000/tpcc_db"
                + "?prepareThreshold=1&batchMode=on&fetchsize=10&loggerLevel=off&targetServerType=master",
            "tpcc_usr", "tpcc@123");
        conn.setAutoCommit(false);
        PreparedStatement stmt = null;
        //stmt = conn.prepareStatement("insert into tab_insert(data) values(?)");
        //stmt.setString(1, "test commit");
        //stmt.executeUpdate();
        // Query three random rows.
        stmt = conn.prepareStatement("select * from bmsql_new_order order by random() limit 3;");
        ResultSet rs = stmt.executeQuery();
        while (rs.next()) {
            int d_id = rs.getInt(1);
            int o_id = rs.getInt(2);
            int w_id = rs.getInt(3);
            System.out.println("d_id = " + d_id + ", o_id = " + o_id + ", w_id = " + w_id);
        }
        System.out.println("Query result");
        conn.close();
    }
}
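The blocking times quoted in the scenarios below can be derived from the timestamps of successful probes: the longest gap between two consecutive successes approximates how long the workload was stalled. The following is a minimal sketch of that idea and is not necessarily how the figures in this article were obtained. The class `OutageProbe` and its methods are hypothetical, and the probe passed in `main` is a stand-in that always succeeds; in a real run it would open a connection and execute a query like the class above.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.BooleanSupplier;

// Hypothetical helper for estimating how long a workload was blocked.
class OutageProbe {
    // Longest gap in milliseconds between consecutive successful probes.
    static long longestGapMillis(List<Long> successTimes) {
        long longest = 0;
        for (int i = 1; i < successTimes.size(); i++) {
            longest = Math.max(longest, successTimes.get(i) - successTimes.get(i - 1));
        }
        return longest;
    }

    // Calls the probe repeatedly and records the wall-clock time of each success.
    static List<Long> run(BooleanSupplier probe, int attempts, long sleepMillis)
            throws InterruptedException {
        List<Long> successTimes = new ArrayList<>();
        for (int i = 0; i < attempts; i++) {
            if (probe.getAsBoolean()) {
                successTimes.add(System.currentTimeMillis());
            }
            Thread.sleep(sleepMillis);
        }
        return successTimes;
    }

    public static void main(String[] args) throws InterruptedException {
        // Stand-in probe; replace with a real connect-and-query check.
        List<Long> successTimes = run(() -> true, 5, 100);
        System.out.println("longest gap (ms): " + longestGapMillis(successTimes));
    }
}
```

A probe that blocks inside the driver instead of failing fast still shows up as a long gap, which matches the "wait without connection error" behaviour reported in several scenarios.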

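* Database parameters

2. Test scenarios

2.1 Host failures

2.1.1 Potential standby host crash

Purpose: simulate a hardware failure that brings down the host of the standby in the Potential role.

Method: pick a Potential standby, power it off directly, and observe the workload.

Expected result: while either standby is down, read-write traffic on the primary and read-only traffic on the other standby remain normal. After the failed host recovers, the crashed standby automatically catches up on the replication lag.

Test process and conclusion: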

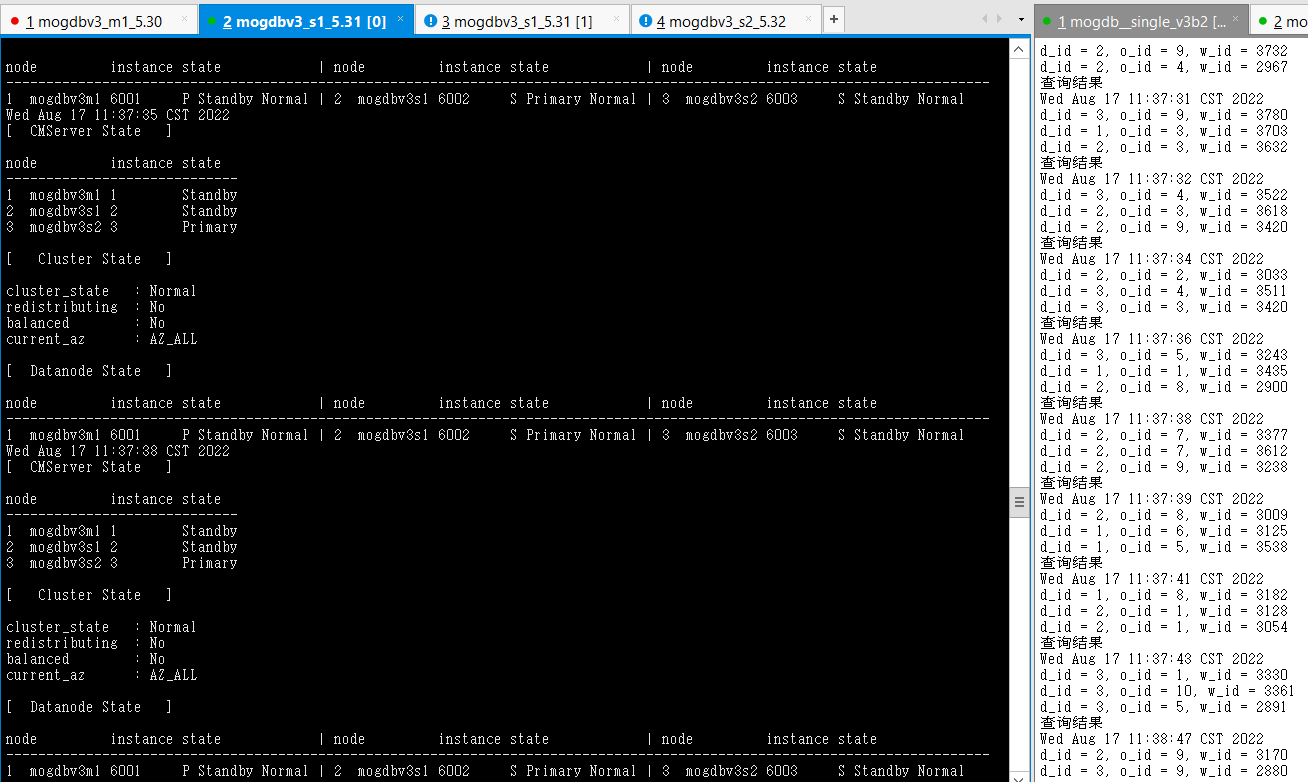

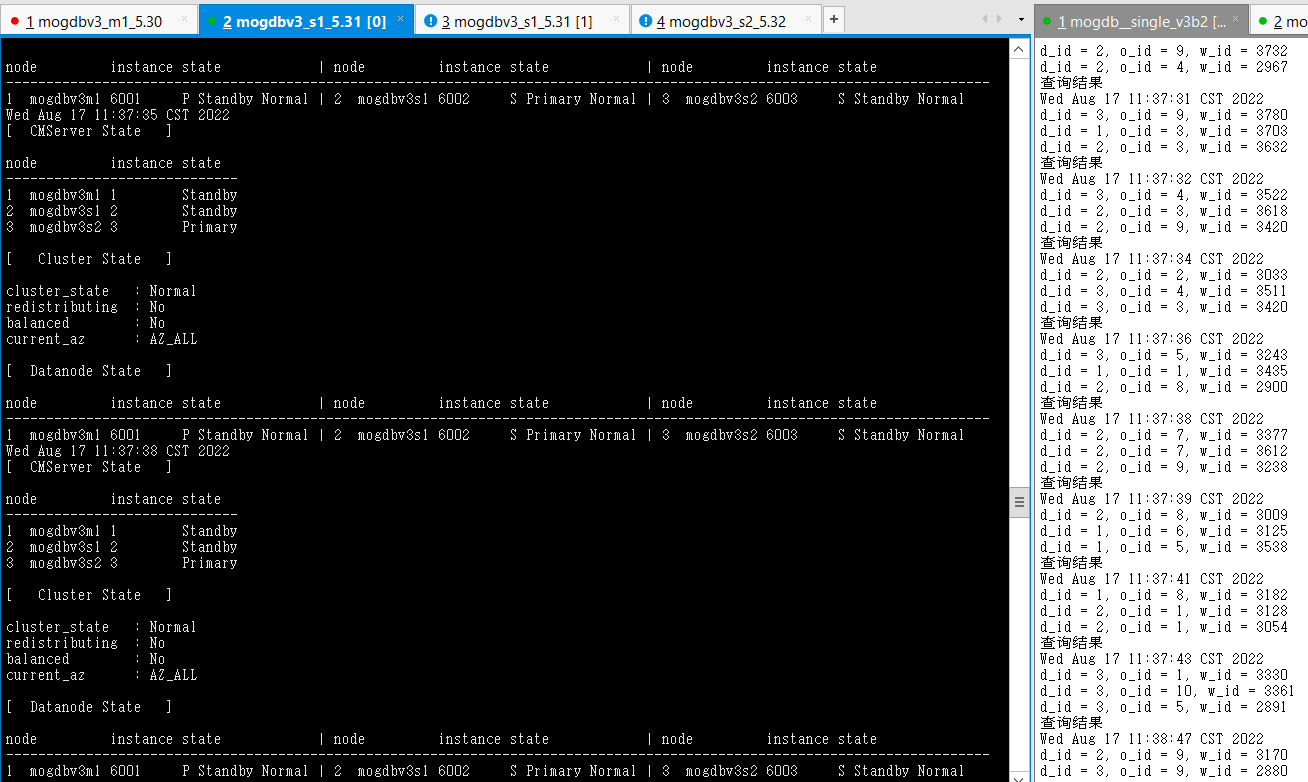

- Normal environment, before the test is run.

HA state:

local_role : Primary

static_connections : 2

db_state : Normal

detail_information : Normal

Senders info:

sender_pid : 2614

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 1/4027F300

sender_write_location : 1/4027F300

sender_flush_location : 1/4027F300

sender_replay_location : 1/4027F300

receiver_received_location : 1/4027EB30

receiver_write_location : 1/4027DFD8

receiver_flush_location : 1/4027DFD8

receiver_replay_location : 1/40269CD0

sync_percent : 99%

sync_state : Sync

sync_priority : 1

sync_most_available : On

channel : 192.168.5.31:26001-->192.168.5.32:47642

sender_pid : 29676

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 1/4027F300

sender_write_location : 1/4027F300

sender_flush_location : 1/4027F300

sender_replay_location : 1/4027F300

receiver_received_location : 1/4027EB30

receiver_write_location : 1/4025B848

receiver_flush_location : 1/4025B848

receiver_replay_location : 1/38F9D560

sync_percent : 99%

sync_state : Potential

sync_priority : 1

sync_most_available : On

channel : 192.168.5.31:26001-->192.168.5.30:51958
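The location fields in this output can be turned into a replication lag in bytes. The sketch below assumes the PostgreSQL-style LSN layout, in which the part before the slash is the high 32 bits and the part after it is the low 32 bits, both in hexadecimal; that layout and the class name `LsnLag` are assumptions made for illustration. It compares `sender_flush_location` with `receiver_replay_location` for the Sync and Potential standbys shown above.

```java
// Hypothetical helper for comparing two LSN values from gs_ctl query output.
class LsnLag {
    // Converts an LSN such as "1/4027F300" to an absolute byte position.
    static long toBytes(String lsn) {
        String[] parts = lsn.trim().split("/");
        if (parts.length != 2) {
            throw new IllegalArgumentException("not an LSN: " + lsn);
        }
        long high = Long.parseLong(parts[0], 16);
        long low = Long.parseLong(parts[1], 16);
        return (high << 32) | low;
    }

    // Bytes by which the receiver position trails the sender position.
    static long lagBytes(String senderLsn, String receiverLsn) {
        return toBytes(senderLsn) - toBytes(receiverLsn);
    }

    public static void main(String[] args) {
        // Values copied from the output above.
        System.out.println("Sync replay lag (bytes): " + lagBytes("1/4027F300", "1/40269CD0"));
        System.out.println("Potential replay lag (bytes): " + lagBytes("1/4027F300", "1/38F9D560"));
    }
}
```

Under this reading, the Potential standby's replay position trails the primary by far more than the Sync standby's, even though both report a `sync_percent` of 99%.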

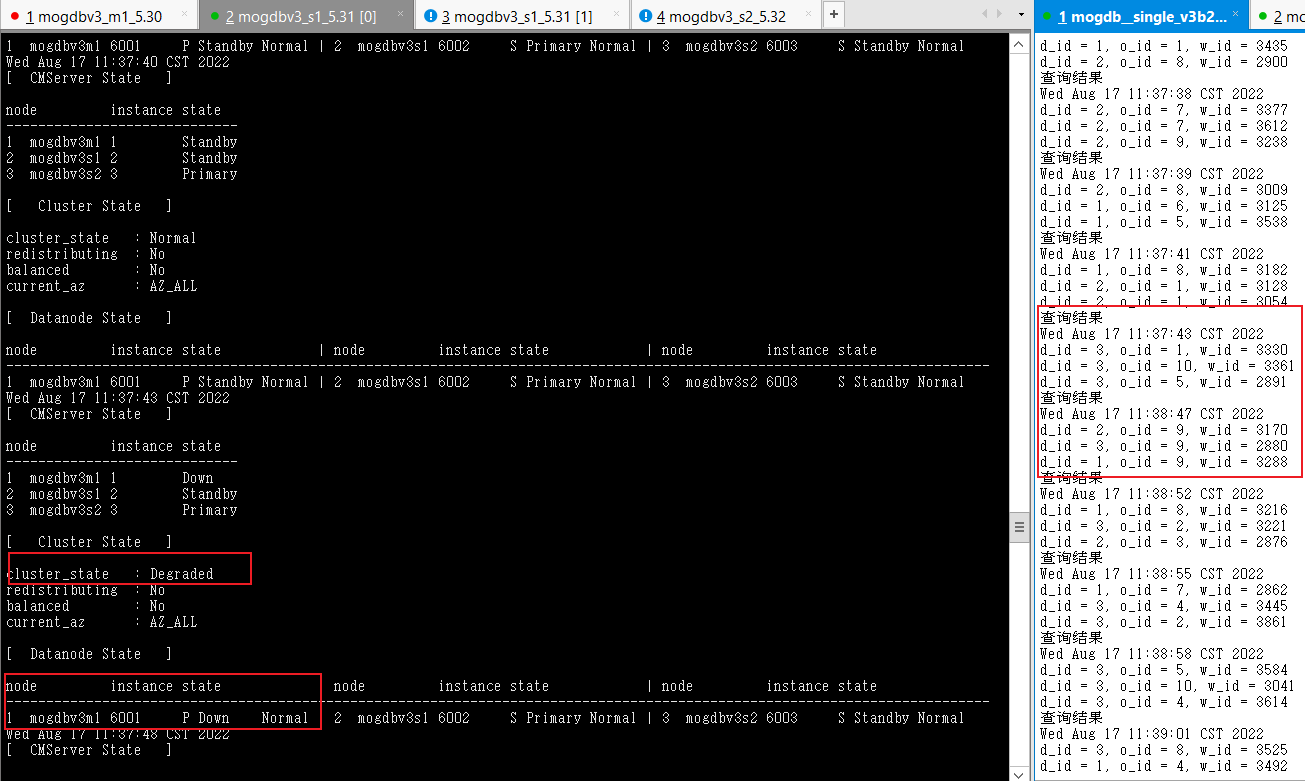

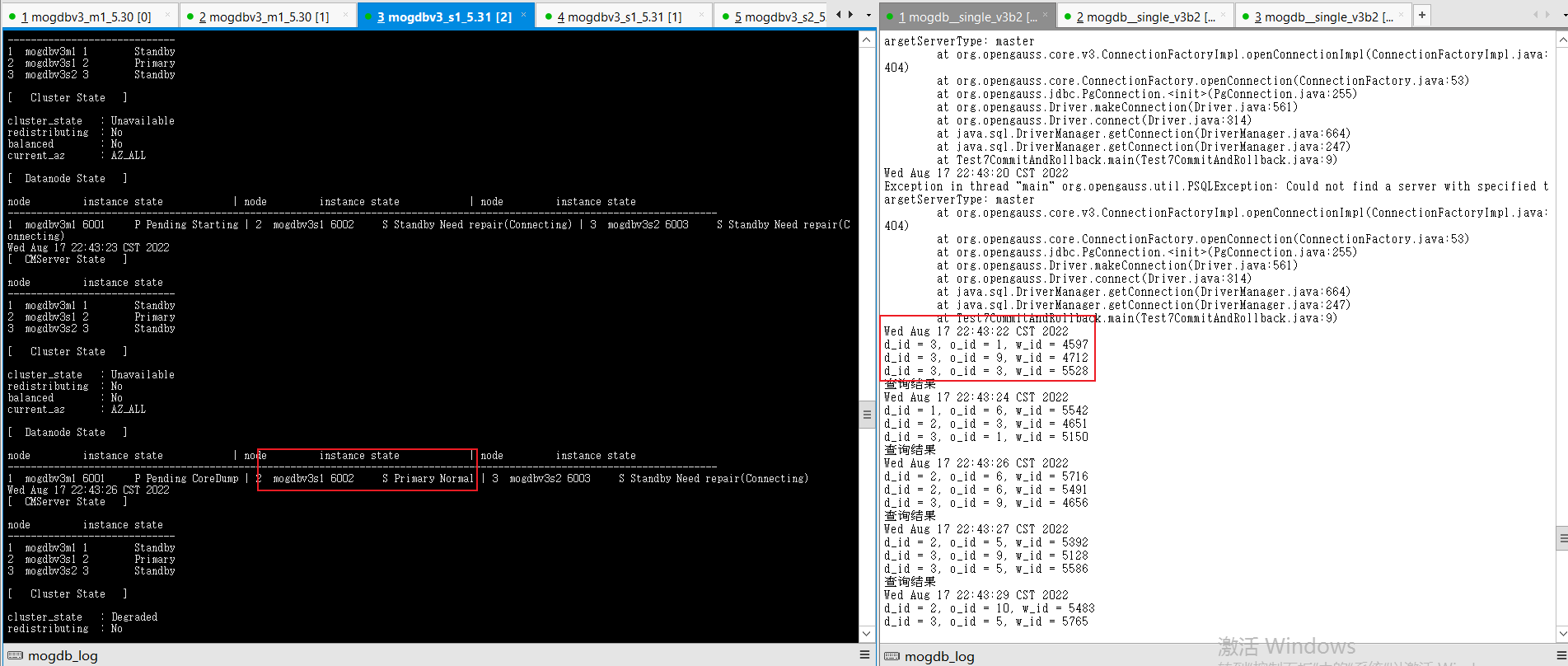

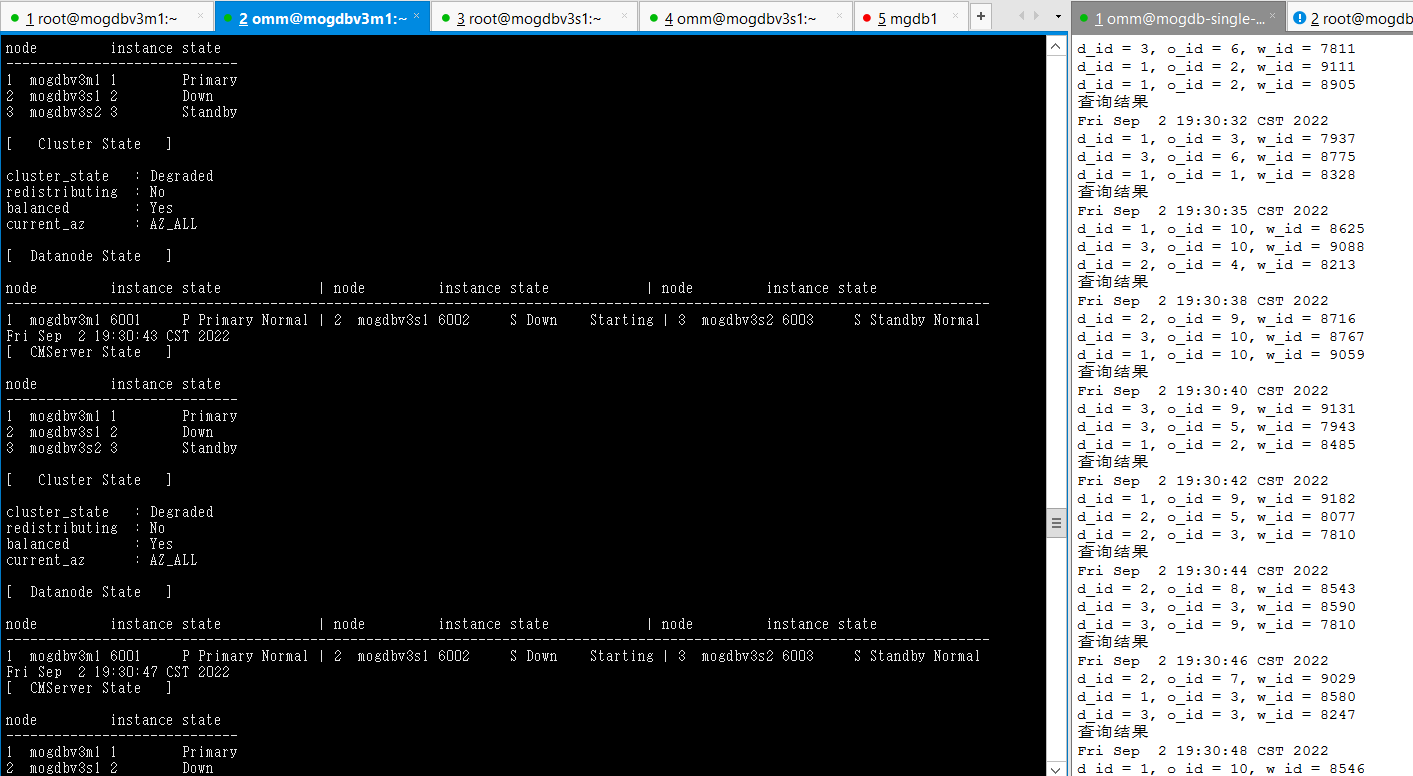

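- Shut down node mogdbv3m1, which is running as a standby, and check the database state and the workload interruption. The test workload waited for 64 s, but no connection errors occurred.

- Restore the crashed standby mogdbv3m1. The workload did not wait during recovery.

- Conclusion: as expected, but the workload was blocked for a relatively long time during the switchover.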

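2.1.2 Sync standby host crash

Purpose: simulate a hardware failure that brings down the host of the Sync standby.

Method: pick a Sync standby, power it off directly, and observe the workload.

Expected result: while either standby is down, read-write traffic on the primary and read-only traffic on the other standby remain normal. After the failed host recovers, the crashed standby automatically catches up on the replication lag.

Test process and conclusion:

- Environment state before the switchover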

[omm@mogdbv3s1 ~]$ gs_ctl query

[2022-08-17 12:01:13.723][8405][][gs_ctl]: gs_ctl query ,datadir is /opt/mogdb/data

HA state:

local_role : Primary

static_connections : 2

db_state : Normal

detail_information : Normal

Senders info:

sender_pid : 2614

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 1/6708EA60

sender_write_location : 1/6708EA60

sender_flush_location : 1/6708EA60

sender_replay_location : 1/6708EA60

receiver_received_location : 1/6708EA60

receiver_write_location : 1/6708EA60

receiver_flush_location : 1/6708EA60

receiver_replay_location : 1/6708EA60

sync_percent : 100%

sync_state : Sync

sync_priority : 1

sync_most_available : On

channel : 192.168.5.31:26001-->192.168.5.32:47642

sender_pid : 6036

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 1/6708EA60

sender_write_location : 1/6708EA60

sender_flush_location : 1/6708EA60

sender_replay_location : 1/6708EA60

receiver_received_location : 1/6708EA60

receiver_write_location : 1/6708EA60

receiver_flush_location : 1/6708EA60

receiver_replay_location : 1/6708EA60

sync_percent : 100%

sync_state : Potential

sync_priority : 1

sync_most_available : On

channel : 192.168.5.31:26001-->192.168.5.30:58258

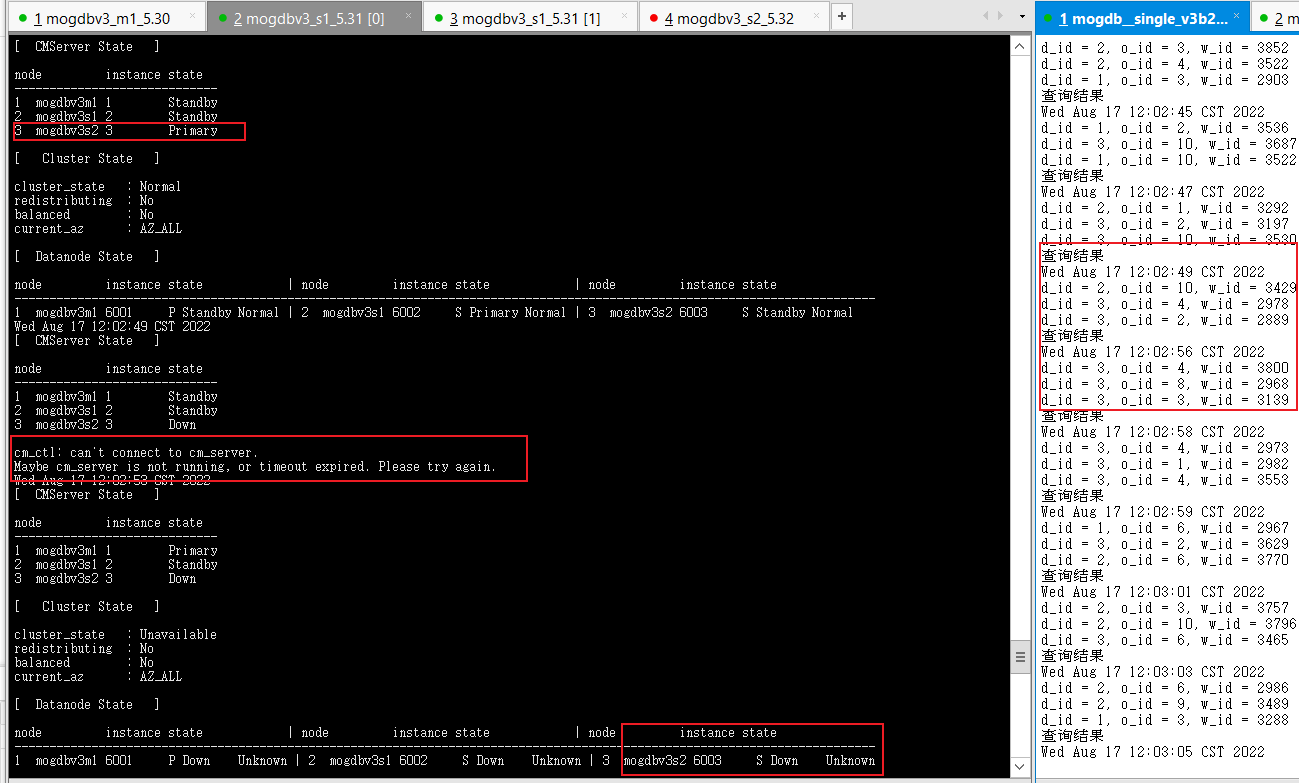

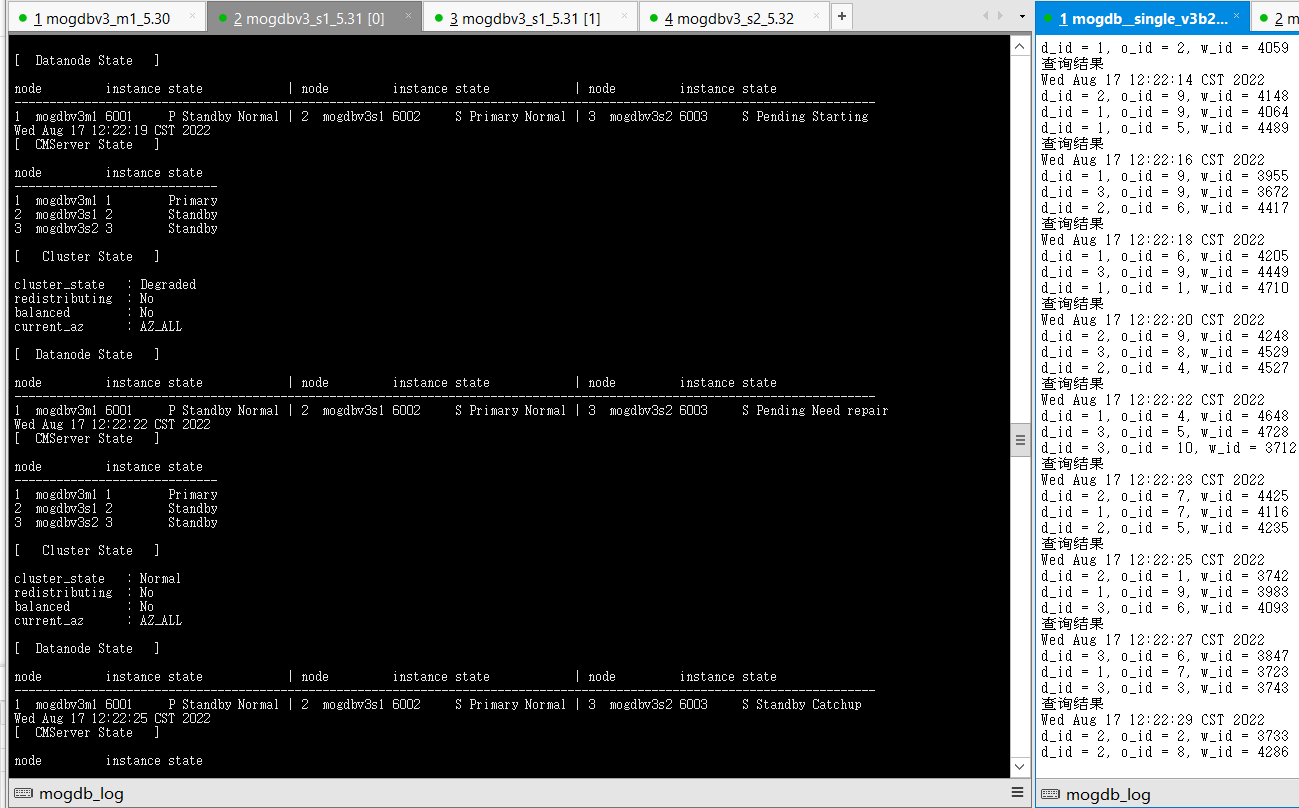

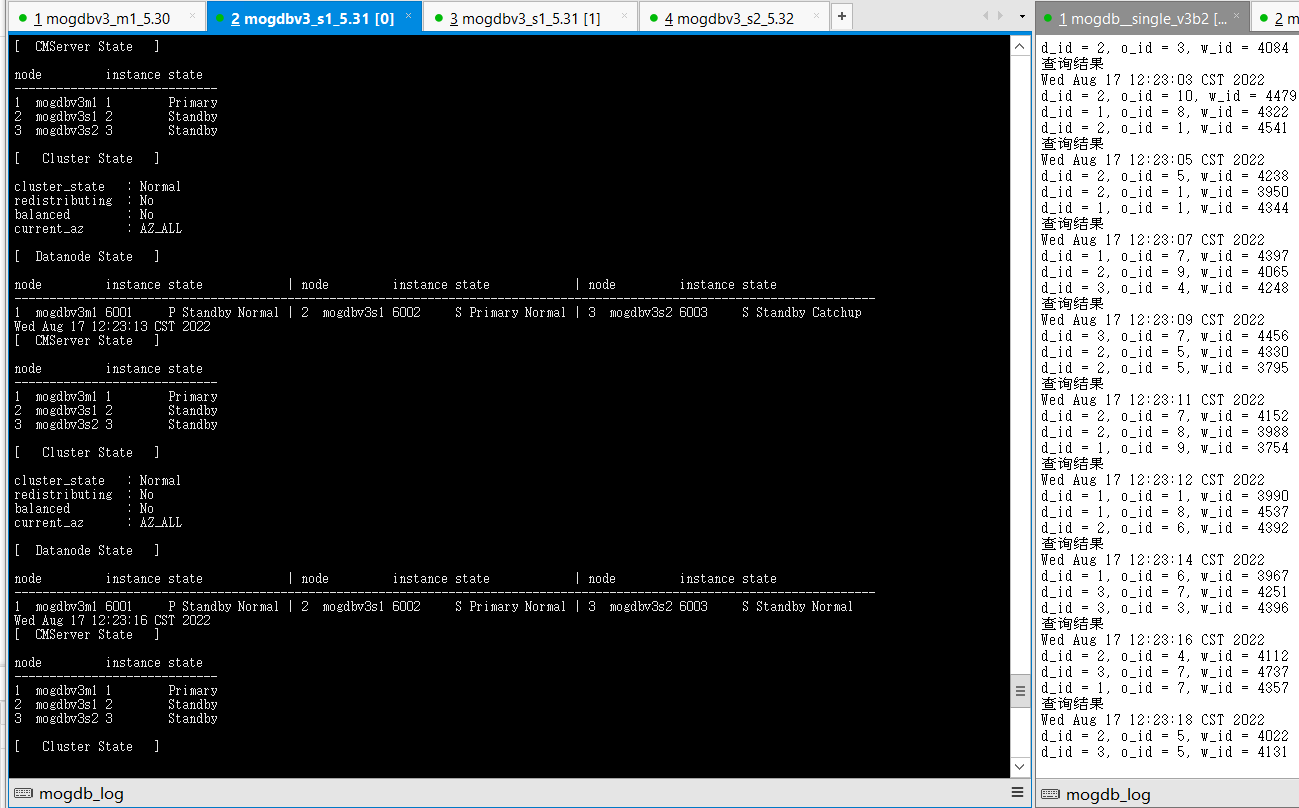

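- Shut down the datanode on mogdbv3s2, which is running as a standby, and check the database state and the workload interruption. The test workload's JDBC connection waited for about 5 s, but no connection errors occurred. Because the CMServer primary was also running on this node, the test triggered a CMServer switchover, and cm commands were unavailable for about 2 s.

- Restore the crashed standby mogdbv3s2. The workload did not wait during recovery.

- Conclusion: as expected.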

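2.1.3 Primary host crash

Purpose: simulate a hardware failure that brings down the primary's host.

Method: power off the primary's host directly.

Expected result: after the primary's host goes down and the workload is interrupted for some seconds, one of the two standbys is promoted to the new primary and the workload returns to normal. After the failed host recovers, the crashed primary becomes a standby and automatically catches up on the replication lag.

Test process and conclusion:

- Environment state before the switchover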

[omm@mogdbv3s1 ~]$ gs_ctl query

[2022-08-17 13:24:27.061][30665][][gs_ctl]: gs_ctl query ,datadir is /opt/mogdb/data

HA state:

local_role : Primary

static_connections : 2

db_state : Normal

detail_information : Normal

Senders info:

sender_pid : 14580

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 1/F86E1820

sender_write_location : 1/F86E1820

sender_flush_location : 1/F86E1820

sender_replay_location : 1/F86E1820

receiver_received_location : 1/F86E1820

receiver_write_location : 1/F86E1820

receiver_flush_location : 1/F86E1820

receiver_replay_location : 1/F86E1820

sync_percent : 100%

sync_state : Sync

sync_priority : 1

sync_most_available : On

channel : 192.168.5.31:26001-->192.168.5.32:42666

sender_pid : 6036

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 1/F86E1820

sender_write_location : 1/F86E1820

sender_flush_location : 1/F86E1820

sender_replay_location : 1/F86E1820

receiver_received_location : 1/F86E1820

receiver_write_location : 1/F86D4690

receiver_flush_location : 1/F86D4690

receiver_replay_location : 1/F86B59F8

sync_percent : 99%

sync_state : Potential

sync_priority : 1

sync_most_available : On

channel : 192.168.5.31:26001-->192.168.5.30:58258

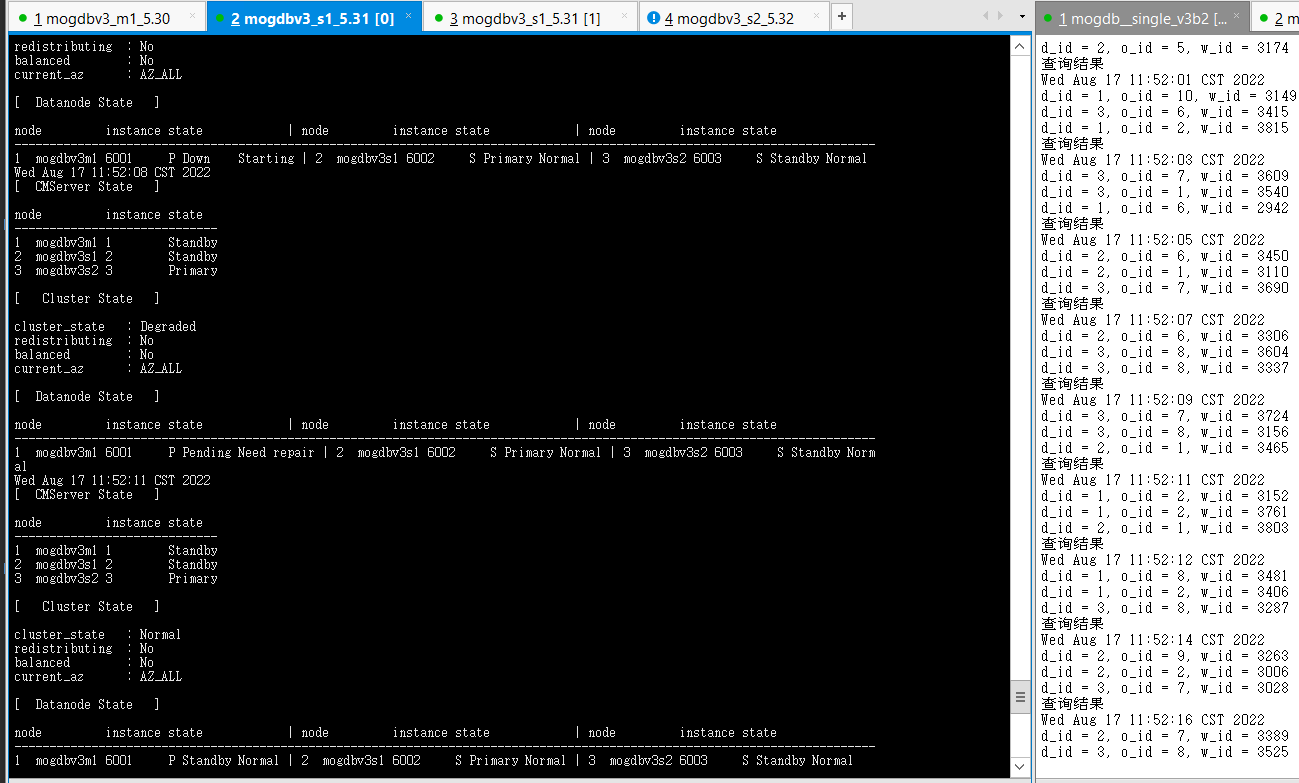

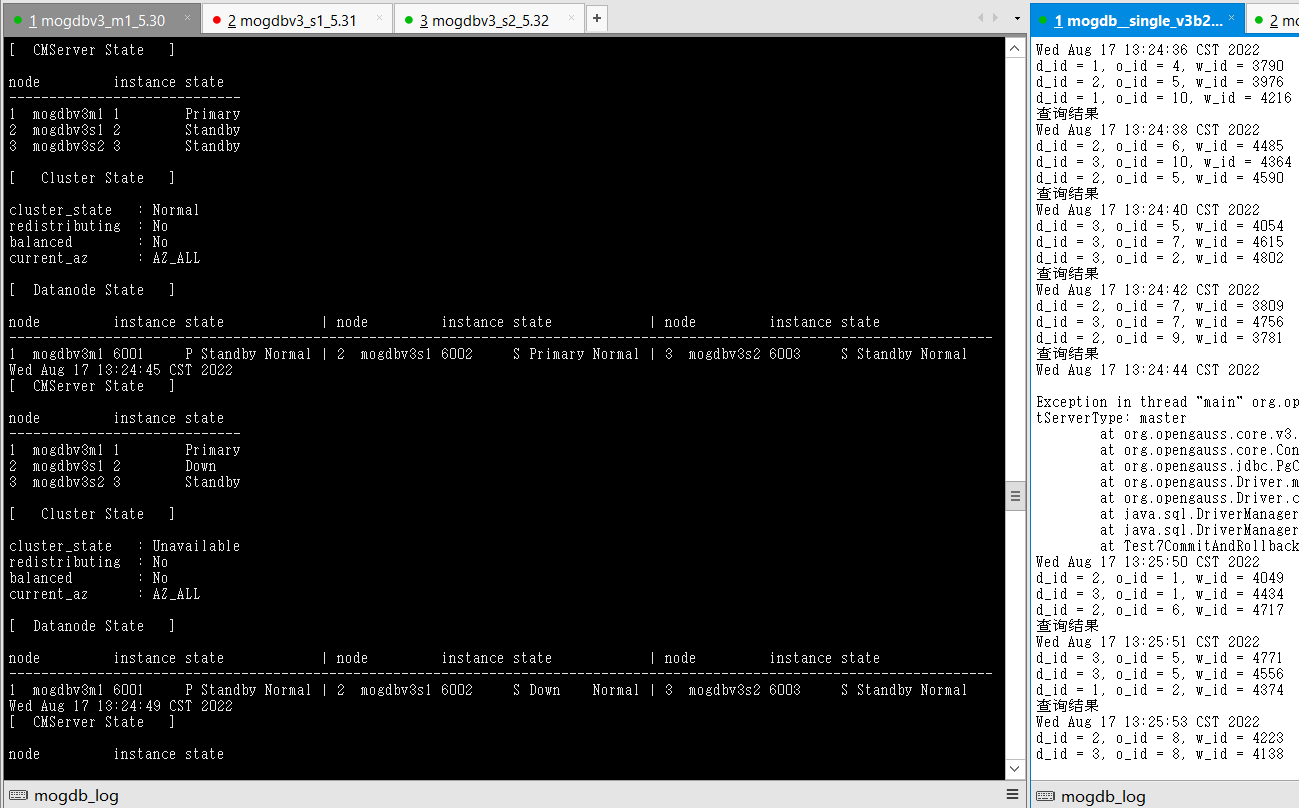

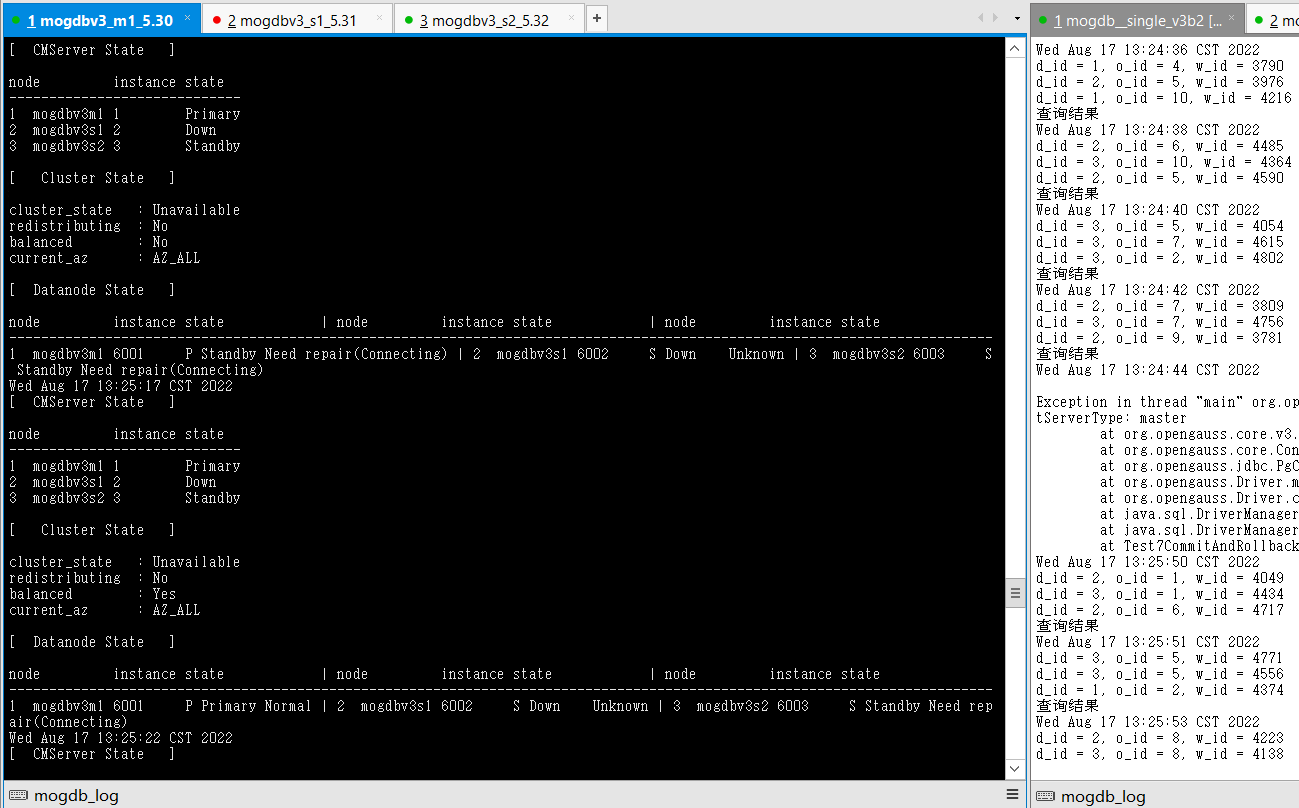

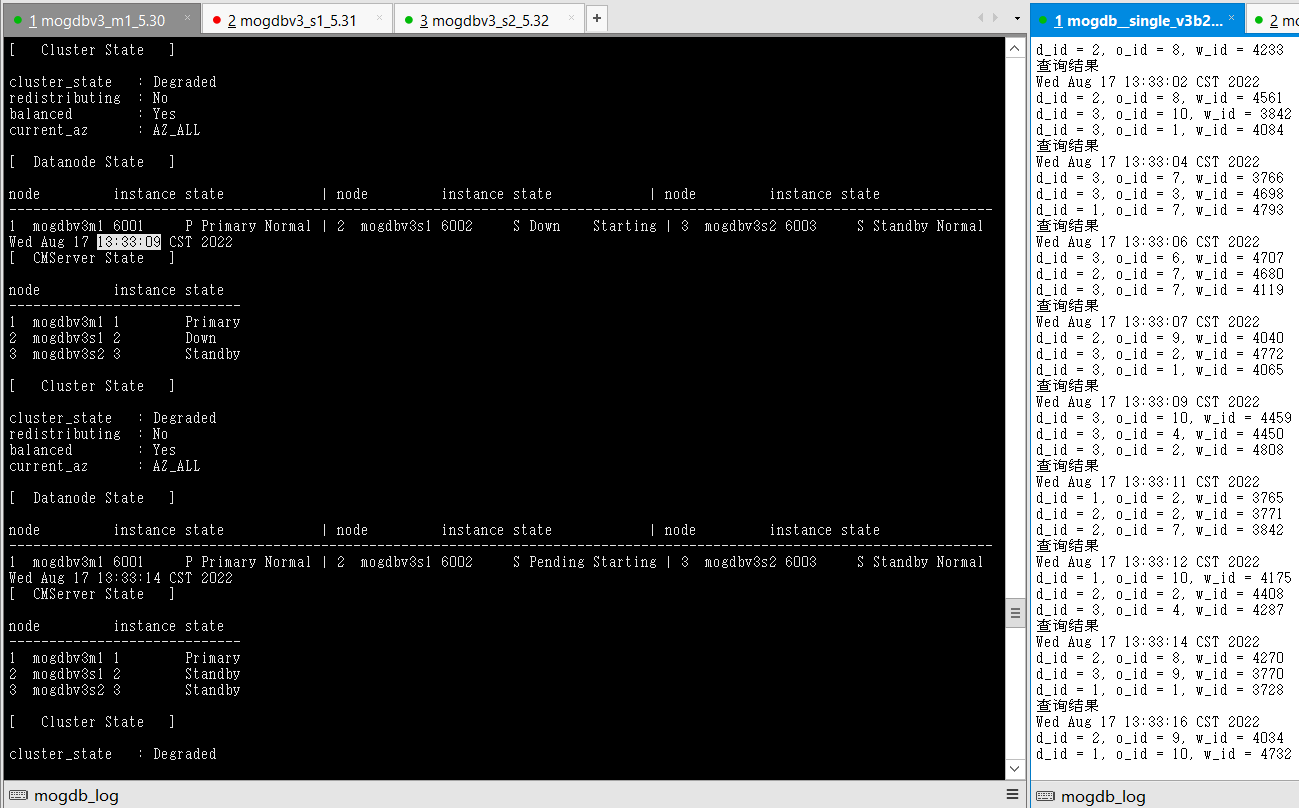

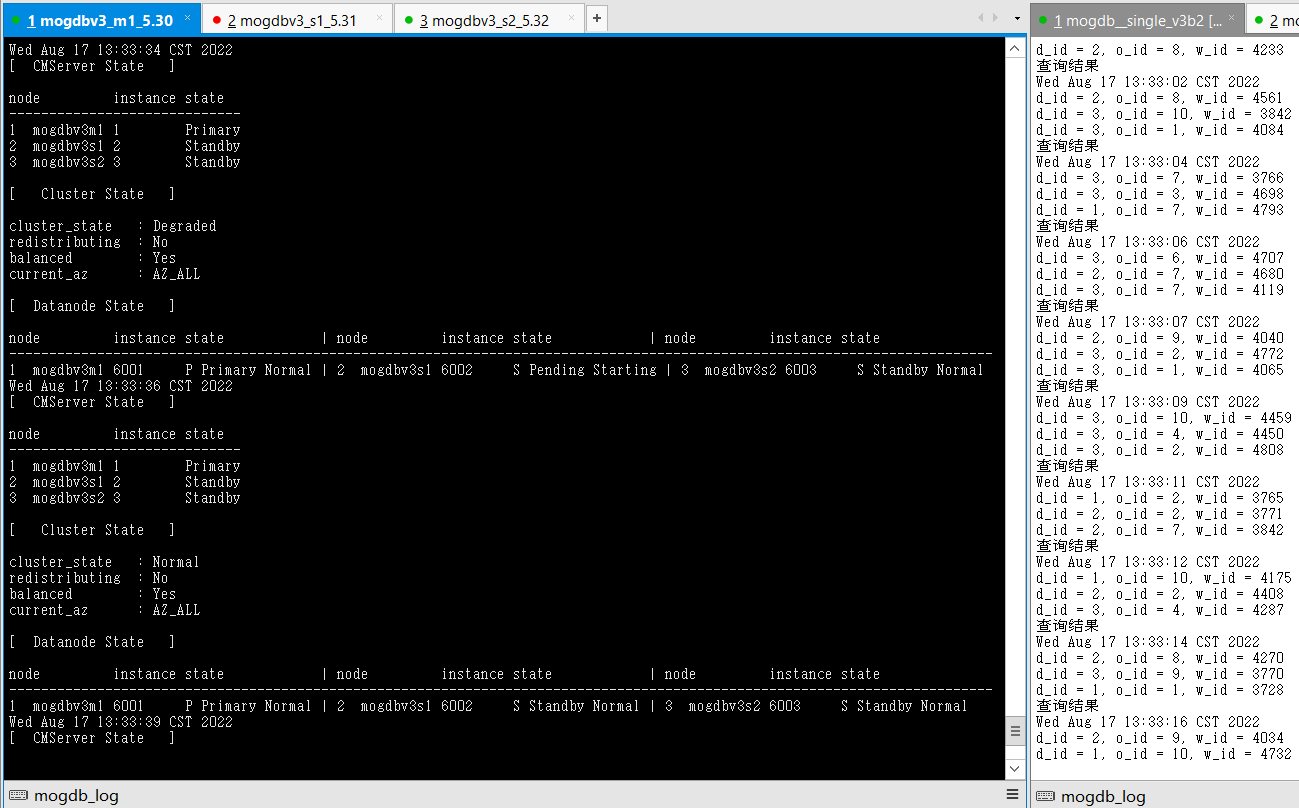

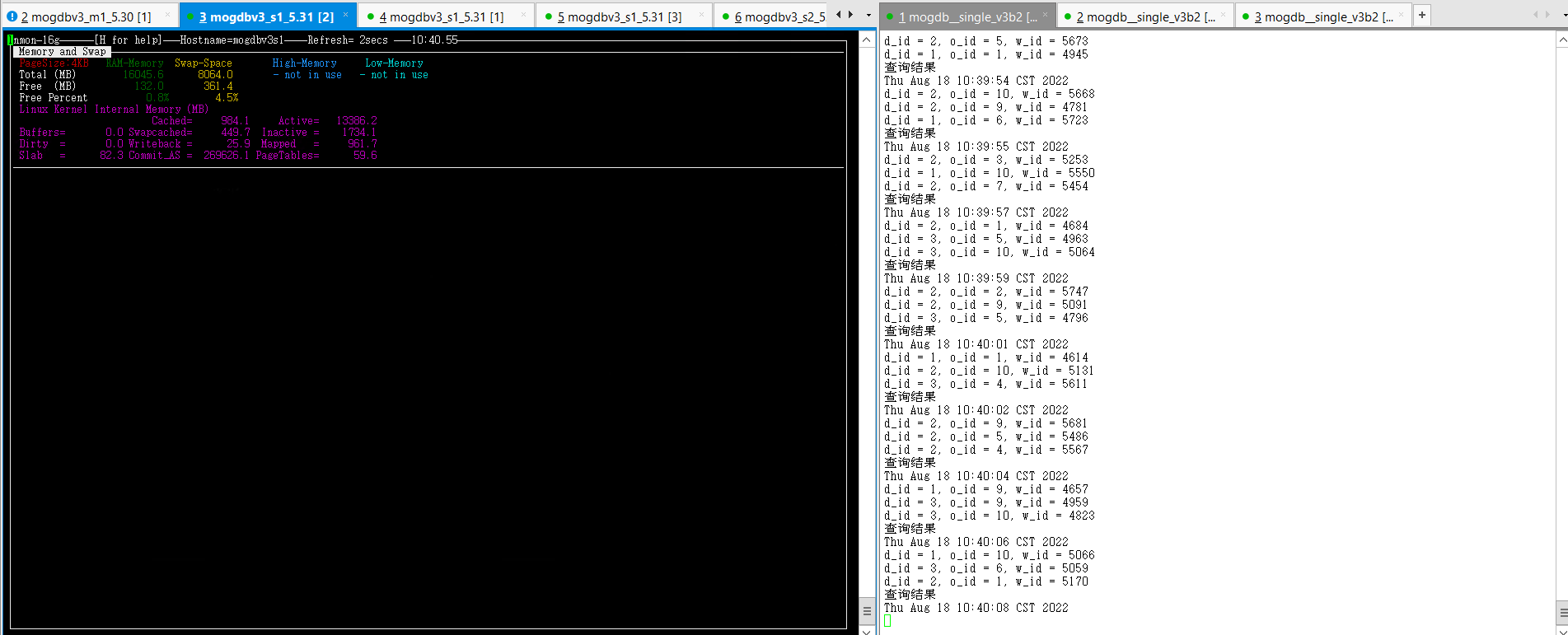

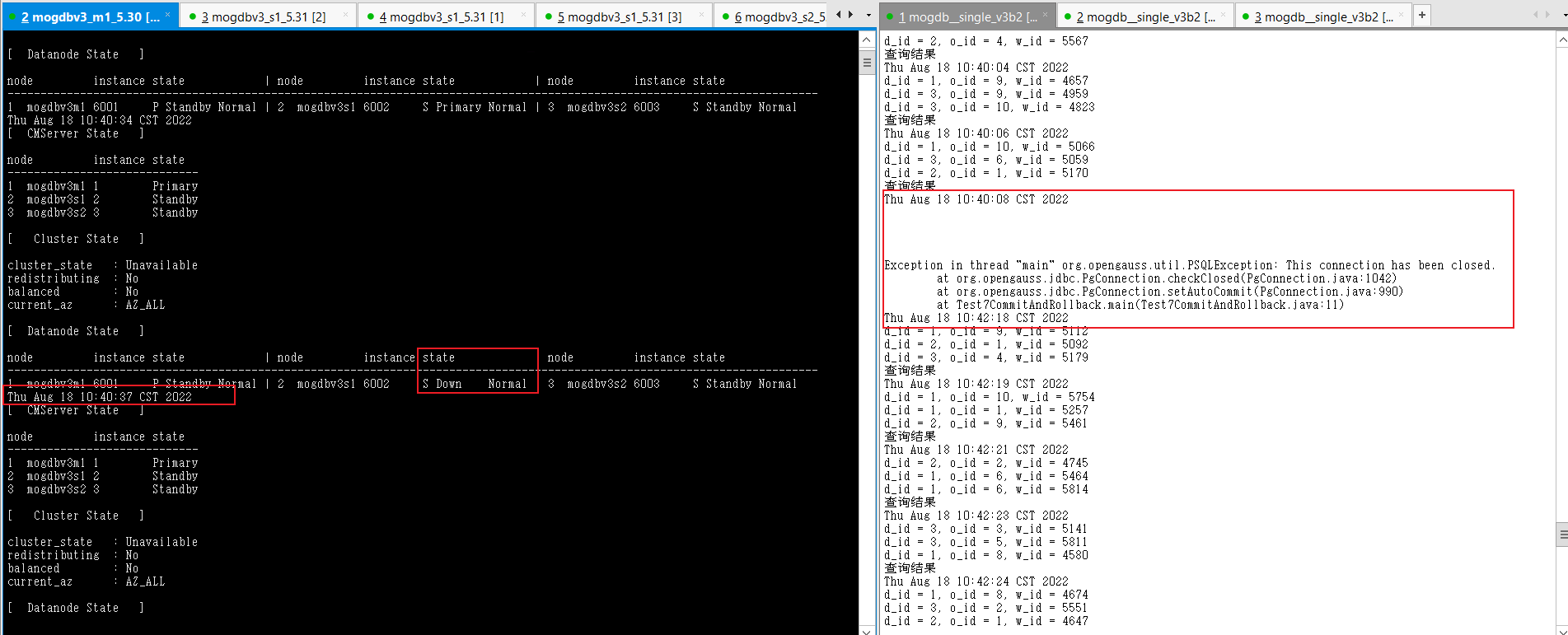

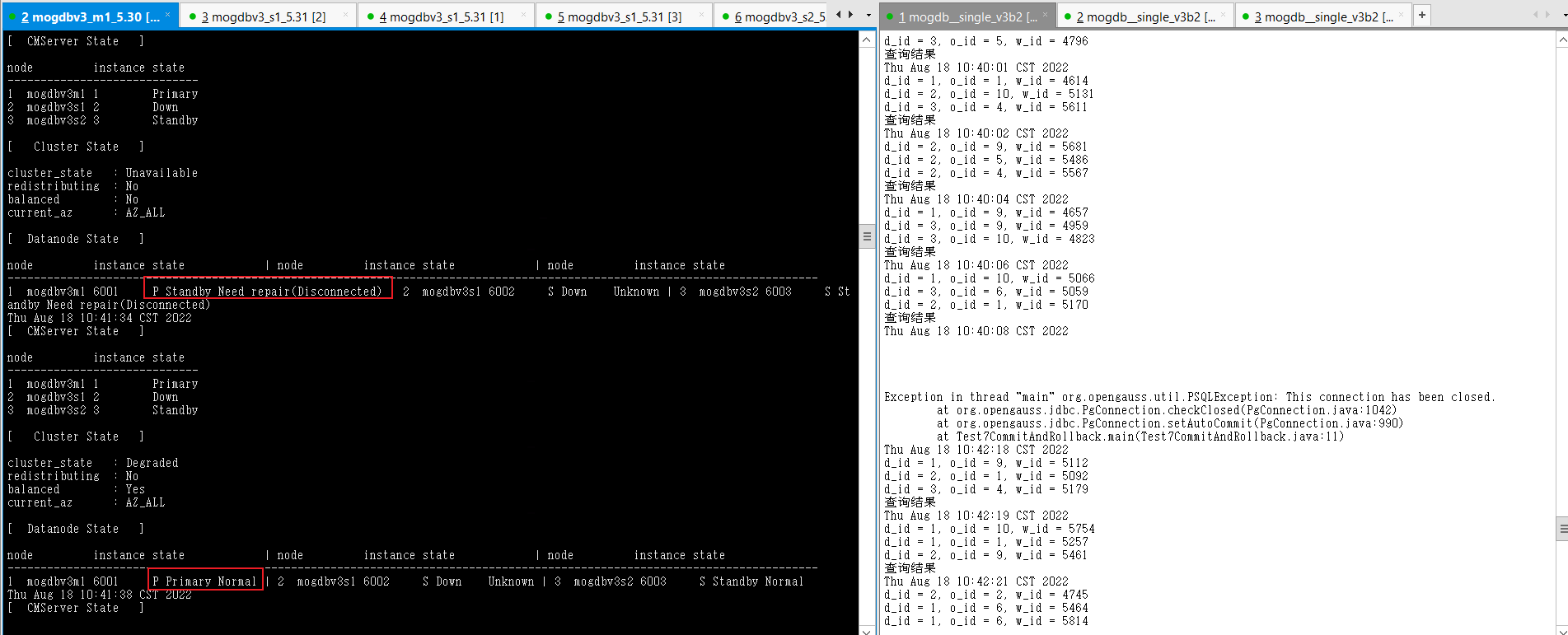

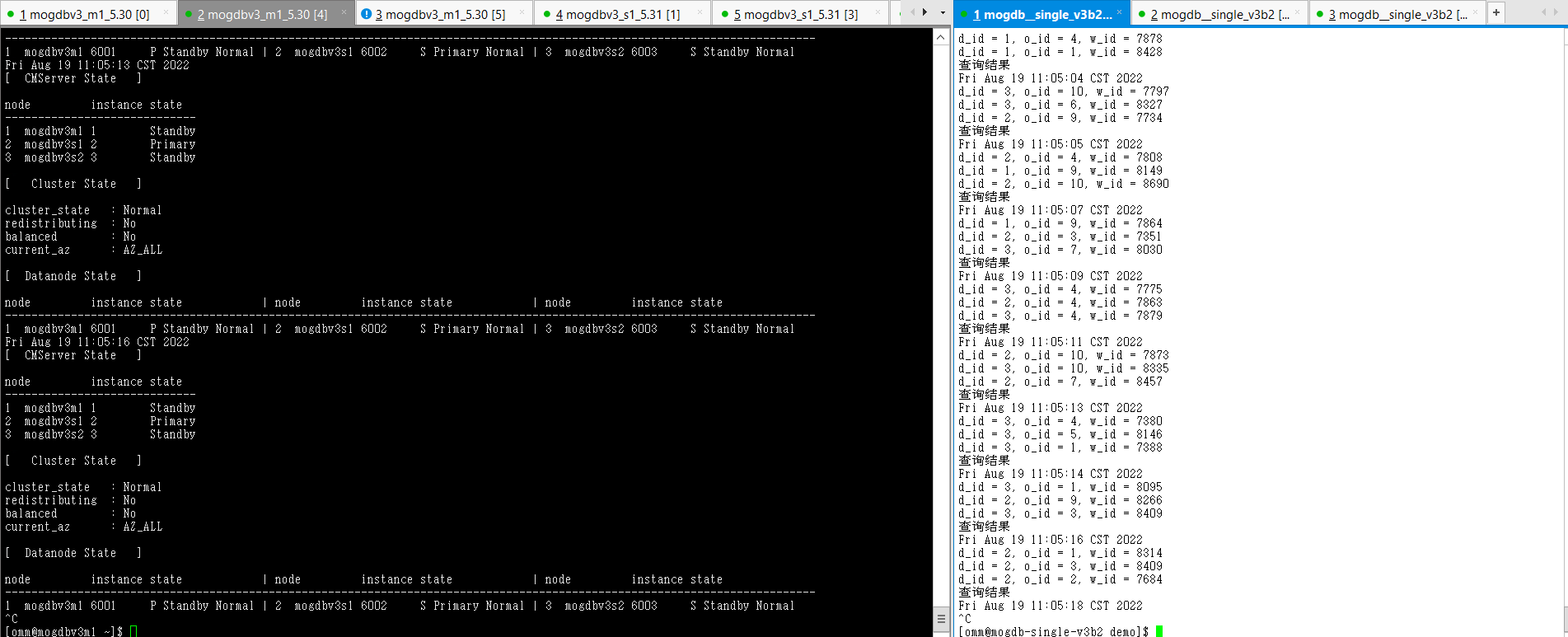

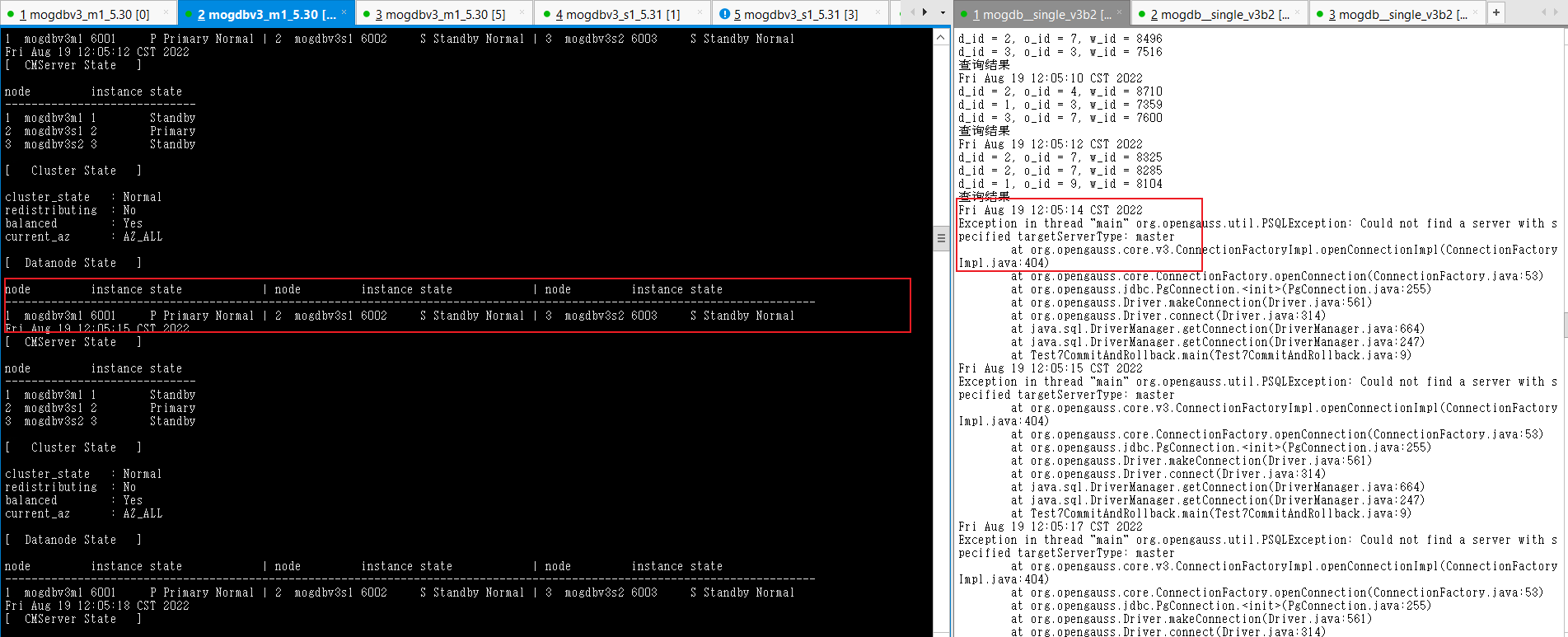

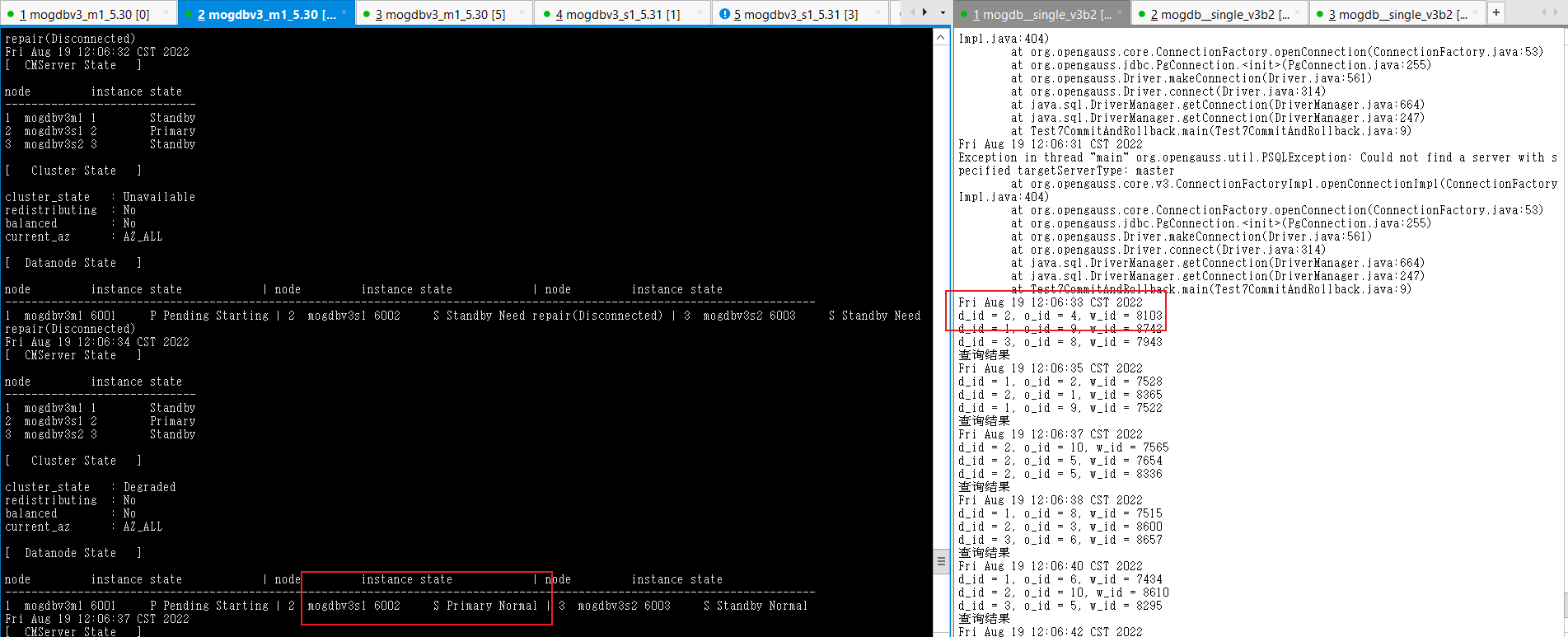

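- Shut down node mogdbv3s1, which is running as the primary, and check the database state and the workload interruption. The test workload was blocked for about 1 minute and reported connection errors. After about one minute a new primary was in place and the workload reconnected successfully.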

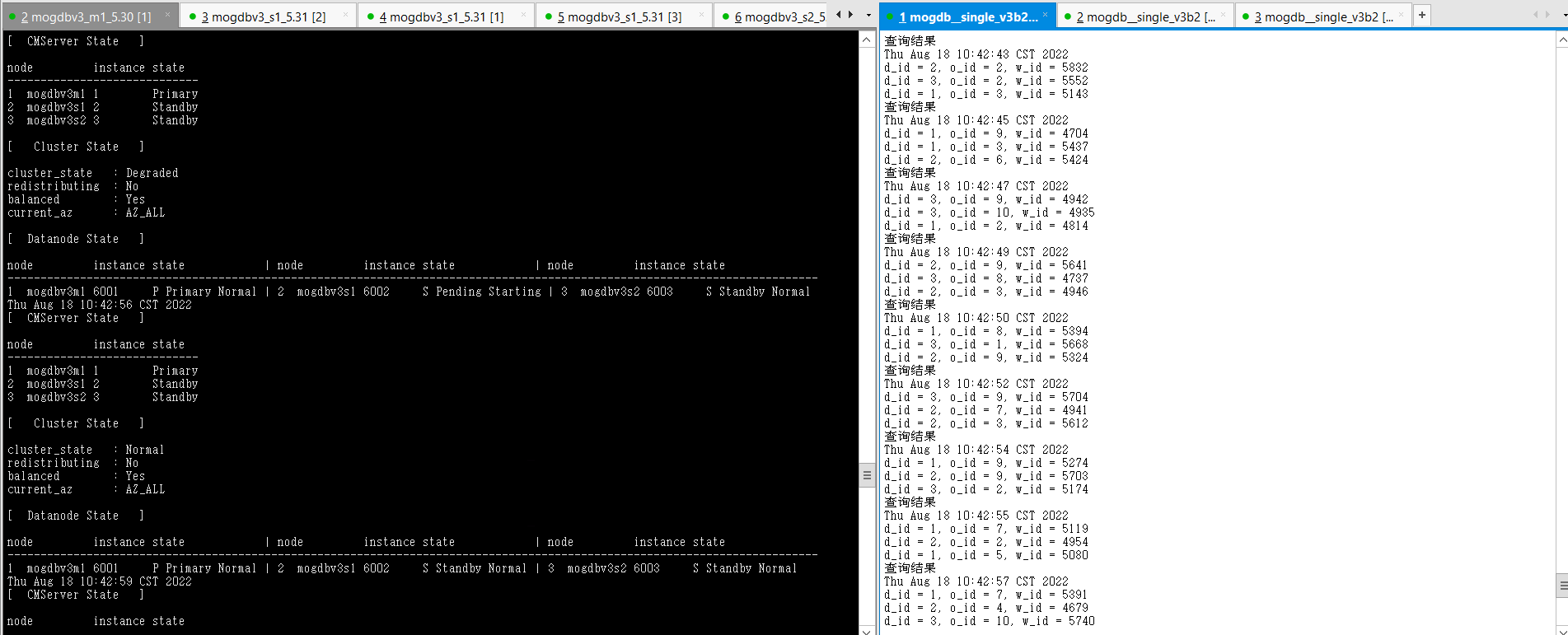

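- After being restored, the crashed primary automatically caught up with the new primary and became a standby. The test workload was not affected during recovery.

- Conclusion: as expected, but the workload was blocked for a relatively long time during the switchover.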

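2.1.4 Primary file system full

Purpose: simulate the primary's file system filling up in a running environment.

Method: use the fallocate command to create a file so that the directory holding the MogDB software and data files reaches 100% usage. Before the test, set the CM parameter enable_transaction_read_only by running gs_guc set -Z cmserver -N all -I all -c "enable_transaction_read_only=off", which keeps CM from over-reacting to this kind of failure.

Expected result: the primary can no longer write, so the database crashes abnormally. One of the two standbys is promoted to the new primary and the workload returns to normal. After the failed host recovers, the crashed primary becomes a standby and automatically catches up on the replication lag.

Test process and conclusion:

- Normal state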

[omm@mogdbv3m1 ~]$ df -h

Filesystem Size Used Avail Use% Mounted on

devtmpfs 7.9G 0 7.9G 0% /dev

tmpfs 7.9G 20K 7.9G 1% /dev/shm

tmpfs 7.9G 8.9M 7.9G 1% /run

tmpfs 7.9G 0 7.9G 0% /sys/fs/cgroup

/dev/mapper/centos-root 92G 15G 77G 16% /

/dev/sda1 1014M 137M 878M 14% /boot

tmpfs 1.6G 0 1.6G 0% /run/user/0

tmpfs 1.6G 0 1.6G 0% /run/user/1000

[omm@mogdbv3m1 ~]$ echo $PGDATA

/opt/mogdb/data

[ CMServer State ]

node instance state

-----------------------------

1 mogdbv3m1 1 Primary

2 mogdbv3s1 2 Standby

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : Yes

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal | 3 mogdbv3s2 6003 S Standby Normal
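When a test script needs to know which node currently holds the primary role, the `[ Datanode State ]` row can be parsed directly. The sketch below assumes the column layout shown in this article (node id, host name, instance id, a one-letter role, the current role, then the state); the one-letter column appears to be the role assigned at installation, since the 2.1.6 output shows a node marked `P` that is currently `Standby`. The class name `DatanodeState` is hypothetical.

```java
import java.util.Optional;

// Hypothetical parser for the single-row "[ Datanode State ]" output shown above.
class DatanodeState {
    // Returns the host whose current role is Primary, if there is one.
    static Optional<String> currentPrimary(String stateLine) {
        for (String entry : stateLine.split("\\|")) {
            String[] fields = entry.trim().split("\\s+");
            // fields: node id, host, instance id, installed role letter, current role, state...
            if (fields.length >= 6 && fields[4].equals("Primary")) {
                return Optional.of(fields[1]);
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        String line = "1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal"
                + " | 3 mogdbv3s2 6003 S Standby Normal";
        System.out.println(currentPrimary(line).orElse("no primary"));
    }
}
```

Polling this value before and after a fault is one way to timestamp the moment a new primary appears.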

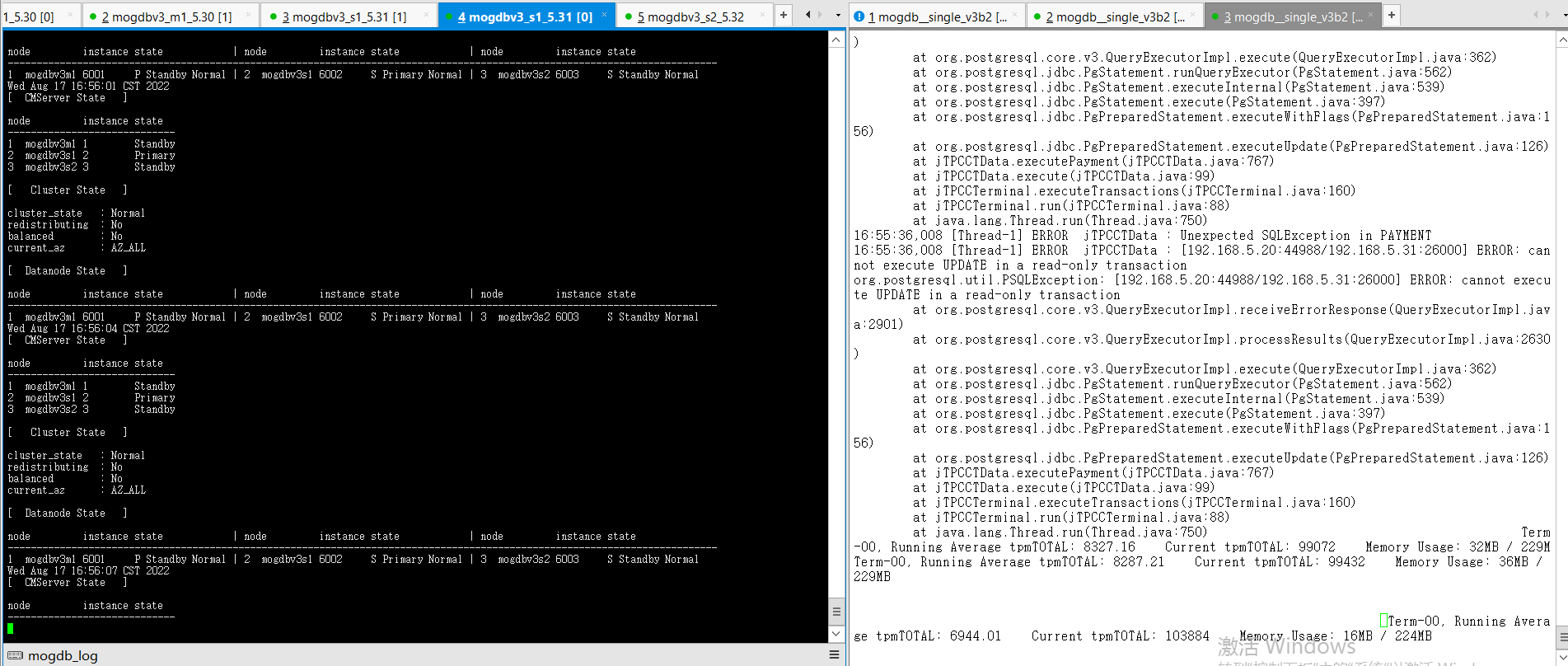

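- Use the fallocate command to fill the MogDB home directory. The primary crashed because it could not write, and node mogdbv3s1 was promoted to primary. The workload was interrupted for 19 s during the switchover. Because the CMServer primary was on the same node and also switched over, cm commands were interrupted for 4 s.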

[omm@mogdbv3m1 ~]$ df -h

Filesystem Size Used Avail Use% Mounted on

devtmpfs 7.9G 0 7.9G 0% /dev

tmpfs 7.9G 20K 7.9G 1% /dev/shm

tmpfs 7.9G 8.9M 7.9G 1% /run

tmpfs 7.9G 0 7.9G 0% /sys/fs/cgroup

/dev/mapper/centos-root 92G 92G 900K 100% /

/dev/sda1 1014M 137M 878M 14% /boot

tmpfs 1.6G 0 1.6G 0% /run/user/0

tmpfs 1.6G 0 1.6G 0% /run/user/1000

- After file system space was freed, the crashed node started automatically and ran a build to return to a normal state. The workload was not affected during recovery.

- Conclusion: as expected.

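2.1.5 Standby file system full

Method: use the fallocate command to create a file so that, on one standby, the directory holding the MogDB software and data files reaches 100% usage. Before the test, set the CM parameter enable_transaction_read_only by running gs_guc set -Z cmserver -N all -I all -c "enable_transaction_read_only=off", which keeps CM from over-reacting to this kind of failure.

Expected result: the standby can no longer write, so replication is interrupted and the standby crashes abnormally. The workload is not affected during this time. After the failed standby recovers, it automatically catches up on the replication lag.

Test process and conclusion:

- Normal state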

[omm@mogdbv3s1 pg_xlog]$ df -h

Filesystem Size Used Avail Use% Mounted on

devtmpfs 7.9G 0 7.9G 0% /dev

tmpfs 7.9G 20K 7.9G 1% /dev/shm

tmpfs 7.9G 73M 7.8G 1% /run

tmpfs 7.9G 0 7.9G 0% /sys/fs/cgroup

/dev/mapper/centos-root 92G 25G 67G 27% /

/dev/sda1 1014M 137M 878M 14% /boot

tmpfs 1.6G 0 1.6G 0% /run/user/1000

tmpfs 1.6G 0 1.6G 0% /run/user/0

[omm@mogdbv3s1 pg_xlog]$ echo $PGDATA

/opt/mogdb/data

[omm@mogdbv3s1 pg_xlog]$ cm_ctl query -vC;

[ CMServer State ]

node instance state

-----------------------------

1 mogdbv3m1 1 Primary

2 mogdbv3s1 2 Standby

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : Yes

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal | 3 mogdbv3s2 6003 S Standby Normal

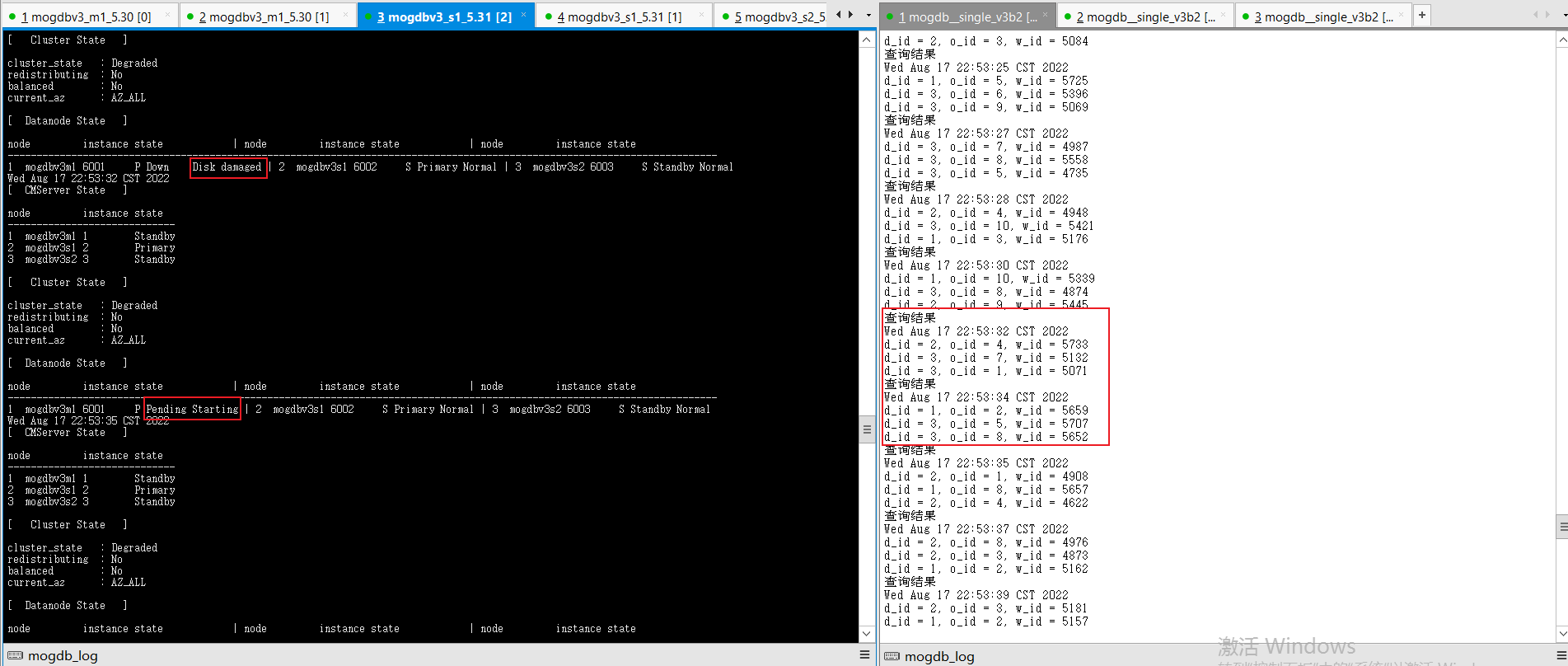

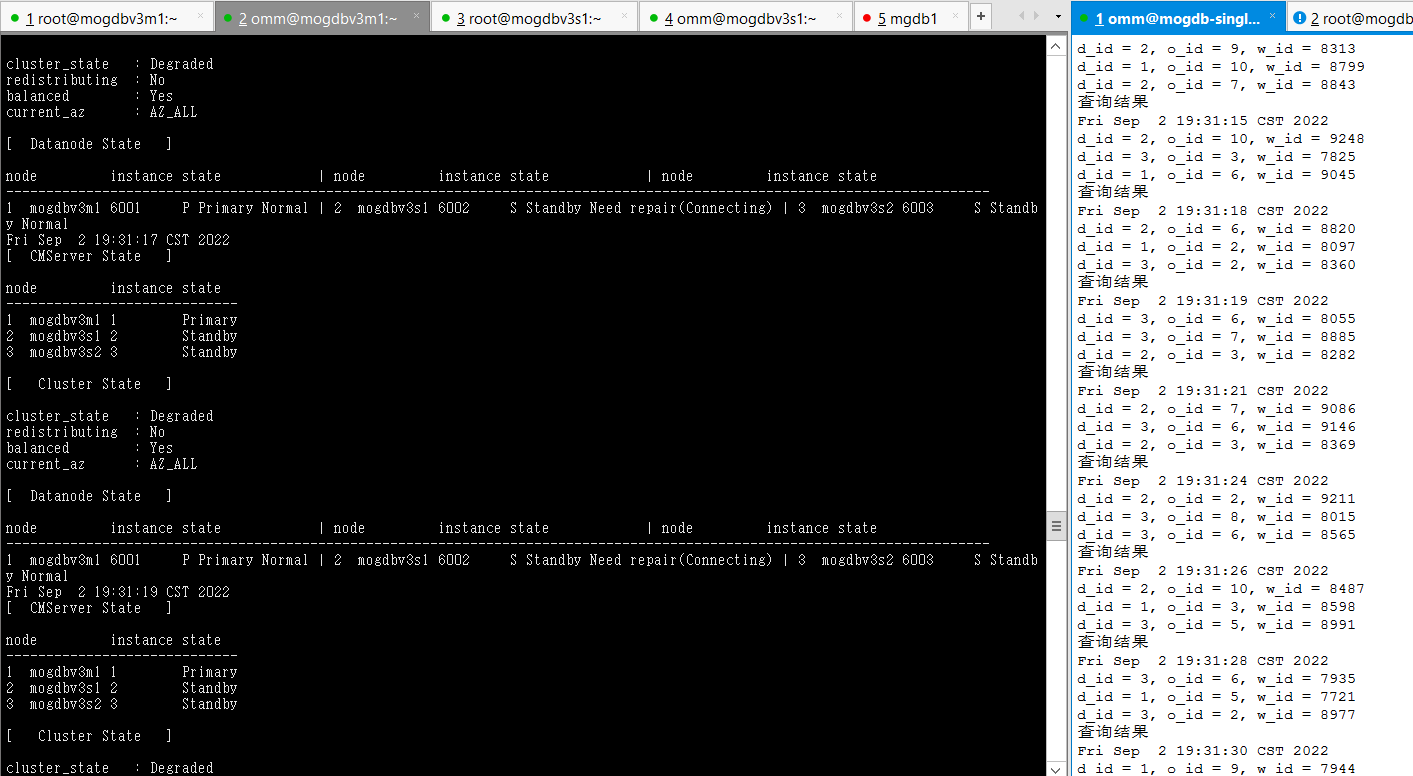

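- Use the fallocate command to fill the MogDB home directory on node mogdbv3s1. The standby crashed because it could not write. CM then tried and failed to restart the database. The standby's state changed to Standby Need repair(Connecting) and finally showed Disk damaged. The workload was not affected during this time.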

[root@mogdbv3s1 ~]# df -h

Filesystem Size Used Avail Use% Mounted on

devtmpfs 7.9G 0 7.9G 0% /dev

tmpfs 7.9G 20K 7.9G 1% /dev/shm

tmpfs 7.9G 73M 7.8G 1% /run

tmpfs 7.9G 0 7.9G 0% /sys/fs/cgroup

/dev/mapper/centos-root 92G 92G 428K 100% /

/dev/sda1 1014M 137M 878M 14% /boot

tmpfs 1.6G 0 1.6G 0% /run/user/1000

tmpfs 1.6G 0 1.6G 0% /run/user/0

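- After file system space was freed, the crashed node started automatically and went through Catchup to return to a normal state. The workload was not affected during recovery.

- Conclusion: as expected.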

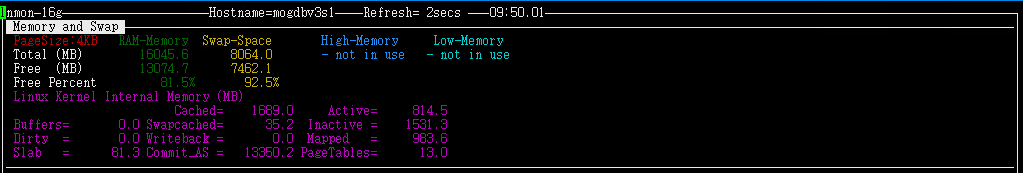

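2.1.6 Primary out of memory

Purpose: simulate insufficient memory on the primary's host.

Method: use the stress command to drive the host's free physical memory and swap down to 0.

Expected result: as memory usage keeps rising, the primary's performance gradually degrades until an OOM occurs and the primary crashes. After the crash, one of the two standbys is promoted to the new primary. After the host recovers from the memory shortage, the crashed primary becomes a standby and automatically catches up on the replication lag.

Test process and conclusion:

- Normal state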

[ CMServer State ]

node instance state

-----------------------------

1 mogdbv3m1 1 Primary

2 mogdbv3s1 2 Standby

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : No

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Standby Normal | 2 mogdbv3s1 6002 S Primary Normal | 3 mogdbv3s2 6003 S Standby Normal

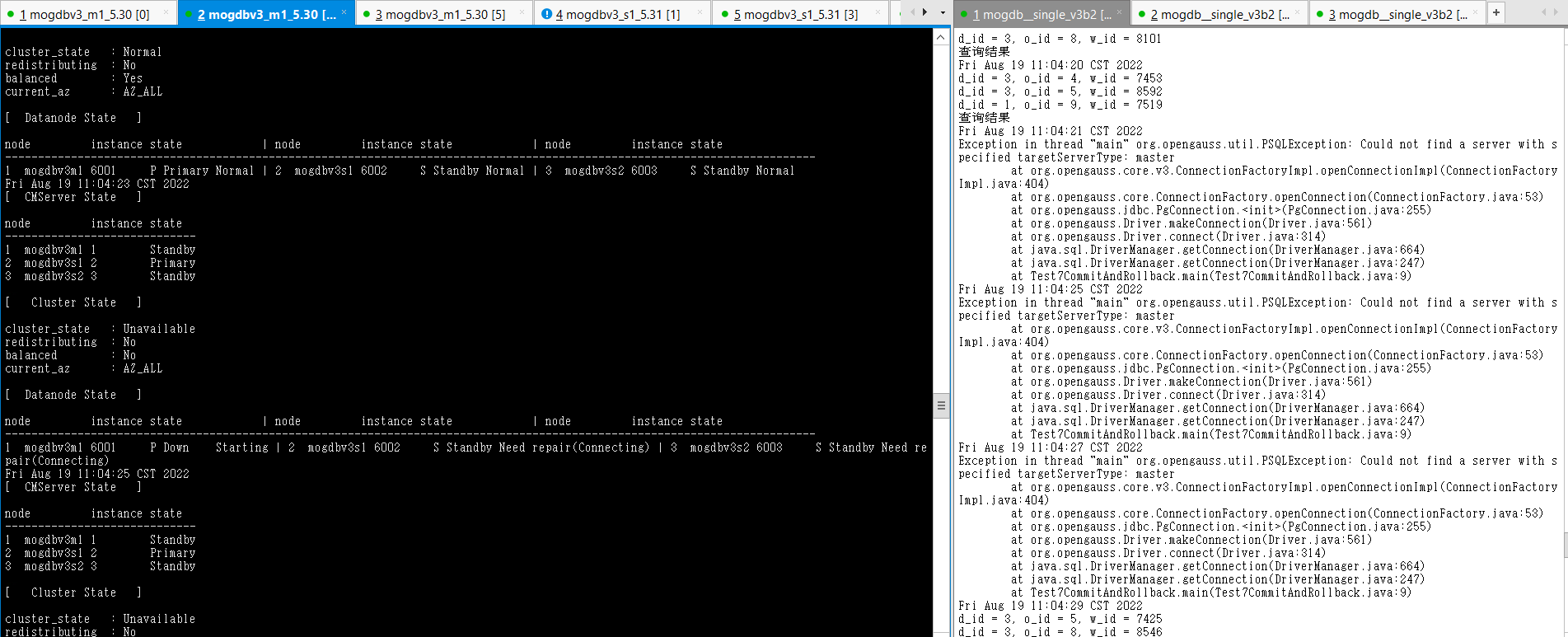

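- On host mogdbv3m1, run stress --vm 200 --vm-bytes 1280M --vm-hang 0 to consume the host's memory down to 0 as quickly as possible. Once physical memory is exhausted and swap starts to be used, the database responds noticeably more slowly, until swap is also exhausted and the host hits OOM. CM detected the node failure and showed the database state as down, which took 26 s. mogdbv3s1 then switched roles to primary and the workload returned to normal. In this scenario the whole switchover took 130 s before the workload returned to normal.

- After the operating system on host mogdbv3s1 triggered OOM, the memory-consuming processes were killed and physical memory returned to normal. Once CM detected that physical memory was back to normal, it automatically restarted the database, which came up as a standby. The workload was not affected during this time.

- Conclusion: as expected. For OLTP workloads with ample physical memory, it is recommended to allocate as little swap as possible or to disable swap. With that configuration, a node that runs out of memory goes down sooner, which speeds up primary election so that the workload recovers faster.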
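For a sense of scale, the stress invocation above requests far more memory than a single worker's figure suggests, because each of the 200 `--vm` workers allocates its own `--vm-bytes` region. The arithmetic is sketched below; treating the `M` suffix as MiB is an assumption, and the class name `StressDemand` is hypothetical.

```java
// Hypothetical helper: total memory requested by "stress --vm N --vm-bytes B".
class StressDemand {
    // Each vm worker allocates its own region, so the total is workers x size.
    static long totalMiB(int workers, long mibPerWorker) {
        return (long) workers * mibPerWorker;
    }

    public static void main(String[] args) {
        long total = totalMiB(200, 1280);
        System.out.println("requested: " + total + " MiB (" + (total / 1024.0) + " GiB)");
    }
}
```

That comes to 256000 MiB, or 250 GiB, which is why physical memory and then swap are drained so quickly in this test.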

2.2 Network failures

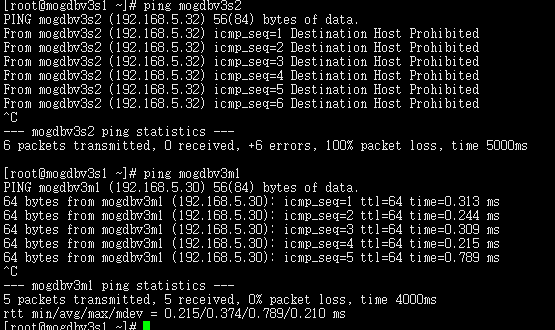

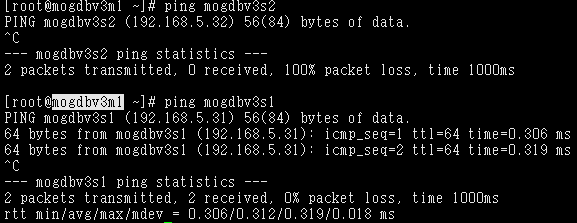

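2.2.1 Network failure between the two datanode and cmnode standbys

Purpose: test a network interruption between the two DataNode standbys and between the two CMServer Node standbys.

Method: with both the DataNode and the CMServer Node on the affected nodes in the standby role, use firewall to isolate the DataNode and CMServer Node network between the two standby nodes. The CMServer Node standbys can still reach the primary.

Expected result: DataNode replication and the database's external workload are not affected. One CMServer standby instance's state changes to down.

Test process and conclusion:

- Normal state, with the firewall not yet enabled.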

node instance state

-----------------------------

1 mogdbv3m1 1 Primary

2 mogdbv3s1 2 Standby

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : Yes

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal | 3 mogdbv3s2 6003 S Standby Normal

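- With the firewall rules configured, start the firewall service on all 3 nodes. mogdbv3s1 and mogdbv3s2 can no longer ping each other. DataNode replication is normal, and the state of each CMServer Node is unaffected.

- After the firewall service was stopped, the cmserver instance on mogdbv3s2 returned to normal. The database workload was not affected.

- Conclusion: as expected.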

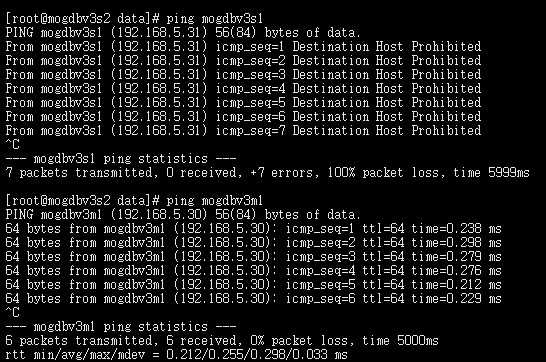

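2.2.2 Network down between the primary and the Potential standby

Purpose: test a network interruption between the DataNode primary and the Potential standby, and between the CMServer Node primary and one standby.

Method: use firewall to isolate the DataNode and CMServer Node network between mogdbv3m1 and mogdbv3s2.

Expected result: DataNode replication between mogdbv3m1 and mogdbv3s2 is interrupted, and the database's external workload is not affected. One CMServer standby instance's state changes to down.

Test process and conclusion:

- Firewall rules configured before the test, and the cluster replication state before the firewall was enabled.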

[omm@mogdbv3m1 ~]$ gs_ctl query

[2022-08-18 17:11:17.783][25525][][gs_ctl]: gs_ctl query ,datadir is /opt/mogdb/data

HA state:

local_role : Primary

static_connections : 2

db_state : Normal

detail_information : Normal

Senders info:

sender_pid : 25003

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 2/B5889CD0

sender_write_location : 2/B5889CD0

sender_flush_location : 2/B5889CD0

sender_replay_location : 2/B5889CD0

receiver_received_location : 2/B5889CD0

receiver_write_location : 2/B5889CD0

receiver_flush_location : 2/B5889CD0

receiver_replay_location : 2/B5889CD0

sync_percent : 100%

sync_state : Sync

sync_priority : 1

sync_most_available : On

channel : 192.168.5.30:26001-->192.168.5.31:53976

sender_pid : 25004

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 2/B5889CD0

sender_write_location : 2/B5889CD0

sender_flush_location : 2/B5889CD0

sender_replay_location : 2/B5889CD0

receiver_received_location : 2/B5889CD0

receiver_write_location : 2/B5889CD0

receiver_flush_location : 2/B5889CD0

receiver_replay_location : 2/B5889CD0

sync_percent : 100%

sync_state : Potential

sync_priority : 1

sync_most_available : On

channel : 192.168.5.30:26001-->192.168.5.32:41596

[ CMServer State ]

node instance state

-----------------------------

1 mogdbv3m1 1 Primary

2 mogdbv3s1 2 Standby

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : Yes

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal | 3 mogdbv3s2 6003 S Standby Normal

[root@mogdbv3m1 ~]# firewall-cmd --zone=drop --list-all

drop (active)

target: DROP

icmp-block-inversion: no

interfaces:

sources: 192.168.5.32

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3m1 ~]# firewall-cmd --zone=trusted --list-all

trusted (active)

target: ACCEPT

icmp-block-inversion: no

interfaces:

sources: 192.168.5.31 192.168.5.20

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3s1 ~]# firewall-cmd --zone=drop --list-all

drop

target: DROP

icmp-block-inversion: no

interfaces:

sources:

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3s1 ~]# firewall-cmd --zone=trusted --list-all

trusted (active)

target: ACCEPT

icmp-block-inversion: no

interfaces:

sources: 192.168.5.20 192.168.5.30 192.168.5.32

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3s2 ~]# firewall-cmd --zone=drop --list-all

drop (active)

target: DROP

icmp-block-inversion: no

interfaces:

sources: 192.168.5.30

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3s2 ~]# firewall-cmd --zone=trusted --list-all

trusted (active)

target: ACCEPT

icmp-block-inversion: no

interfaces: ens192

sources: 192.168.5.20

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

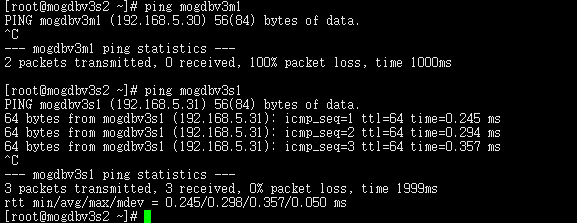

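- Start the firewall with the configured rules on all 3 nodes. The network between the DataNode primary mogdbv3m1 and the Potential standby mogdbv3s2 is interrupted, the mogdbv3s2 standby is in the down state, and the workload is not affected. All CMServer Nodes show a normal state.

- About 2 s after the firewall was turned off on all three nodes, the Potential standby on mogdbv3s2 began to recover automatically. The workload was not affected during this time.

- Conclusion: the test met expectations.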
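The zone listings in this scenario show only the resulting rule sets, not the commands that created them. One possible way to produce equivalent rules is sketched below: it generates `firewall-cmd` invocations that place blocked peers in the `drop` zone and the remaining peers in the `trusted` zone. The exact commands used for the tests are not given in the article, so the use of `--permanent` followed by `--reload` is an assumption, and the class `FirewallPlan` is hypothetical. The example values are the ones listed for mogdbv3m1 above.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical generator for the firewall-cmd calls that isolate one node from selected peers.
class FirewallPlan {
    static List<String> commands(List<String> dropSources, List<String> trustedSources) {
        List<String> cmds = new ArrayList<>();
        // Traffic from these peers is silently discarded.
        for (String ip : dropSources) {
            cmds.add("firewall-cmd --permanent --zone=drop --add-source=" + ip);
        }
        // Traffic from these peers stays fully allowed.
        for (String ip : trustedSources) {
            cmds.add("firewall-cmd --permanent --zone=trusted --add-source=" + ip);
        }
        // Permanent rules take effect after a reload.
        cmds.add("firewall-cmd --reload");
        return cmds;
    }

    public static void main(String[] args) {
        // mogdbv3m1 in this scenario: block mogdbv3s2, keep mogdbv3s1 and the load-test host.
        List<String> cmds = commands(
                Arrays.asList("192.168.5.32"),
                Arrays.asList("192.168.5.31", "192.168.5.20"));
        for (String cmd : cmds) {
            System.out.println(cmd);
        }
    }
}
```

Generating the commands per node from a single description of who is cut off from whom helps keep the rules symmetric, which matters because a one-sided block produces a different failure than the one being tested.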

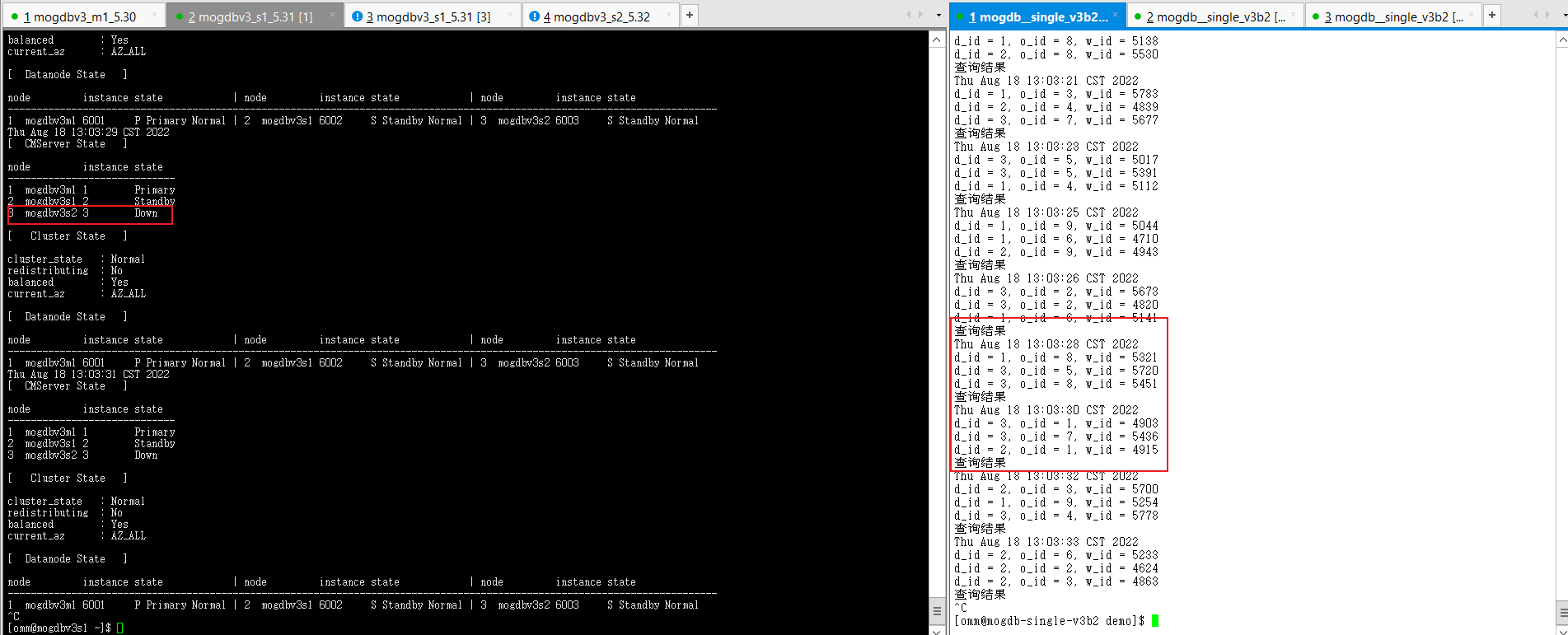

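2.2.3 Network down between the primary and the Sync standby

Purpose: test a network interruption between the DataNode primary and the Sync standby, and between the CMServer Node primary and one standby.

Method: use firewall to isolate the DataNode and CMServer Node network between mogdbv3m1 and mogdbv3s1.

Expected result: DataNode replication and the database's external workload are not affected. One CMServer standby instance shows an abnormal state.

Test process and conclusion:

- Firewall rules configured before the test, and the cluster replication state before the firewall was enabled.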

[root@mogdbv3s1 ~]# firewall-cmd --zone=drop --list-all

drop (active)

target: DROP

icmp-block-inversion: no

interfaces:

sources: 192.168.5.30

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3s1 ~]# firewall-cmd --zone=trusted --list-all

trusted (active)

target: ACCEPT

icmp-block-inversion: no

interfaces:

sources: 192.168.5.20 192.168.5.32

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3m1 ~]# firewall-cmd --zone=drop --list-all

drop (active)

target: DROP

icmp-block-inversion: no

interfaces:

sources: 192.168.5.31

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3m1 ~]# firewall-cmd --zone=trusted --list-all

trusted (active)

target: ACCEPT

icmp-block-inversion: no

interfaces:

sources: 192.168.5.20 192.168.5.32

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[omm@mogdbv3m1 ~]$ gs_ctl query

[2022-08-19 08:07:19.773][31183][][gs_ctl]: gs_ctl query ,datadir is /opt/mogdb/data

HA state:

local_role : Primary

static_connections : 2

db_state : Normal

detail_information : Normal

Senders info:

sender_pid : 7037

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 2/D3B021D8

sender_write_location : 2/D3B021D8

sender_flush_location : 2/D3B021D8

sender_replay_location : 2/D3B021D8

receiver_received_location : 2/D3B021D8

receiver_write_location : 2/D3B021D8

receiver_flush_location : 2/D3B021D8

receiver_replay_location : 2/D3B021D8

sync_percent : 100%

sync_state : Sync

sync_priority : 1

sync_most_available : On

channel : 192.168.5.30:26001-->192.168.5.31:54904

sender_pid : 7039

local_role : Primary

peer_role : Standby

peer_state : Normal

state : Streaming

sender_sent_location : 2/D3B021D8

sender_write_location : 2/D3B021D8

sender_flush_location : 2/D3B021D8

sender_replay_location : 2/D3B021D8

receiver_received_location : 2/D3B021D8

receiver_write_location : 2/D3B021D8

receiver_flush_location : 2/D3B021D8

receiver_replay_location : 2/D3B00A10

sync_percent : 100%

sync_state : Potential

sync_priority : 1

sync_most_available : On

channel : 192.168.5.30:26001-->192.168.5.32:44410

Receiver info:

No information

[ CMServer State ]

node instance state

-----------------------------

1 mogdbv3m1 1 Primary

2 mogdbv3s1 2 Standby

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : Yes

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal | 3 mogdbv3s2 6003 S Standby Normal

-

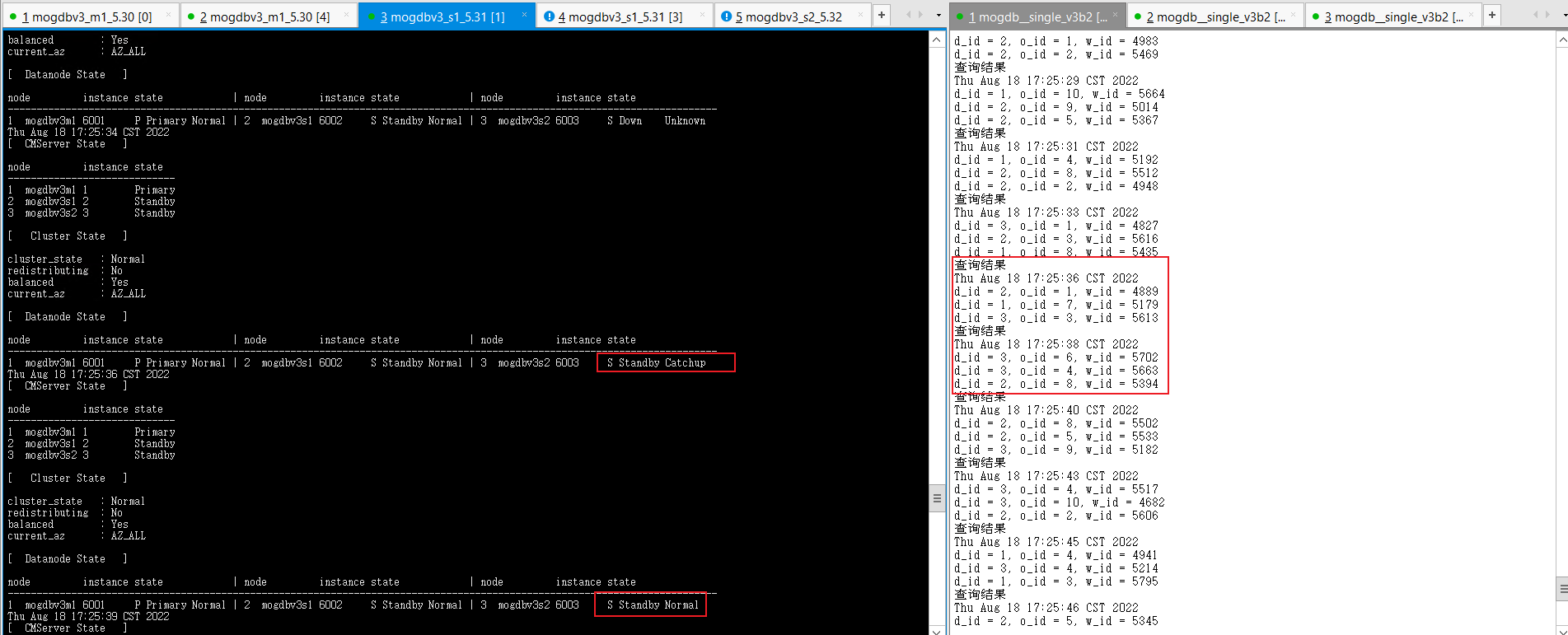

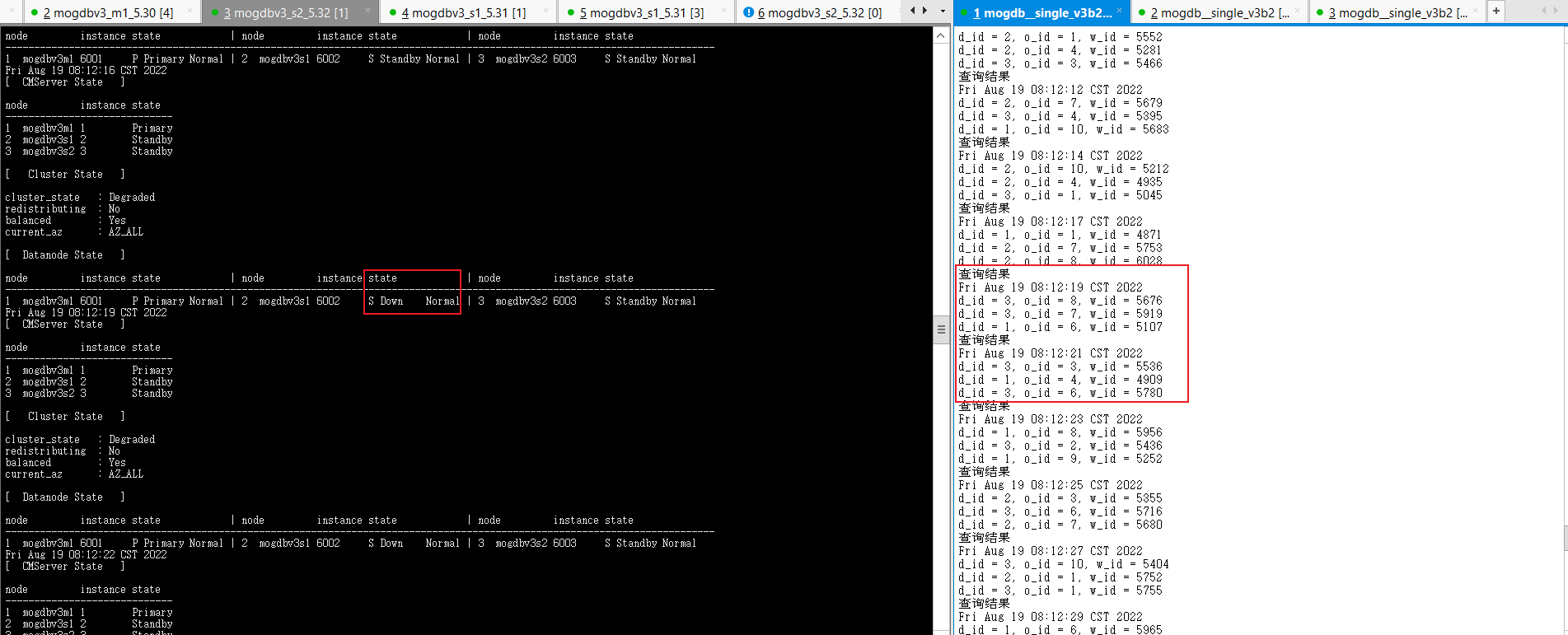

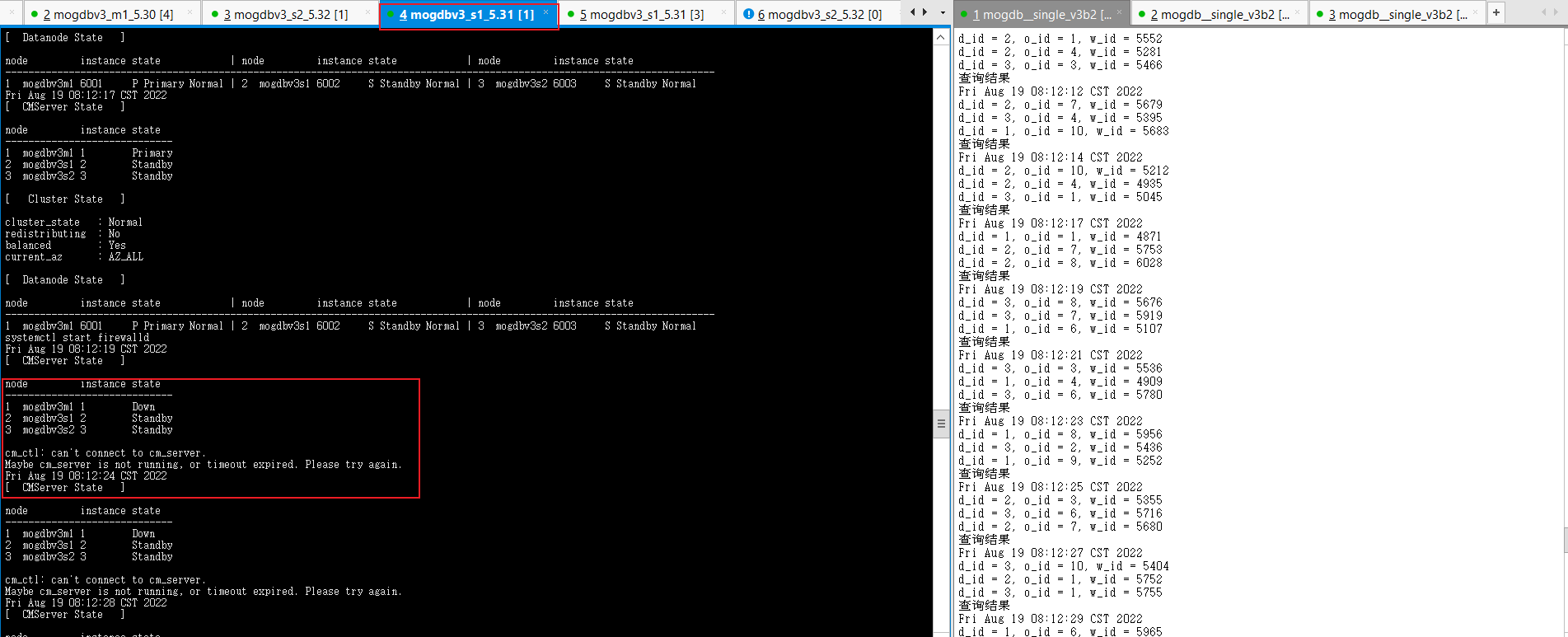

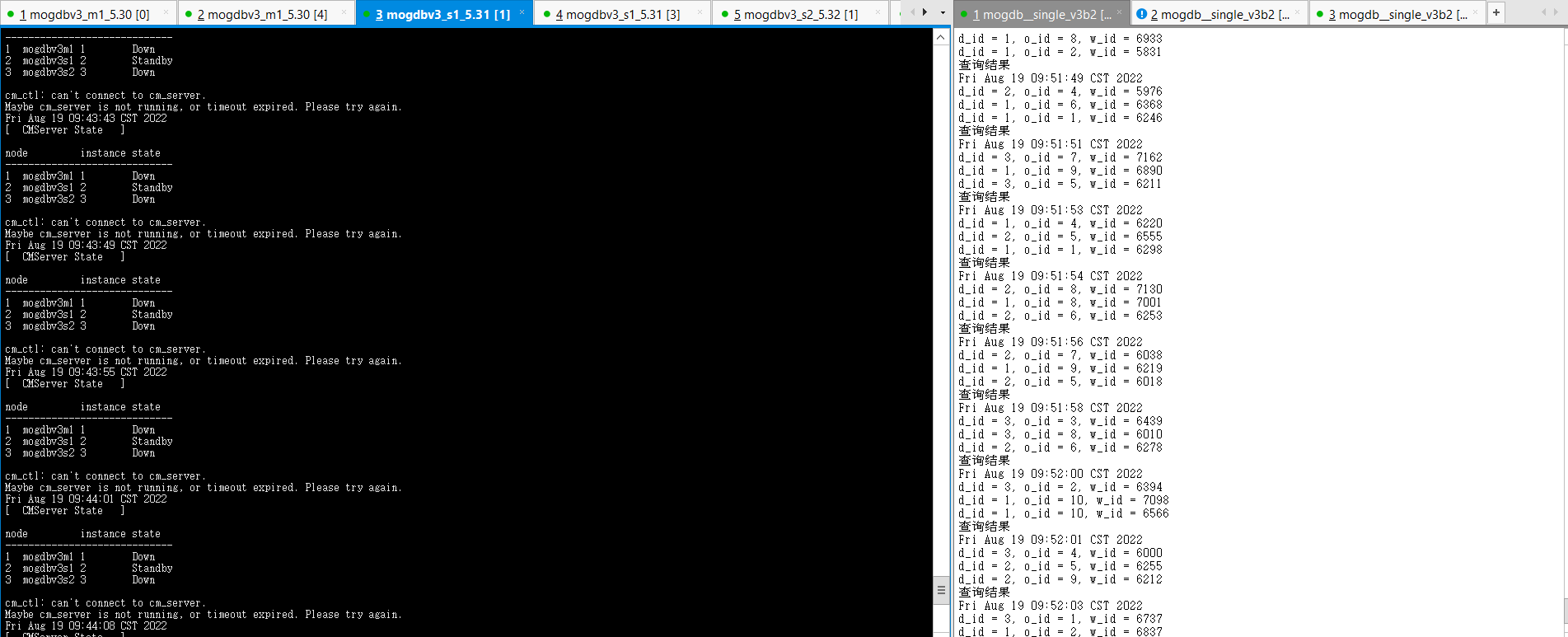

啟動3個節(jié)點已配置規(guī)則的firewall,DataNode主節(jié)點mogdbv3m1和Sync從節(jié)點mogdbv3s1之間網(wǎng)絡(luò)中斷,CM集群狀態(tài)中顯示mogdbv3s1從庫處于down狀態(tài),期間業(yè)務(wù)未受影響。從mogdbv3s2觀察各個CMServer Node狀態(tài)均正常,但實際在mogdbv3s1執(zhí)行cm_clt命令報無法連接cm_server錯誤。

-

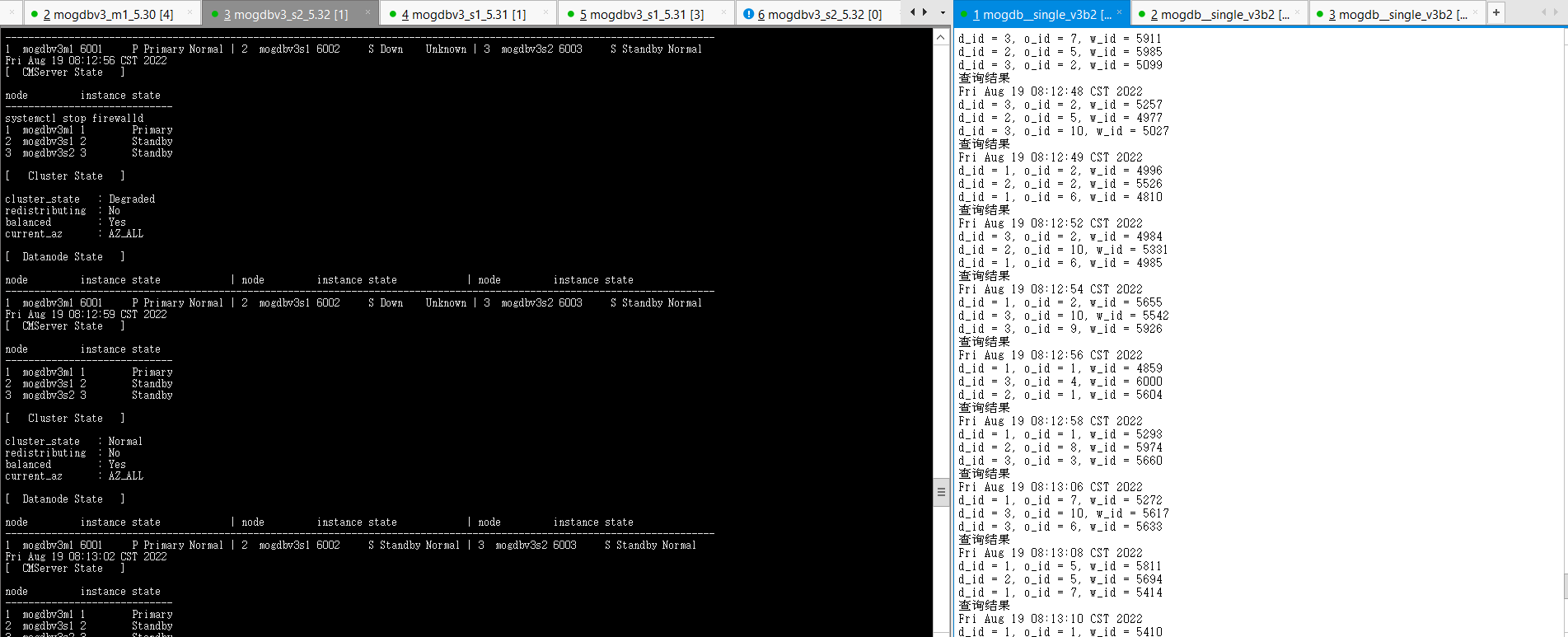

關(guān)閉三個節(jié)點網(wǎng)絡(luò)防火墻約2s后,mogdbv3s1節(jié)點的Sync從庫開始自動恢復(fù),期間業(yè)務(wù)未受影響。

-

結(jié)論:符合預(yù)期。

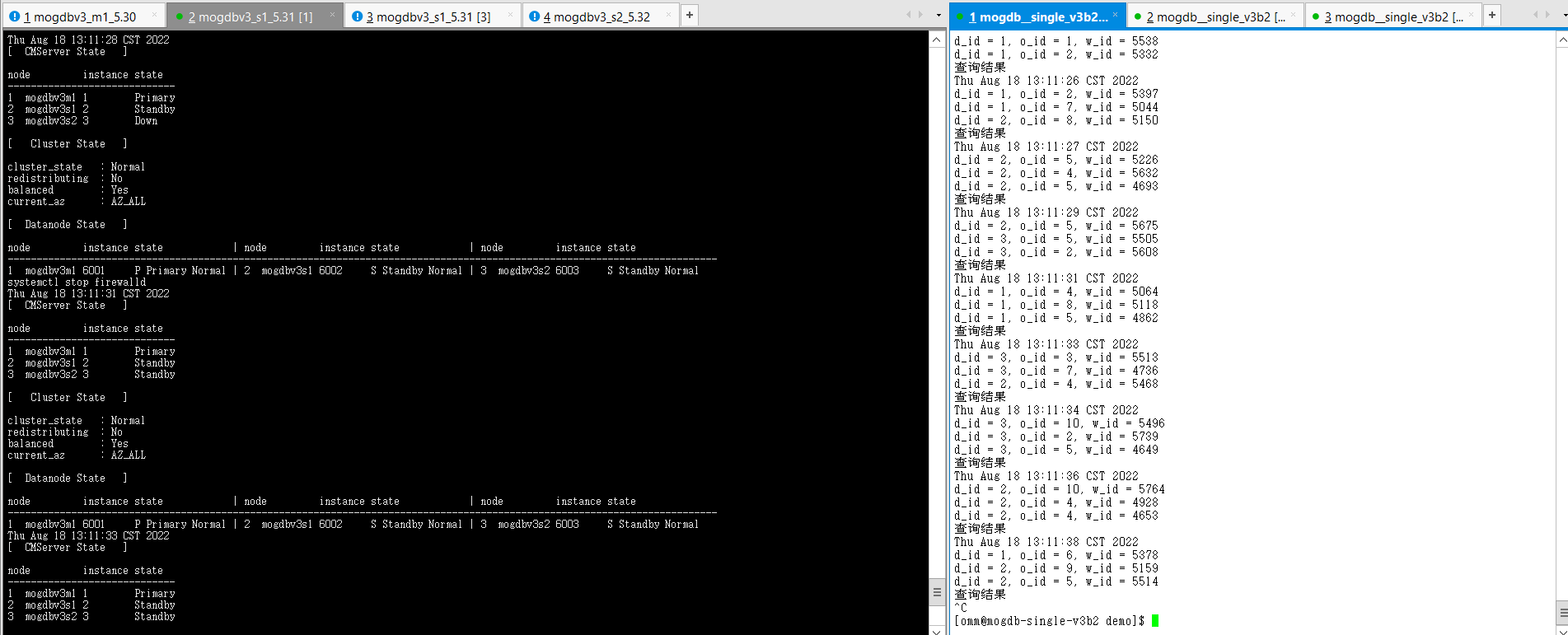

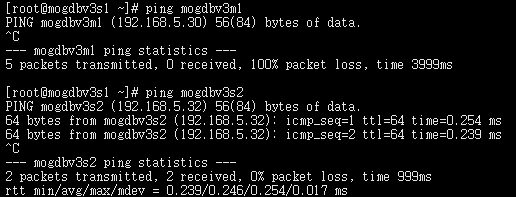

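2.2.5 Network down among all three servers

Purpose: test a scenario in which the DataNode primary, the Potential standby, and the Sync standby cannot reach one another, and the CMServer Node primary and its 2 standbys are likewise cut off from one another.

Method: use firewall to isolate the DataNode and CMServer Node network among mogdbv3m1, mogdbv3s1, and mogdbv3s2.

Expected result: DataNode replication becomes abnormal and every CMServer shows an abnormal state, while the database's external workload is not affected.

Test process and conclusion:

- Firewall rules configured before the test, and the cluster replication state before the firewall was enabled.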

[root@mogdbv3m1 ~]# firewall-cmd --zone=drop --list-all

drop (active)

target: DROP

icmp-block-inversion: no

interfaces:

sources: 192.168.5.31 192.168.5.32

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3m1 ~]# firewall-cmd --zone=trusted --list-all

trusted (active)

target: ACCEPT

icmp-block-inversion: no

interfaces:

sources: 192.168.5.20

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3s1 ~]# firewall-cmd --zone=drop --list-all

drop (active)

target: DROP

icmp-block-inversion: no

interfaces:

sources: 192.168.5.30 192.168.5.32

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[root@mogdbv3s1 ~]# firewall-cmd --zone=trusted --list-all

trusted (active)

target: ACCEPT

icmp-block-inversion: no

interfaces:

sources: 192.168.5.20

services:

ports:

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

[ CMServer State ]

node instance state

-----------------------------

1 mogdbv3m1 1 Primary

2 mogdbv3s1 2 Standby

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : Yes

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal | 3 mogdbv3s2 6003 S Standby Normal

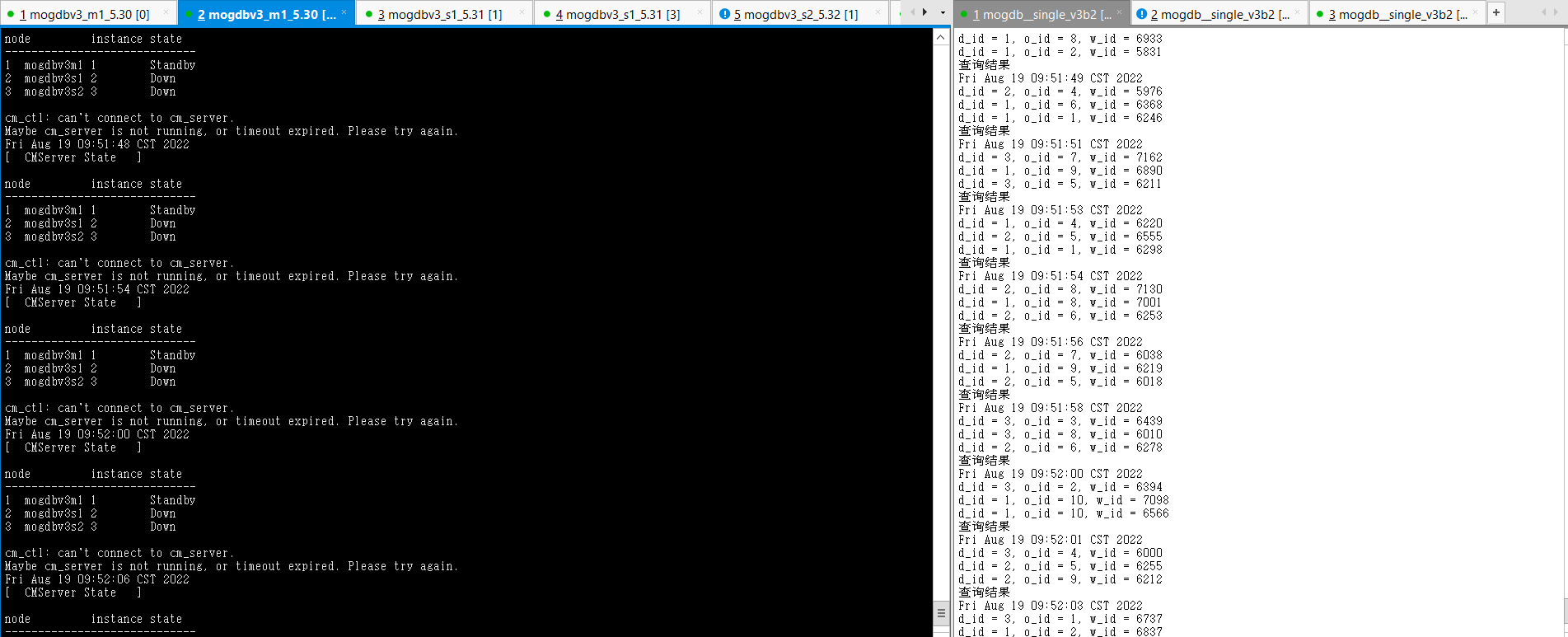

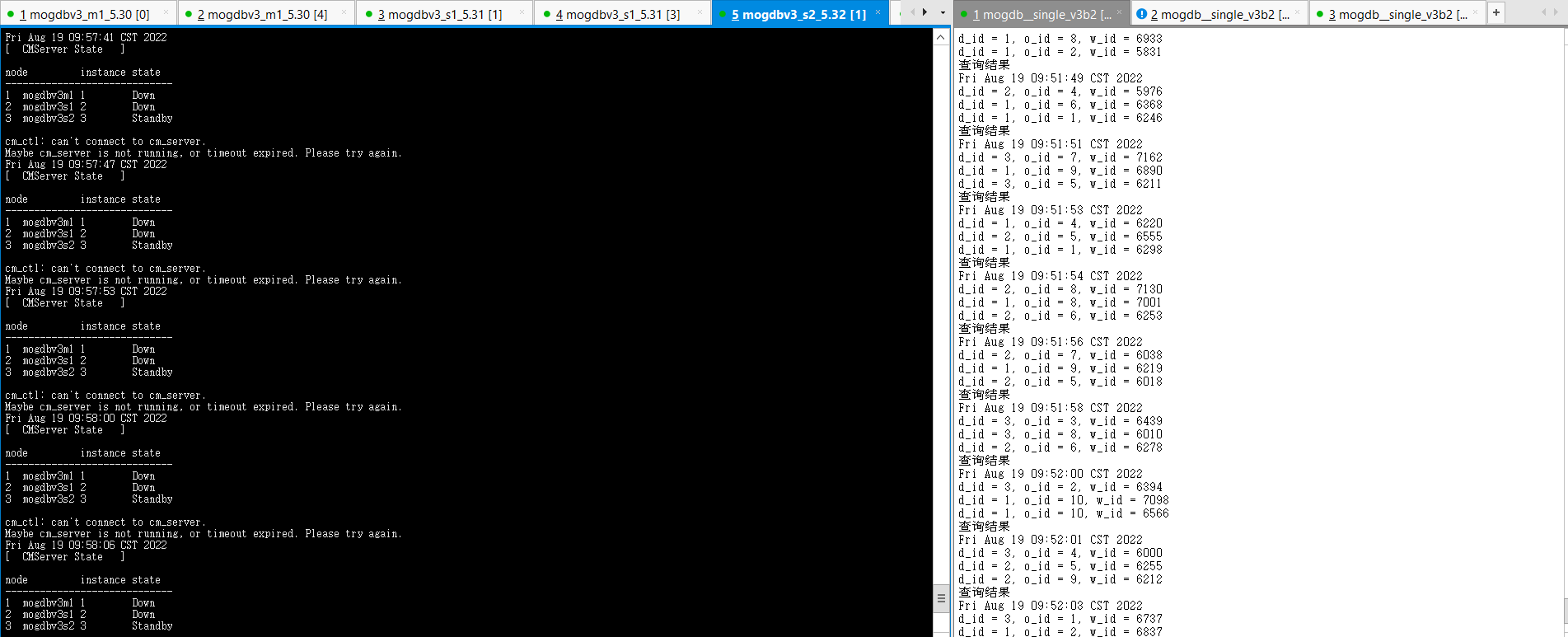

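- Start the firewall with the configured rules on all 3 nodes. The network among the DataNode primary mogdbv3m1 and the standbys mogdbv3s1 and mogdbv3s2 is interrupted. When cm_ctl is used to check the cluster, each node can show only its own information, because with the network down it cannot obtain information about the other 2 nodes. Running gs_ctl query on each node shows the database running normally, with the standbys reporting Need repair. The workload ran normally while the firewall was up, apart from a brief 2 s block at the moment the firewall was started.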

##mogdbv3m1

[omm@mogdbv3m1 ~]$ gs_ctl query

[2022-08-19 09:57:22.351][17204][][gs_ctl]: gs_ctl query ,datadir is /opt/mogdb/data

HA state:

local_role : Primary

static_connections : 2

db_state : Normal

detail_information : Normal

Senders info:

No information

Receiver info:

No information

##mogdbv3s1

[omm@mogdbv3s1 ~]$ gs_ctl query

[2022-08-19 09:57:07.613][24975][][gs_ctl]: gs_ctl query ,datadir is /opt/mogdb/data

HA state:

local_role : Standby

static_connections : 2

db_state : Need repair

detail_information : Disconnected

Senders info:

No information

Receiver info:

No information

##mogdbv3s2

[omm@mogdbv3s2 ~]$ gs_ctl query

[2022-08-19 09:58:29.410][19052][][gs_ctl]: gs_ctl query ,datadir is /opt/mogdb/data

HA state:

local_role : Standby

static_connections : 2

db_state : Need repair

detail_information : Disconnected

Senders info:

No information

Receiver info:

No information

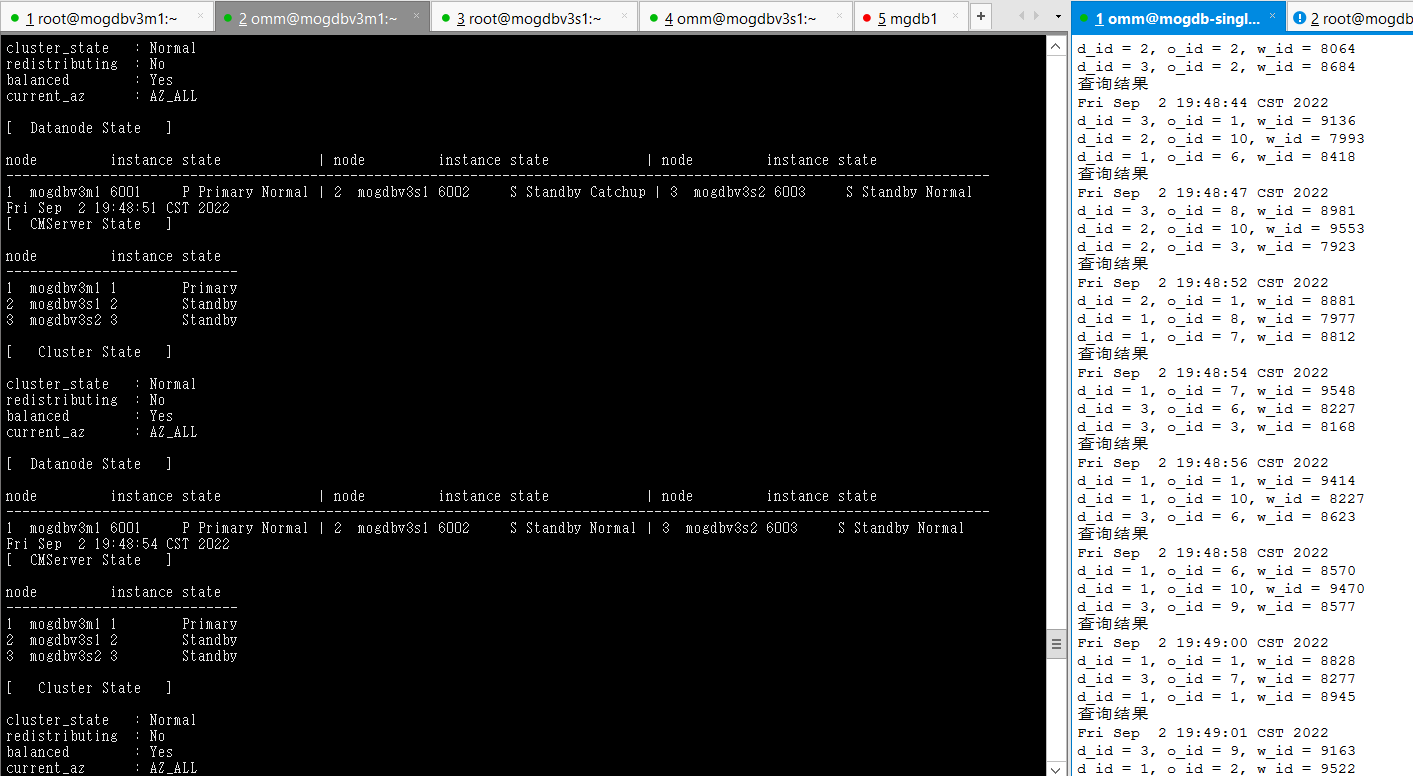

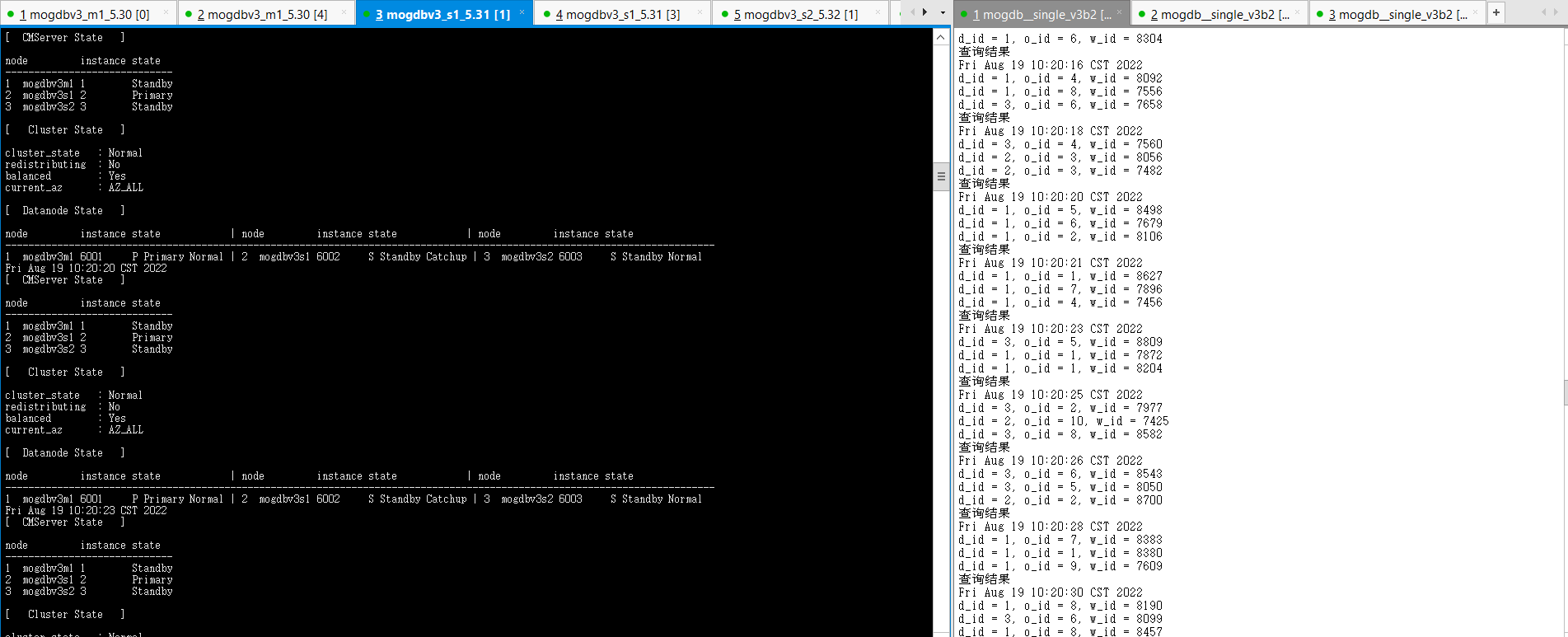

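- About 48 s after the firewall was turned off on all three nodes, cm server on every node returned to normal and cm_ctl could be used to query the state again. The two standbys mogdbv3s1 and mogdbv3s2 started catchup and recovered automatically. The workload was not affected during this time.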

[omm@mogdbv3m1 ~]$ gs_ctl query

[2022-08-19 10:10:47.357][20858][][gs_ctl]: gs_ctl query ,datadir is /opt/mogdb/data

HA state:

local_role : Primary

static_connections : 2

db_state : Normal

detail_information : Normal

Senders info:

sender_pid : 20663

local_role : Primary

peer_role : Standby

peer_state : Catchup

state : Catchup

sender_sent_location : 3/38800000

sender_write_location : 5/DBC08A8

sender_flush_location : 5/DBC08A8

sender_replay_location : 5/DBC08A8

receiver_received_location : 3/37000000

receiver_write_location : 3/34000000

receiver_flush_location : 3/34000000

receiver_replay_location : 3/175C2948

sync_percent : 7%

sync_state : Potential

sync_priority : 1

sync_most_available : On

channel : 192.168.5.30:26001-->192.168.5.32:56034

sender_pid : 20664

local_role : Primary

peer_role : Standby

peer_state : Catchup

state : Catchup

sender_sent_location : 3/35800000

sender_write_location : 5/DBC08A8

sender_flush_location : 5/DBC08A8

sender_replay_location : 5/DBC08A8

receiver_received_location : 3/347FFFF9

receiver_write_location : 3/32000000

receiver_flush_location : 3/32000000

receiver_replay_location : 3/10D8BA40

sync_percent : 7%

sync_state : Potential

sync_priority : 1

sync_most_available : On

channel : 192.168.5.30:26001-->192.168.5.31:37990

Receiver info:

No information

- Conclusion: as expected.

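2.3 Database failures

2.3.1 Instance crash

Purpose: test an instance crash caused by an error that the primary database hits while running.

Method: use the kill -9 command to kill the mogdb process directly.

Expected result: after the primary goes down and the workload is interrupted for some seconds, one of the two standbys is promoted to the new primary and the workload returns to normal. CM immediately restarts the killed former primary, which becomes a standby and automatically catches up on the replication lag.

Test process and conclusion:

- Normal state before the test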

[ CMServer State ]

node instance state

-----------------------------

1 mogdbv3m1 1 Standby

2 mogdbv3s1 2 Primary

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : Yes

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal | 3 mogdbv3s2 6003 S Standby Normal

-

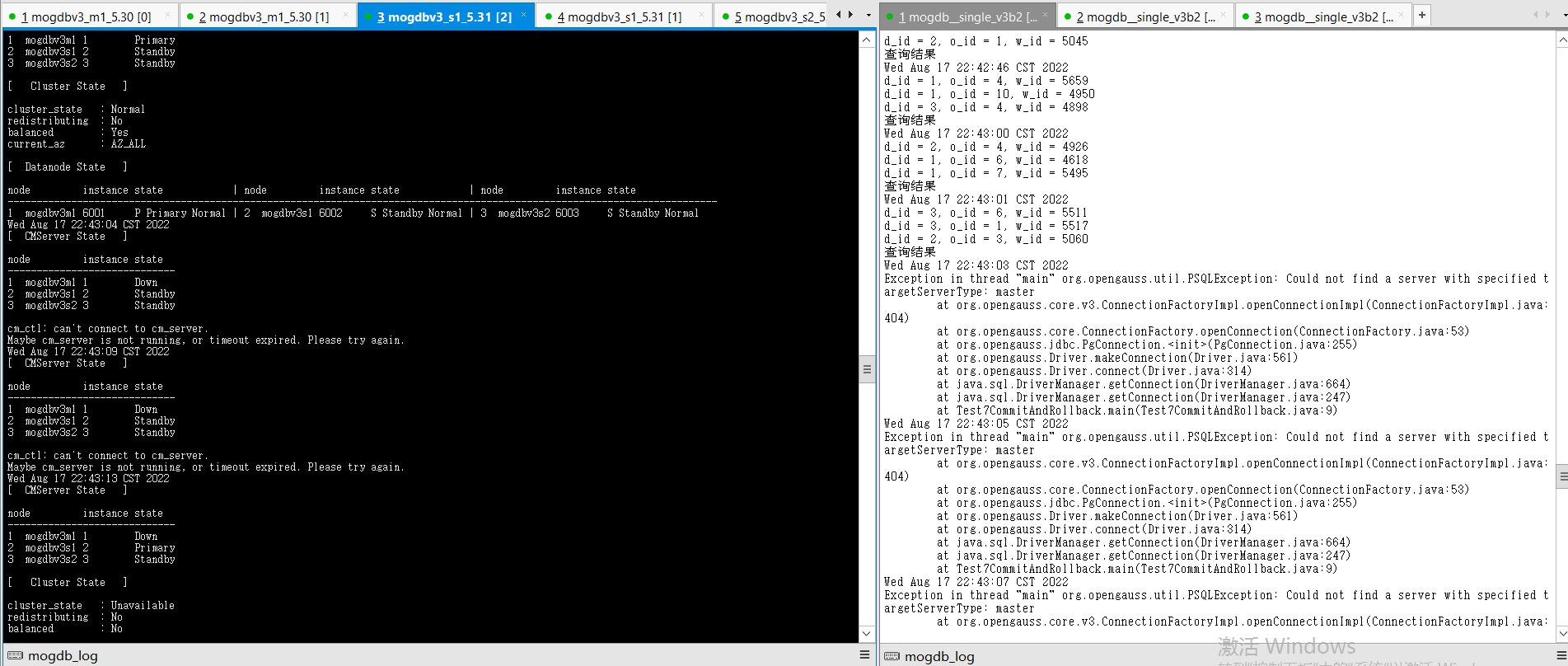

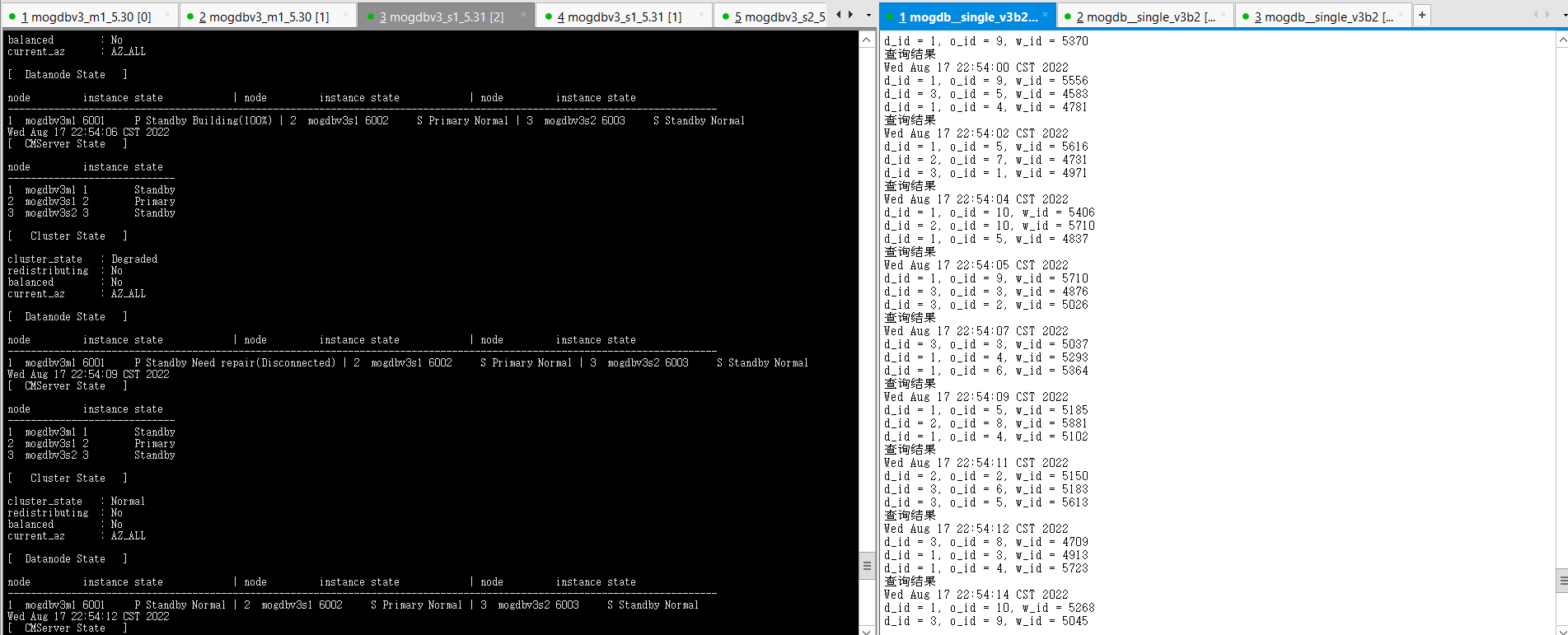

使用kill -9 殺掉mogdbv3m1節(jié)點的mogdb進程,cm檢測到主庫宕機,選擇mogdbv3s1同步從庫提升為主庫,業(yè)務(wù)中斷8s。被kill掉的原有主庫自動拉起后,降為備庫。

-

結(jié)論:符合預(yù)期

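2.3.2 Files deleted

Purpose: test the failure caused by data files being deleted on the primary.

Method: on a primary, run mv base base_bak on the base directory under PGDATA to simulate data files being deleted.

Expected result: after the primary's files are deleted and the workload is interrupted for some seconds, one of the two standbys is promoted to the new primary and the workload returns to normal.

Test process and conclusion:

- Normal state before the test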

node instance state

-----------------------------

1 mogdbv3m1 1 Standby

2 mogdbv3s1 2 Primary

3 mogdbv3s2 3 Standby

[ Cluster State ]

cluster_state : Normal

redistributing : No

balanced : Yes

current_az : AZ_ALL

[ Datanode State ]

node instance state | node instance state | node instance state

---------------------------------------------------------------------------------------------------------------------------

1 mogdbv3m1 6001 P Primary Normal | 2 mogdbv3s1 6002 S Standby Normal | 3 mogdbv3s2 6003 S Standby Normal

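- On node mogdbv3m1, run mv base base_bak under PGDATA to simulate the files being deleted. The workload reported errors immediately afterwards, while cm had not yet detected the database failure. About 79 s later cm finished promoting a new primary and the workload returned to normal.

- Conclusion: the process met expectations, but CM was slow to detect the deleted files on the primary and trigger a new primary election, so the workload was interrupted for a relatively long time.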